ChatGPT-5 Still Hallucinates: OpenAI Explains Why

1. Introduction

Generative AI tools have improved rapidly in recent years. Systems like ChatGPT now assist with research, writing, and customer support across many industries. But one long-standing issue remains: hallucinations.

Hallucinations occur when an AI system generates information that sounds accurate but is actually incorrect. Recent AI safety research from OpenAI shows that hallucinations still appear even in newer models such as ChatGPT-5. The researchers say the problem stems less from technical errors than from how these systems are trained and evaluated, which often rewards plausible answers over admissions of uncertainty.

2. A Problem Hiding in Plain Sight

People have joked for a while about chatbots “making things up.” The joke stuck because it kept happening. Ask a question and the system might respond with a neat, confident explanation, except the details aren’t real.

This is what researchers call hallucination. The AI produces text that sounds perfectly reasonable but isn’t actually factual.

Sometimes the mistakes are small. A nonexistent book title. A misquoted study. But other cases have been more serious. In 2023, a New York lawyer submitted a court brief that cited several cases generated by ChatGPT. None of them existed. The court later sanctioned the attorney after discovering the citations were fabricated.

That example made headlines, but it wasn’t an isolated event. Situations like this show why AI fabrications can be harder to notice than people expect. The language sounds confident, and to many readers, it looks just like a normal answer.

3. OpenAI’s Experiment: Confident, Wrong, and Repeated

To better understand the issue, OpenAI’s AI safety researchers ran a simple test. Instead of complex technical benchmarks, they asked the model straightforward factual questions about a well-known computer scientist, Adam Tauman Kalai.

One question was about the title of his PhD dissertation. The model did not say it was unsure. Instead, it generated a title that sounded plausible—but it was incorrect. When the researchers asked again, the system produced a different title. That one was wrong too.

A similar pattern appeared when the model was asked about Kalai’s birthday. Again, the system offered confident answers. And again, the dates were inaccurate.

The experiment illustrated a broader pattern behind ChatGPT-5 hallucinations. When the system lacks reliable information, it often produces a reasonable-sounding guess rather than simply saying it doesn’t know.

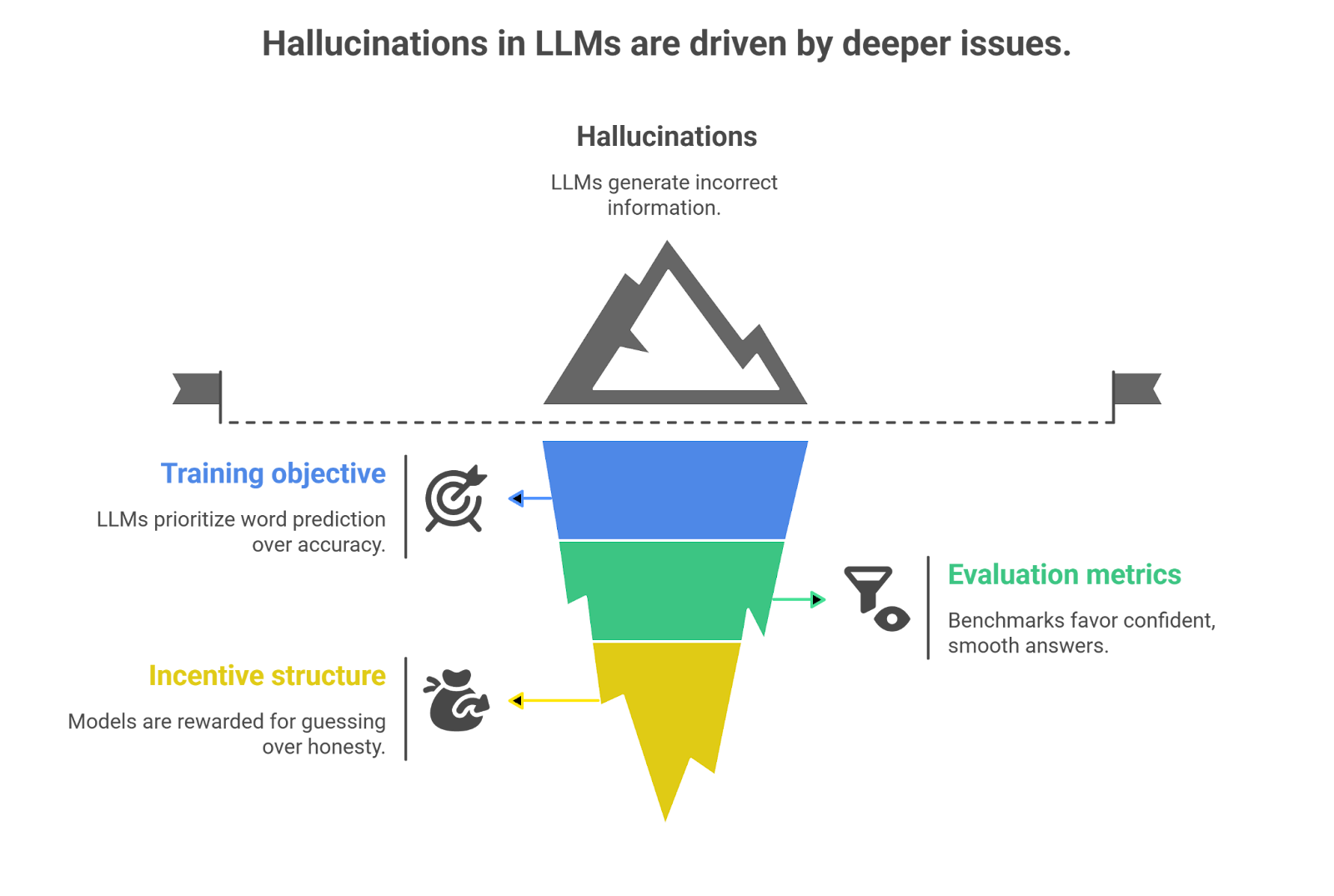

4. Why Hallucinations Persist

If hallucinations are well known, why haven’t they disappeared? The explanation, according to OpenAI’s AI safety research, lies in how large language models are built and evaluated.

First, the training objective focuses on predicting the next word in a sentence. The system learns patterns of language, not the truth of the information itself. A sentence that sounds believable can still be incorrect.
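To make the next-word point concrete, here is a toy sketch. It is not how ChatGPT is built: a bigram counter is a drastic simplification of a language model, and the three-sentence corpus is invented for illustration. It only shows that a predictor trained on word frequencies will repeat whatever continuation it saw most often, whether or not that continuation is true.

```python
from collections import Counter, defaultdict

def train_bigram(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical corpus: the wrong claim appears more often than the right one.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

model = train_bigram(corpus)
print(predict_next(model, "is"))  # prints "sydney": frequent, fluent, and wrong
```

The predictor has no notion of which sentence is correct. It simply returns the pattern it encountered most often.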

Second, evaluation methods often reward fluent responses. During testing, answers that read smoothly tend to score higher than replies that simply say “I don’t know.” Over time, this encourages models to keep producing answers rather than admitting uncertainty.

There is also an incentive problem. From the model’s perspective, a confident guess may receive better feedback than silence. Gradually, this behavior produces the overconfidence that users recognize as hallucination.
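The incentive argument can be sketched with a little arithmetic. The scoring rule below is an assumption made for illustration, not OpenAI’s actual evaluation: a correct answer earns 1 point, abstaining earns 0, and a wrong answer costs a configurable `wrong_penalty`. The function names are hypothetical as well.

```python
ABSTAIN_SCORE = 0.0  # assumed score for answering "I don't know"

def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score of answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty

def best_action(p_correct, wrong_penalty=0.0):
    """Pick whichever action has the higher expected score."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"

for p in (0.1, 0.5, 0.9):
    print(
        f"p_correct={p}: "
        f"no penalty -> {best_action(p)}, "
        f"penalty of 1 -> {best_action(p, wrong_penalty=1.0)}"
    )
```

Under these assumptions, when wrong answers cost nothing, guessing beats abstaining even at a 10% chance of being right. Once a wrong answer costs a point, guessing only pays off when the model is more likely right than wrong.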

5. The Stakes for Businesses and End Users

At first glance, a chatbot getting something wrong doesn’t seem like a big deal. It happens. People shrug and move on. But the story changes once AI starts showing up inside real products and services.

Picture a healthcare assistant giving advice about symptoms. If the information is off—even slightly—the consequences could reach far beyond a confusing answer. Now think about legal work. Accuracy matters there in a very literal way. Courts expect real citations, real cases. The moment a system invents one, credibility disappears.

Finance brings another layer of risk. A customer asking about loan conditions or account rules might receive details that sound perfectly reasonable, except they were never part of the policy. The same thing can happen in customer support when automated systems promise refunds or benefits that the company never intended to offer.

This is why discussions around ChatGPT hallucinations have become more serious inside organizations. What once looked like a quirky AI behavior now raises a practical question: if a system sounds confident but can still be wrong, how much should people rely on it?

6. Industry Reactions

Some engineers now argue that systems should be rewarded for admitting uncertainty instead of filling gaps with guesses. As one developer put it during the discussion, the goal should not just be intelligence, but a kind of “humility” in how models respond.

Regulators are also paying attention. In Europe, the EU AI Act already requires transparency for high-risk AI systems, especially in areas such as healthcare, finance, and legal services. AI tools that hallucinate in those settings could fall directly within its scope.

Businesses experimenting with generative AI are watching closely as well. Many companies see the potential of the technology, but the AI overconfidence issue remains a major concern when these systems are used in real-world decision making.

7. Conclusion

So where does this leave the industry?

For years, progress in generative AI was measured mostly by how natural the responses sounded. If the system produced fluent text, that was often considered a success. But the conversation is slowly shifting. Researchers are now paying closer attention to accuracy and reliability.

The recent findings from OpenAI’s AI safety research show that ChatGPT-5 hallucinations are not simply random glitches. They grow out of the way language models are trained and evaluated. In other words, the system sometimes learns that sounding confident is rewarded more than admitting uncertainty.

For businesses and everyday users, that insight matters. AI tools can still be valuable, but their answers should not automatically be treated as facts. Until the technology improves further, a careful approach, checking important information rather than trusting the first response, remains the safest way to use these systems.
