AI Red Teaming in Agriculture & AgTech: Securing Crop Prediction, Yield Optimization, and Autonomous Farming AI


Key Takeaways

Data is the biggest risk in farming AI

Small errors can impact big decisions

AI already drives key farm operations

Red teaming exposes hidden weaknesses

Secure AI to protect farm outcomes

1. When Farming AI Goes Wrong

AI Is Quietly Running More Farm Decisions

Walk through a modern large farm operation and you’ll notice something interesting: many of the important decisions are no longer purely human. Software forecasts crop yield months in advance. Drone imagery highlights stressed crops before farmers can see it from the ground. Soil sensors stream constant data into predictive models that recommend irrigation or fertilizer levels.

These systems save time and increase efficiency. They also influence planting schedules, budgeting, and harvest expectations long before the first crop is collected.

The Weak Spot: Data

But agricultural AI has a fragile dependency—data.

Weather feeds, satellite imagery, sensor networks, and historical crop datasets all flow into these models. If something in that pipeline changes—bad readings, manipulated inputs, corrupted records—the predictions can quietly drift away from reality.

And the system usually doesn’t raise a flag.

Small Changes, Big Impact

Security researchers studying machine-learning systems have demonstrated something worrying: tiny modifications to input data can shift model predictions significantly. Adjust a few environmental variables or tweak satellite imagery slightly, and yield forecasts can move in the wrong direction.

In large agricultural planning systems, even small prediction errors can ripple through supply forecasts and commodity planning.

Why Testing Matters

This is exactly why AI red teaming in agriculture is becoming important.

Instead of assuming the model will behave correctly, security teams intentionally try to break it by manipulating datasets, testing unusual scenarios, and probing model behavior. The goal isn’t to damage the system. It’s to find weaknesses before someone else does.

2. Where AI Is Already Used in Agriculture

AI tools have slipped into farming faster than most people realize. Not as flashy robots everywhere—but as quiet prediction engines running in the background of everyday farm decisions.

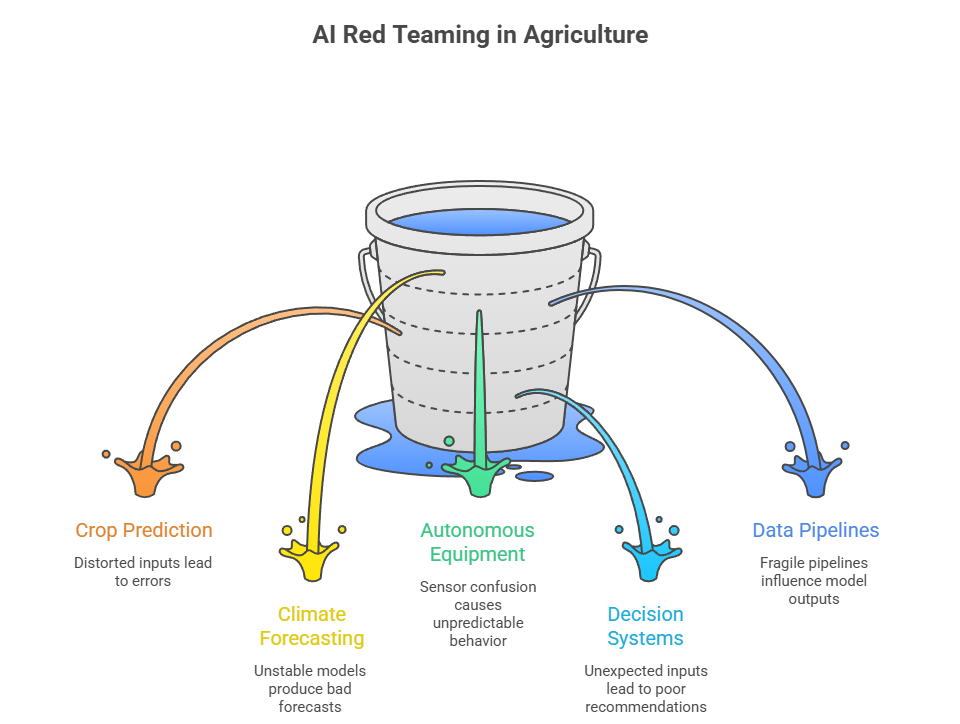

Crop Yield Forecasting Systems

Crop forecasting models try to answer a simple question: how much will this field produce?

To estimate that, the systems pull together weather history, soil data, satellite imagery, and past harvest records. The model looks for patterns humans would miss.

But here's the catch. When the underlying data shifts—even slightly—the predictions can drift. That’s where crop prediction AI vulnerabilities start showing up.

Climate Forecasting for Farm Planning

Farmers have always watched the weather. Now algorithms do it with them.

Many platforms run climate prediction AI models that simulate rainfall trends, drought probability, and seasonal temperature shifts. These outputs shape irrigation plans and crop choices.

If the model misreads climate signals, the farm may plan an entire season around the wrong forecast.

Automation Through Agricultural Robotics

Then there’s the machinery.

Self-driving tractors, crop-monitoring drones, and robotic harvesters rely on farm robotics AI to navigate fields and identify plants or obstacles.

Most of the time it works smoothly. But if sensors misread the environment—or if the AI model behaves unexpectedly—the machine may react in ways operators didn’t anticipate.

And in agriculture, small operational errors can quickly become expensive ones.

3. How AI Red Teams Test Agricultural Systems

AI red teaming looks at agricultural AI the same way an attacker would. Instead of assuming the model behaves correctly, testers deliberately push it into unusual situations—messy data, strange inputs, broken sensors.

That’s often where problems appear.

3.1 Manipulating Crop Prediction Models

Crop forecasting systems depend on huge environmental datasets. Weather readings, soil measurements, satellite images—everything feeds into the model.

A red team may try altering those inputs. Maybe rainfall records are slightly distorted. Maybe satellite imagery is modified before reaching the prediction pipeline.

The model still produces an answer. The problem is that the answer can now be wrong, exposing crop prediction AI vulnerabilities that routine accuracy testing on clean data rarely reveals.
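To make the idea concrete, here is a minimal sketch of an input-perturbation probe. The `toy_yield_model` function, its coefficients, and the feature names are hypothetical stand-ins, not a real forecasting system; in practice the probe would wrap whatever prediction call the platform exposes.

```python
from typing import Callable, Dict

Features = Dict[str, float]


def toy_yield_model(x: Features) -> float:
    """Hypothetical stand-in for a real forecaster (tonnes per hectare)."""
    return 2.0 + 0.004 * x["rainfall_mm"] + 4.0 * x["ndvi"] - 0.05 * x["heat_days"]


def perturbation_probe(
    model: Callable[[Features], float],
    baseline: Features,
    rel_change: float = 0.05,
) -> Dict[str, float]:
    """Nudge each input up and down by rel_change and record the largest
    absolute change in the forecast that results."""
    base_forecast = model(baseline)
    shifts: Dict[str, float] = {}
    for name, value in baseline.items():
        worst = 0.0
        for sign in (-1.0, 1.0):
            tweaked = dict(baseline)
            tweaked[name] = value * (1.0 + sign * rel_change)
            worst = max(worst, abs(model(tweaked) - base_forecast))
        shifts[name] = worst
    return shifts


# Assumed baseline conditions for one field (illustrative values only).
baseline = {"rainfall_mm": 500.0, "ndvi": 0.7, "heat_days": 10.0}
shifts = perturbation_probe(toy_yield_model, baseline)
most_sensitive = max(shifts, key=shifts.get)
print(most_sensitive, shifts)
```

Ranking inputs by how far a 5% nudge moves the forecast gives a red team a rough map of which feeds are worth attacking first, and gives defenders a list of which feeds deserve the tightest validation.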

3.2 Stress Testing Climate Forecasting Models

Long-term planning tools rely heavily on climate prediction AI models. These systems estimate rainfall patterns, drought probability, or seasonal temperature shifts.

Red teams push these models into uncomfortable territory—unusual weather sequences, incomplete datasets, or conflicting environmental signals.

Sometimes the model becomes unstable. Sometimes it confidently produces forecasts that don’t make sense.

Neither outcome is great for agricultural planning.
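A stress test of this kind can be sketched as a small harness that feeds awkward scenarios to a model and records crashes or impossible outputs. The `toy_drought_probability` function below is a hypothetical logistic rule invented for illustration, and the scenario names and values are assumptions rather than real climate data.

```python
import math
from typing import Callable, Dict, List


def toy_drought_probability(monthly_rain_mm: List[float]) -> float:
    """Hypothetical model: logistic curve on mean monthly rainfall,
    centred on an assumed 60 mm threshold."""
    mean = sum(monthly_rain_mm) / len(monthly_rain_mm)
    return 1.0 / (1.0 + math.exp((mean - 60.0) / 10.0))


def stress_test(
    model: Callable[[List[float]], float],
    scenarios: Dict[str, List[float]],
) -> Dict[str, str]:
    """Run every scenario and report crashes or outputs that are not a
    valid probability. Scenarios that behave sanely are left out."""
    findings: Dict[str, str] = {}
    for name, series in scenarios.items():
        try:
            p = model(series)
        except Exception as exc:  # a red-team harness wants to see every failure
            findings[name] = f"crashed: {type(exc).__name__}"
            continue
        if math.isnan(p) or not 0.0 <= p <= 1.0:
            findings[name] = f"invalid output: {p}"
    return findings


scenarios = {
    "typical_season": [55.0, 70.0, 62.0, 48.0],
    "sensor_gap": [55.0, float("nan"), 62.0, 48.0],   # missing month
    "no_data": [],                                     # feed outage
    "extreme_deluge": [1e6, 1e6, 1e6, 1e6],            # absurd but possible input
}
findings = stress_test(toy_drought_probability, scenarios)
for name, problem in findings.items():
    print(name, "->", problem)
```

Here the typical season passes while the other three scenarios surface a NaN output and two unhandled exceptions, which is the kind of finding a red team would hand back to the model owners.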

3.3 Probing Autonomous Farm Equipment

Autonomous equipment adds another layer of complexity.

Self-driving tractors and robotic harvesters rely on navigation models and sensor data. When testers simulate GPS spoofing or sensor confusion, the machines can behave unpredictably.

That’s where autonomous farming AI risks begin to surface.

Even small navigation errors can disrupt planting patterns or harvesting routes.
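One simple check a tester might run against a simulated spoofing attack is a speed plausibility test on consecutive position fixes. The sketch below assumes positions are already expressed in metres in a local field frame and that 12 m/s is a generous upper bound for the vehicle; both are illustrative assumptions, not values from any particular equipment vendor.

```python
import math
from typing import List, Tuple

# (time_s, east_m, north_m) in an assumed local field coordinate frame
Fix = Tuple[float, float, float]


def implausible_jumps(fixes: List[Fix], max_speed_mps: float = 12.0) -> List[int]:
    """Return indices of fixes that imply an impossible speed relative to
    the previous fix, or whose timestamp does not move forward."""
    flagged: List[int] = []
    for i in range(1, len(fixes)):
        t0, x0, y0 = fixes[i - 1]
        t1, x1, y1 = fixes[i]
        dt = t1 - t0
        if dt <= 0:
            flagged.append(i)  # replayed or out-of-order fix
            continue
        speed = math.hypot(x1 - x0, y1 - y0) / dt
        if speed > max_speed_mps:
            flagged.append(i)
    return flagged


# A tractor moving at 3 m/s, then a simulated spoofed jump of about 74 m.
track: List[Fix] = [
    (0.0, 0.0, 0.0),
    (1.0, 3.0, 0.0),
    (2.0, 6.0, 0.0),
    (3.0, 80.0, 5.0),
    (4.0, 83.0, 5.0),
]
print(implausible_jumps(track))
```

This only catches abrupt jumps. A slow, gradual drift would pass the check, so a fuller test would also compare GPS against wheel odometry or inertial data where those are available.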

3.4 Breaking Agricultural Decision Systems

Some agricultural platforms recommend irrigation schedules, fertilizer levels, or pest control strategies.

Red teams test what happens when the inputs change unexpectedly. For example, crop disease data might be incomplete, or sensor readings might conflict.

If the system reacts poorly, farmers could receive recommendations that waste resources—or damage crops.
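A test for this behavior can be as simple as checking that the recommender abstains when its inputs disagree. The `recommend_irrigation` function, its 20% moisture cutoff, and the 5-point disagreement tolerance are all hypothetical, chosen only to illustrate the pattern.

```python
from typing import Dict, Optional, Tuple


def recommend_irrigation(
    probe_a: Optional[float],
    probe_b: Optional[float],
    max_disagreement: float = 5.0,
) -> str:
    """Hypothetical recommender using two redundant soil-moisture probes
    (percent volumetric water content). It defers to a human when a probe
    is missing or the two probes disagree too much."""
    if probe_a is None or probe_b is None:
        return "manual_review"
    if abs(probe_a - probe_b) > max_disagreement:
        return "manual_review"
    moisture = (probe_a + probe_b) / 2.0
    return "irrigate" if moisture < 20.0 else "hold"


# Red-team style cases: two normal inputs and two deliberately awkward ones.
cases: Dict[str, Tuple[Optional[float], Optional[float]]] = {
    "agreeing_dry": (15.0, 17.0),
    "agreeing_wet": (30.0, 32.0),
    "conflicting": (12.0, 35.0),
    "missing": (None, 18.0),
}
results = {name: recommend_irrigation(a, b) for name, (a, b) in cases.items()}
print(results)
```

The design choice being tested is that conflicting or missing inputs lead to an explicit "manual_review" rather than a confident recommendation built on an average of readings that cannot both be right.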

3.5 Attacking Agricultural Data Pipelines

Most farming AI systems rely on long data pipelines connecting sensors, satellites, and cloud models.

These pipelines are surprisingly fragile.

Spoofed IoT sensors, corrupted environmental feeds, or altered datasets can quietly influence model outputs. Without monitoring that validates incoming data, the AI system may never realize something went wrong.

This is where AgTech AI security becomes a serious concern.
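One common defense a red team might probe is message authentication between the sensor and the model. The sketch below uses Python's standard `hmac` module to sign a reading and detect in-transit tampering. The field names, the sensor ID, and the hard-coded demo key are assumptions for illustration; a real deployment would need proper key provisioning, and this approach does not help if the sensor itself is compromised.

```python
import hashlib
import hmac
import json
from typing import Any, Dict


def sign_reading(reading: Dict[str, Any], key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over a canonical JSON encoding."""
    payload = json.dumps(reading, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_reading(reading: Dict[str, Any], signature: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_reading(reading, key), signature)


key = b"demo-key-not-for-production"  # placeholder secret for the example
reading = {"sensor_id": "soil-17", "moisture_pct": 23.4, "ts": 1700000000}
tag = sign_reading(reading, key)

# Simulate an attacker altering the value somewhere in the pipeline.
tampered = dict(reading, moisture_pct=8.0)

print(verify_reading(reading, tag, key))   # original reading
print(verify_reading(tampered, tag, key))  # altered reading, same tag
```

A red team exercise would then try the cases this check does not cover: replaying old signed readings, or feeding false values through a sensor that holds a valid key.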

4. How Agricultural AI Systems Actually Break

When agricultural AI fails, it usually doesn’t look dramatic. No flashing error messages. No obvious alarms.

The system simply keeps running… while the predictions quietly drift in the wrong direction.

Data Poisoning in Agricultural Datasets

Crop forecasting models learn from historical agricultural data. Weather records, soil measurements, satellite imagery—years of information feed into the training process.

Now imagine a small portion of that data being altered.

Maybe rainfall levels are slightly exaggerated. Maybe yield records are modified before reaching the dataset.

The model absorbs those patterns during training. Later, when it predicts harvest outcomes, the forecasts start shifting. That’s one of the more subtle crop prediction AI vulnerabilities researchers worry about.
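The effect can be shown with a deliberately tiny example: a straight-line fit of yield against rainfall, trained once on clean records and once on records where two yield values were inflated. All numbers are synthetic and the linear model is an assumption made for readability; real forecasters are far more complex, but the mechanism is the same.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict(model: Tuple[float, float], x: float) -> float:
    slope, intercept = model
    return slope * x + intercept


# Synthetic history: seasonal rainfall (mm) and yield (t/ha).
rain = [300.0, 400.0, 500.0, 600.0, 700.0, 800.0]
clean_yield = [3.5, 4.0, 4.5, 5.0, 5.5, 6.0]

# Poisoning: yields for the two wettest seasons inflated by 1 t/ha each.
poisoned_yield = clean_yield[:4] + [y + 1.0 for y in clean_yield[4:]]

clean_model = fit_line(rain, clean_yield)
poisoned_model = fit_line(rain, poisoned_yield)

# Forecast for a wetter-than-observed season.
clean_pred = predict(clean_model, 900.0)
poisoned_pred = predict(poisoned_model, 900.0)
print(round(clean_pred, 2), round(poisoned_pred, 2))
```

Only two of six records were changed, yet the forecast for a 900 mm season rises by more than one tonne per hectare, because the altered points sit at the edge of the data where they have the most leverage on the fit.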

Sensor Spoofing in Smart Farming Systems

Agricultural AI depends heavily on sensors scattered across fields.

They measure moisture, temperature, and nutrient levels. The models trust those signals.

But sensors can lie.

If incorrect readings enter the pipeline, the AI system may assume soil conditions have changed. Suddenly irrigation systems activate—or fertilizer recommendations adjust—based on data that was never real.
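A basic cross-check is to compare each sensor against its neighbors and flag readings that sit far from the group. The sketch below uses a median-based (modified z-score) rule; the sensor names, readings, and the 3.5 cutoff are illustrative assumptions, and the approach presumes the sensors cover soil that should behave similarly.

```python
from statistics import median
from typing import Dict, List


def flag_outlier_sensors(readings: Dict[str, float], cutoff: float = 3.5) -> List[str]:
    """Flag sensors whose reading is far from the field median, scaled by
    the median absolute deviation (MAD) so one bad sensor cannot hide
    itself by dragging the average."""
    values = list(readings.values())
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # All typical sensors agree exactly; anything different is suspect.
        return [name for name, v in readings.items() if v != med]
    return [
        name
        for name, v in readings.items()
        if 0.6745 * abs(v - med) / mad > cutoff
    ]


# Soil moisture (%) from five probes in one irrigation zone; s5 is spoofed.
zone = {"s1": 22.1, "s2": 23.0, "s3": 21.8, "s4": 22.6, "s5": 5.0}
suspects = flag_outlier_sensors(zone)
print(suspects)
```

Holding an irrigation command until flagged sensors are checked is cheaper than acting on a reading that was never real. The check fails, though, if an attacker controls most of the sensors in a zone, since the median then moves with them.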

Vision Model Failures in Autonomous Equipment

Machines operating in fields depend on visual recognition. Drones scanning crops. Harvest robots identifying produce. Navigation systems detecting obstacles.

Small visual disturbances—dust, lighting shifts, altered imagery—can confuse those models.

When that happens, weaknesses in AgTech AI security become visible very quickly.
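A robustness sweep for this kind of failure can be sketched with a toy detector. The rule below classifies a single pixel as vegetation using a simple green-dominance index, and the test blends in a dust-colored haze until the detection flips. The detector, the threshold, and the haze color are hypothetical simplifications; production systems use trained vision models, but the sweep pattern carries over.

```python
from typing import Optional, Tuple

RGB = Tuple[float, float, float]


def looks_like_plant(rgb: RGB, threshold: float = 0.1) -> bool:
    """Toy vegetation rule: normalized (2G - R - B) above a threshold."""
    r, g, b = rgb
    total = r + g + b
    if total == 0:
        return False
    return (2 * g - r - b) / total > threshold


def add_haze(rgb: RGB, haze: RGB = (180.0, 160.0, 130.0), alpha: float = 0.5) -> RGB:
    """Blend a pixel with an assumed dust color; alpha=0 is clear air."""
    r, g, b = rgb
    hr, hg, hb = haze
    return (
        (1 - alpha) * r + alpha * hr,
        (1 - alpha) * g + alpha * hg,
        (1 - alpha) * b + alpha * hb,
    )


leaf: RGB = (60.0, 140.0, 50.0)

# Sweep haze strength in steps of 0.05 and find where detection first fails.
first_failure: Optional[float] = next(
    (i / 20 for i in range(21) if not looks_like_plant(add_haze(leaf, alpha=i / 20))),
    None,
)
print(first_failure)
```

The useful output is the failure threshold itself: knowing how much degradation a model tolerates tells operators when to slow a machine down or hand control back to a person.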

5. Conclusion

Agriculture is becoming deeply dependent on AI systems. Crop forecasting tools influence planting decisions. Climate models shape irrigation planning. Autonomous machines are beginning to handle field work that once required large crews.

All of this creates efficiency—but also new points of failure.

If prediction models learn from corrupted data or automated equipment reacts to misleading signals, the consequences may ripple through entire farming operations. These risks are why AI red teaming in agriculture is gaining attention.

Treating agricultural AI as critical infrastructure changes how it should be tested. Not just for software bugs, but for adversarial behavior, unexpected inputs, and fragile data pipelines.

Because the future of farming will depend on whether these systems can be trusted.

“Small data errors in AI can lead to big farming mistakes.”
