AI Red Teaming in Defense & Military: Testing Autonomous Systems, Intelligence Analysis, and Decision Support AI

1. Introduction

A satellite passes over a conflict zone and captures thousands of images in a single hour. No analyst can review that volume manually. So AI models do the first pass—flagging vehicles, tracking movement patterns, highlighting anything unusual. Similar systems help monitor drone feeds and radar signals. The scale is enormous.

Now imagine that system misreading what it sees.

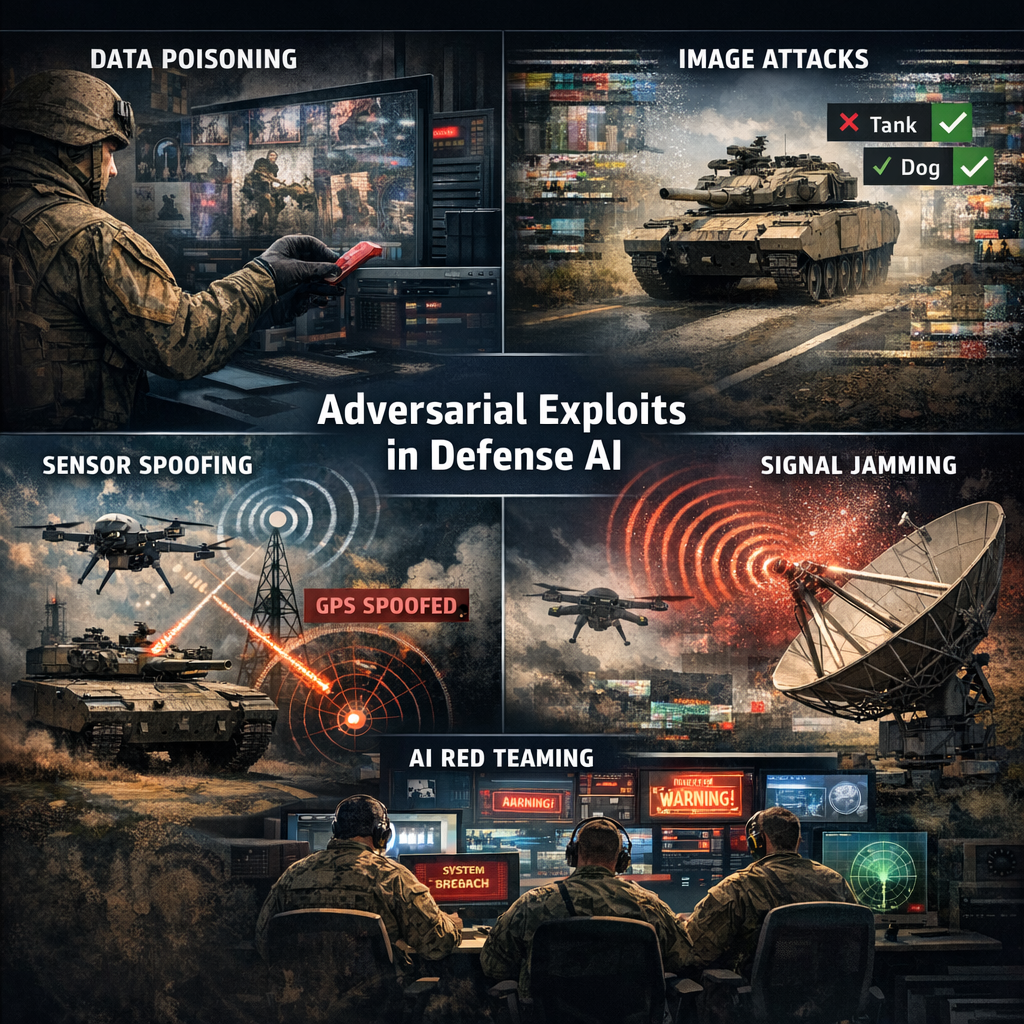

Researchers working on adversarial machine learning have already shown how small changes in images—sometimes just altered pixels—can fool computer vision models. A vehicle might be classified as something harmless. A target might not be detected at all. These demonstrations weren’t built for warfare, but they reveal a weakness that matters when AI is used for surveillance or automated monitoring.

Military AI systems also rely heavily on training data. If that data contains subtle manipulation—or even just incomplete context—the model’s judgment can shift. It may highlight the wrong signal or ignore the right one. Those kinds of failures don’t always look dramatic in testing environments. In operational systems, though, they can shape decisions.

That’s why AI red teaming in defense is gaining attention. Instead of assuming models behave correctly, specialists try to stress them, manipulate inputs, and force unexpected behavior. The goal is simple: discover weaknesses before someone else does.

For organizations deploying AI across defense infrastructure, structured military AI security testing is becoming less of an experiment and more of a requirement.

2. How AI Powers Defense Operations

Military organizations didn’t add AI just because it’s trendy. They added it because the amount of information coming into defense systems has exploded. Satellite imagery, drone footage, radar signals, intercepted communications—millions of data points every day. Humans still make the decisions, but AI helps narrow down what actually needs attention.

Autonomous Systems

Autonomous platforms are one of the most visible examples. Drones, unmanned ground vehicles, and maritime robots now rely on machine learning models to interpret sensor data while navigating complex environments. Cameras, lidar, radar—all feeding into algorithms that try to understand what the system is seeing.

The catch? If those signals are manipulated, the system’s understanding of the environment changes too. That’s why securing autonomous military systems has become a serious engineering challenge.

Intelligence Analysis

AI is also used to process intelligence data that would otherwise overwhelm analysts. Models scan satellite imagery, track movement patterns, and flag unusual activity. Instead of staring at thousands of images, analysts get a shortlist of things worth examining.

Some systems now support military threat detection by automatically highlighting suspicious behavior in surveillance feeds.

Decision Support Systems

Then there are planning tools. These systems don’t control weapons or vehicles directly—they assist commanders by modeling scenarios and predicting potential outcomes. Because those outputs can influence operational decisions, they are increasingly evaluated as part of broader defense AI risk assessment programs.

3. AI Red Teaming Use Cases in Defense

When people hear “testing,” they imagine controlled environments. Defense AI red teams do the opposite. They try to confuse systems, overload them, or feed them information that looks legitimate but subtly changes the model’s interpretation.

Sometimes the system breaks instantly. Other times the failure is quiet—nothing crashes, but the model begins making slightly worse decisions.

3.1 Autonomous Platform Confusion

Autonomous drones and ground vehicles interpret the world through sensors. Cameras, radar, navigation signals. The model merges all of that and decides what the environment looks like.

Red teams often start with the navigation layer. GPS spoofing is a classic example. Shift the coordinates slightly and the vehicle believes it’s somewhere else.
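A red team can measure how large a shift has to be before the platform notices. The sketch below is one illustrative way to do that, not a description of any fielded system: it compares each GPS fix against a dead-reckoned position computed from speed and heading. The function names, the flat east/north coordinate frame, and the 5 m tolerance are all assumptions made for the example.

```python
import math

def dead_reckon(position, speed_mps, heading_deg, dt_s):
    """Predict the next (east, north) position in metres from speed and heading."""
    heading = math.radians(heading_deg)
    east = position[0] + speed_mps * dt_s * math.sin(heading)
    north = position[1] + speed_mps * dt_s * math.cos(heading)
    return (east, north)

def flag_suspect_fixes(start, gps_fixes, speed_mps, heading_deg, dt_s, tolerance_m):
    """Return indices of GPS fixes that disagree with dead reckoning."""
    flagged = []
    predicted = start
    for index, fix in enumerate(gps_fixes):
        predicted = dead_reckon(predicted, speed_mps, heading_deg, dt_s)
        if math.dist(predicted, fix) > tolerance_m:
            flagged.append(index)
    return flagged

# Hypothetical vehicle heading due north at 10 m/s, one fix per second.
abrupt_spoof = [(0.0, 10.0), (0.5, 20.0), (25.0, 30.0), (0.0, 40.0)]
abrupt_flags = flag_suspect_fixes((0.0, 0.0), abrupt_spoof, 10.0, 0.0, 1.0, tolerance_m=5.0)

# A slow drift of 1 m per step stays under the tolerance for the first five fixes.
slow_drift = [(float(i + 1), 10.0 * (i + 1)) for i in range(8)]
drift_flags = flag_suspect_fixes((0.0, 0.0), slow_drift, 10.0, 0.0, 1.0, tolerance_m=5.0)

print(abrupt_flags, drift_flags)
```

The abrupt 25 m jump is caught immediately, while the gradual drift goes unflagged until it exceeds the tolerance. That gap between "shifted" and "detected" is the kind of finding such a test is meant to surface.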

Visual manipulation is another route. Researchers have shown that small visual patterns can change how models classify objects. A vehicle may still be visible to humans but interpreted differently by the model.

Those experiments highlight a core concern in securing autonomous military systems: autonomy depends entirely on what the sensors report.

3.2 Intelligence Data Manipulation

A surprising number of AI failures originate before the model even runs. The issue sits in the data.

Intelligence systems train on huge datasets—satellite imagery, radar histories, communication signals. Red teams sometimes introduce altered samples into those datasets and watch what happens.

The effects are subtle. A pattern the model previously treated as suspicious might slowly become normalized. Or the model becomes overly confident about weak signals.
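The normalization effect can be shown with a deliberately tiny model. The sketch below assumes a one-dimensional "activity score" and a nearest-centroid classifier, both invented for illustration; real intelligence models are far more complex, but the mechanism is the same in spirit. A few suspicious-looking samples are mislabelled as benign, and the decision threshold moves.

```python
def fit_threshold(samples):
    """Fit a 1-D nearest-centroid classifier and return its decision threshold."""
    benign = [x for x, label in samples if label == 0]
    suspicious = [x for x, label in samples if label == 1]
    benign_mean = sum(benign) / len(benign)
    suspicious_mean = sum(suspicious) / len(suspicious)
    return (benign_mean + suspicious_mean) / 2

def classify(x, threshold):
    """Return 1 (suspicious) at or above the threshold, else 0 (benign)."""
    return 1 if x >= threshold else 0

# Hypothetical clean training set: (activity score, label).
clean = [(1.0, 0), (2.0, 0), (3.0, 0), (7.0, 1), (8.0, 1), (9.0, 1)]

# Poisoned samples: high-activity readings deliberately labelled benign.
poison = [(7.0, 0), (8.0, 0)]

clean_threshold = fit_threshold(clean)
poisoned_threshold = fit_threshold(clean + poison)

probe = 5.5  # a borderline reading
print(clean_threshold, classify(probe, clean_threshold))
print(poisoned_threshold, classify(probe, poisoned_threshold))
```

With the clean data the borderline reading is flagged as suspicious. After two poisoned samples are added, the same reading is classified as benign, even though nothing in the deployed code changed.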

These experiments usually become part of broader defense AI risk assessment programs because the vulnerability lives in the training pipeline rather than the deployed system.

3.3 Detection Models Under Pressure

Threat detection models operate in a demanding environment. They’re expected to notice anomalies across massive streams of information, and operators tend to assume they will.

Red teams try to break that assumption.

Sometimes they generate inputs designed to sit right on the decision boundary—barely suspicious but not quite enough to trigger alerts. Other tests involve flooding the model with irrelevant signals so the real activity disappears in the noise.
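Boundary probing can be sketched as a simple search. The example below assumes the tester can only observe whether an alert fires (a black-box setting) and controls a single scalar "activity level"; the detector and its hidden cutoff are hypothetical stand-ins, not a real system.

```python
def detector_alerts(activity_level):
    """Hypothetical black-box detector: alerts above a hidden cutoff."""
    hidden_cutoff = 0.62
    return activity_level > hidden_cutoff

def probe_boundary(alerts, low=0.0, high=1.0, steps=20):
    """Binary-search the largest input that still avoids an alert.

    Assumes alerts(low) is False and alerts(high) is True at the start.
    """
    for _ in range(steps):
        mid = (low + high) / 2
        if alerts(mid):
            high = mid  # alert fired: the boundary is below mid
        else:
            low = mid   # no alert: the boundary is at or above mid
    return low

evasive_level = probe_boundary(detector_alerts)
print(evasive_level, detector_alerts(evasive_level))
```

Twenty queries are enough to land within about one millionth of the cutoff without ever needing to see the model internals. A defensive takeaway is that query patterns like this are themselves a signal worth monitoring.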

Failures here matter. Systems that support threat detection in military operations must remain reliable even when the signal environment becomes chaotic.

3.4 Decision Models That Sound Confident—But Aren’t

Not every defense AI system controls hardware. Some exist purely to help commanders think through scenarios.

But those systems have a weakness: they can produce confident outputs even when the inputs are incomplete or misleading.

Red teams sometimes alter a small piece of scenario data—troop movement estimates, logistics assumptions, environmental constraints. The model still produces a recommendation, but the reasoning behind it has shifted.
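One way to make this concrete is a sensitivity check: nudge each input by a small percentage and record which nudges flip the recommendation. The planner below is a toy invented for illustration (two routes, cost measured in hours), and the field names and 10% perturbation size are assumptions.

```python
def recommend(scenario):
    """Hypothetical toy planner: pick the route with the lowest estimated time."""
    costs = {
        "route_a": scenario["distance_a_km"] / scenario["speed_kmh"] + scenario["delay_a_h"],
        "route_b": scenario["distance_b_km"] / scenario["speed_kmh"] + scenario["delay_b_h"],
    }
    return min(costs, key=costs.get)

def fragile_inputs(scenario, rel_change=0.10):
    """Return the baseline choice and the inputs whose +/- rel_change flips it."""
    baseline = recommend(scenario)
    flips = []
    for key, value in scenario.items():
        for factor in (1 - rel_change, 1 + rel_change):
            perturbed = dict(scenario)
            perturbed[key] = value * factor
            if recommend(perturbed) != baseline:
                flips.append(key)
                break
    return baseline, flips

scenario = {
    "distance_a_km": 100.0,
    "distance_b_km": 80.0,
    "speed_kmh": 40.0,
    "delay_a_h": 0.5,
    "delay_b_h": 1.1,
}

baseline, flips = fragile_inputs(scenario)
print(baseline, flips)
```

In this toy scenario the planner prefers route A by a margin of 0.1 hours, and a 10% error in either distance estimate or in route B's delay is enough to flip the choice. The output itself gives no hint of how thin that margin is, which is the weakness described above.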

This is why AI decision support tools in defense are increasingly treated as security-sensitive systems.

3.5 Surveillance Systems and Visual Evasion

Vision models are everywhere in defense: drones, perimeter monitoring, satellite analysis.

And yet they’re surprisingly fragile.

Researchers have demonstrated cases where small visual changes—sometimes patterns on clothing or equipment—cause models to misclassify objects. A human observer sees no difference. The model does.

That’s the kind of edge case red teams hunt for.

4. How Defense AI Fails Under Adversarial Pressure

So what actually breaks these systems?

Not dramatic crashes. Most of the time the model keeps running, giving outputs that appear normal. The trouble starts when someone intentionally feeds the system inputs it was never meant to encounter.

Take adversarial inputs. Researchers have shown that small visual modifications—sometimes just slight pixel adjustments—can change how a model interprets an image. A vehicle may still be obvious to a human observer, yet the model categorizes it differently. Surveillance and monitoring systems are especially vulnerable to this type of manipulation.
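The mechanism is easiest to see on a linear classifier. The sketch below follows the fast gradient sign method (FGSM), a well-known technique from the adversarial machine learning literature that the article does not name. The weights, the 8-pixel "image", and the epsilon value are made up for illustration. For a linear score, the gradient with respect to the input is simply the weight vector, so each pixel moves by at most epsilon against the sign of its weight.

```python
def score(weights, bias, pixels):
    """Linear classifier score; positive means 'vehicle' in this toy setup."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def fgsm_linear(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon in the direction that lowers the score.

    Pixels are clipped to the valid [0, 1] intensity range.
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w)))
            for w, p in zip(weights, pixels)]

# Hypothetical model parameters and input.
weights = [0.9, -0.4, 0.7, -0.8, 0.5, 0.6, -0.3, 0.2]
bias = -1.0
clean_pixels = [0.6, 0.3, 0.7, 0.2, 0.5, 0.6, 0.4, 0.5]

adversarial_pixels = fgsm_linear(weights, clean_pixels, epsilon=0.1)

clean_score = score(weights, bias, clean_pixels)
adversarial_score = score(weights, bias, adversarial_pixels)
print(clean_score > 0, adversarial_score > 0)
```

No pixel changes by more than 0.1 on a 0 to 1 scale, yet the score crosses zero and the classification flips. Deep vision models are not linear, but the same gradient-following idea underlies many of the published attacks on them.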

Another issue shows up earlier in the lifecycle: the data itself. Training datasets often pull information from many sources. If even a small portion of that data is altered, the model may begin learning patterns that are subtly incorrect. The system continues working, but its judgment slowly drifts.

Sensor-driven platforms face an additional challenge. Autonomous systems rely on signals from GPS, cameras, radar, and other environmental sensors. When those signals are manipulated—through spoofing or interference—the AI interprets a distorted version of reality.

That is precisely why AI red teaming in defense exists. These exercises deliberately push models into uncomfortable situations to reveal how they fail.

Without structured military AI security testing, many of these weaknesses remain invisible until systems are already deployed.

5. Why Traditional Testing Misses These Vulnerabilities

Security testing already exists across most defense systems. Networks get audited. Software is scanned for vulnerabilities. Access controls are checked and tightened.

But AI systems behave differently, and that difference creates a blind spot.

Traditional security testing usually asks a straightforward question: Can someone break into the system? With AI, the question changes: Can someone mislead the system without breaking in? The system might remain completely secure from a technical perspective and still make the wrong decision.

That happens because the behavior of a model is shaped by data. Change the data, change the outcome. A surveillance model trained on slightly biased imagery may start interpreting patterns incorrectly. A detection system exposed to manipulated signals might quietly miss something important.

None of this necessarily triggers alarms during standard security audits. The infrastructure still works. The code still runs.

What changes is the model’s interpretation of reality.

That’s where AI red teaming in defense becomes valuable. Instead of checking whether systems are protected from intrusion, red teams test whether the AI itself can be misled.

Insights from these exercises often feed into broader defense AI risk assessment programs used to evaluate operational AI systems.

6. Implications for Defense Organizations

Security teams in defense are used to thinking in terms of infrastructure. Firewalls. Access control. Network monitoring. Those things still matter.

But AI systems behave differently.

A model can run inside a fully secured environment and still reach the wrong conclusion. Nothing has been hacked. The system is technically healthy. The problem sits in how the model interprets the data it receives.

That distinction changes how testing needs to happen.

Instead of checking only whether systems are protected, engineers increasingly examine how models behave when inputs become unreliable. Strange edge cases. Slightly distorted signals. Data that looks normal at first glance but carries hidden bias.
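A basic version of this kind of evaluation is a distortion sweep: apply progressively larger distortions to a labelled test set and record accuracy at each level. The sketch below uses a fixed additive offset as the distortion and a simple threshold model; both are placeholders chosen to keep the example deterministic, and the names are hypothetical.

```python
def accuracy_under_distortion(model, samples, distort):
    """Fraction of samples classified correctly after distorting each input."""
    correct = sum(model(distort(x)) == label for x, label in samples)
    return correct / len(samples)

def sweep_offsets(model, samples, offsets):
    """Map each sensor offset to the model's accuracy under that offset."""
    return {
        offset: accuracy_under_distortion(model, samples, lambda x, o=offset: x + o)
        for offset in offsets
    }

def threshold_model(x):
    """Hypothetical detector: readings of 5.0 or more are labelled suspicious (1)."""
    return 1 if x >= 5.0 else 0

# Hypothetical labelled test set: (sensor reading, true label).
test_set = [(1.0, 0), (2.0, 0), (3.0, 0), (4.0, 0),
            (6.0, 1), (7.0, 1), (8.0, 1), (9.0, 1)]

report = sweep_offsets(threshold_model, test_set, [0.0, 1.5, 3.0])
print(report)
```

Accuracy falls from 100% to 87.5% and then 62.5% as the offset grows, and every error is a benign reading pushed over the threshold. Tracking a curve like this across retraining cycles gives a team a simple regression signal for robustness.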

Some organizations now run military AI security testing during development rather than waiting until deployment. Models are retrained, evaluated again, sometimes broken on purpose just to observe how they react.

Those exercises fall under AI red teaming in defense. The idea is simple: if the model can be misled during controlled testing, the same weakness could appear during real operations.

The insights usually end up feeding broader defense AI risk assessment efforts that track whether deployed AI systems remain reliable over time.

7. Conclusion

Defense organizations are moving quickly toward AI-driven capabilities. Systems now assist with surveillance analysis, autonomous navigation, and operational planning. The speed and scale are impressive—but they introduce a different type of risk.

AI models don’t fail like traditional software. They fail quietly. A slightly manipulated signal, an unexpected scenario, or biased training data can shift how a model interprets reality.

That’s why testing methods are evolving. Instead of relying only on conventional security reviews, defense teams are beginning to examine how models behave under pressure. Programs built around AI red teaming in defense and continuous military AI security testing are becoming part of responsible deployment.

As AI becomes embedded in mission-critical systems, understanding how these models fail—and fixing those weaknesses early—will be just as important as building the systems themselves.

“Military AI doesn’t fail loudly—it fails quietly, and that’s exactly why it must be tested adversarially.”
