AI Red Teaming in Ecommerce: Exposing Hidden AI Risks in Pricing, Fraud, and Automation


Key Takeaways

⮳ AI red teaming reveals how ecommerce AI fails under adversarial use.

⮳ Traditional testing misses silent AI guardrail bypass scenarios.

⮳ Pricing, fraud, refunds, and chatbots are high-risk AI systems.

⮳ AI security testing for ecommerce must be continuous, not one-time.

⮳ Red team evaluation methods expose failures before attackers do.

1. Introduction

Prices change by the minute now. Not because a manager updates a spreadsheet, but because machine learning models react to clicks, inventory shifts, competitor feeds, and demand spikes in real time. The same goes for fraud approvals and product recommendations. In a large ecommerce platform, these systems quietly decide which transactions go through, which customers see discounts, and which products surface first. Billions in revenue flow through algorithms most customers never notice.

And then attackers noticed.

Over the past two years, retailers have reported coordinated promotion abuse and pricing exploitation schemes where adversaries studied discount logic, tested edge cases, and triggered unintended price drops at scale. Fraud groups have done something similar — sending carefully crafted low-risk transactions to learn approval thresholds before launching higher-value fraud attempts. No malware. No loud breach. Just systematic probing of decision models.

That shift changes the security equation. The storefront isn’t the only target anymore — the model is.

This is why AI red teaming in ecommerce matters. It treats pricing engines and fraud systems as adversarial environments, not just optimization pipelines. Without deliberate AI security testing for ecommerce, companies assume users behave normally. Attackers don’t.

Add regulatory pressure from emerging oversight under the EU AI Act, and the stakes move beyond revenue into accountability. AI systems are now financial infrastructure. And infrastructure attracts pressure.

2. How AI Powers Ecommerce

Here’s the uncomfortable truth: most ecommerce teams can explain their checkout funnel in detail. Far fewer can explain how their pricing model actually behaves when inputs get weird.

Three AI systems quietly shape revenue and risk.

Dynamic Pricing Engines

These models react to demand spikes, competitor changes, cart behavior, inventory pressure, and seasonal signals. During a flash sale, prices may adjust dozens of times without human review. That’s efficient — until someone deliberately feeds the model distorted signals. Coordinated cart activity, artificial demand bursts, or timing-based promotion triggers can expose AI pricing algorithm risks hiding inside discount logic and rule interactions.

Fraud Detection Models

Fraud AI assigns risk scores in milliseconds using device fingerprints, behavioral velocity, payment history, and anomaly detection layers. But fraud groups don’t guess. They experiment. They submit low-value transactions to see what passes. They slightly alter attributes to watch approval rates shift. That’s how subtle AI fraud detection vulnerabilities get mapped before high-value attacks are launched.

Automation & Personalization Systems

Search ranking models influence visibility. Recommendation engines amplify certain products. Chatbots authorize refunds. These systems learn from user interaction — which means manipulated interaction can alter outcomes. Feedback loops form quickly. Small distortions compound.

None of this shows up in routine QA dashboards. That’s why AI security testing for ecommerce must simulate adversarial behavior, not just validate performance metrics.

3. AI Red Teaming Use Cases in Ecommerce

Red teaming only becomes useful when it mirrors how abuse actually unfolds in the wild. Not chaotic. Not noisy. Deliberate.

In ecommerce, exploitation usually starts with observation. Then small tests. Then scaling.

3.1 Turning Demand Into a Lever

Dynamic pricing models watch behavior constantly — cart activity, sell-through rate, stock velocity. Red teams experiment with that visibility.

They create small groups of accounts that interact with the same SKU in short windows. They pause. They repeat. They adjust timing around promotional cutoffs. They compare prices across regions by shifting delivery addresses. They test how loyalty credits combine with coupons.

Often, the price moves exactly as the model is designed to move. That’s the point. When coordinated behavior reliably triggers adjustments, AI pricing algorithm risks become measurable rather than theoretical.

The weakness isn’t randomness. It’s predictability under pressure.
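
A minimal sketch of that measurement, under loud assumptions: `ToyPricingEngine`, its tier rule, and every number in it are hypothetical stand-ins, not a real pricing model. The idea is only to show how a red team might record the smallest coordinated group that reliably moves a price.

```python
from dataclasses import dataclass


@dataclass
class ToyPricingEngine:
    """Hypothetical stand-in for a dynamic pricing model (illustration only)."""
    base_price: float = 100.0
    step: float = 0.02          # assumed +2% per demand tier
    carts_per_tier: int = 5     # assumed add-to-cart events needed per tier
    max_tiers: int = 5

    def price(self, carts_in_window: int) -> float:
        tiers = min(carts_in_window // self.carts_per_tier, self.max_tiers)
        return round(self.base_price * (1 + self.step * tiers), 2)


def probe_price_response(engine, organic_carts, max_accounts):
    """Return (accounts, price) pairs as coordinated accounts add the SKU to cart."""
    return [(n, engine.price(organic_carts + n)) for n in range(max_accounts + 1)]


def min_accounts_to_move_price(engine, organic_carts, max_accounts):
    """Smallest coordinated group that changes the price, or None if none does."""
    baseline = engine.price(organic_carts)
    for accounts, price in probe_price_response(engine, organic_carts, max_accounts):
        if price != baseline:
            return accounts
    return None


if __name__ == "__main__":
    engine = ToyPricingEngine()
    # With 3 organic carts in the window, 2 coordinated accounts cross the first tier.
    print(min_accounts_to_move_price(engine, organic_carts=3, max_accounts=10))
```

If the same small number shows up run after run, the predictability is the finding, not the price change itself.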

3.2 Finding the Edges of Fraud Models

Fraud scoring systems rely on patterns: device consistency, behavioral rhythm, historical signals. Red teams introduce tiny disturbances and watch the response.

One variable changes at a time — purchase value, session duration, shipping choice. Approvals are logged. Confidence levels are tracked. Then patterns appear.

What surfaces are not obvious gaps but narrow bands where outcomes flip with minimal variation. Those bands often reveal AI fraud detection vulnerabilities that standard accuracy testing glosses over.

Why does this work?

Because fraud models optimize for average behavior. They are less stable at the margins.
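
The one-variable-at-a-time probe can be sketched roughly as follows. Everything here is assumed for illustration: `toy_risk_score`, its weights, the `THRESHOLD` cutoff, and the field names are invented, and a real exercise would call the production scoring service in a sandbox instead.

```python
import math

THRESHOLD = 0.5  # hypothetical approve/challenge cutoff

BASELINE = {"amount": 200, "session_seconds": 60, "shipping": "standard"}


def toy_risk_score(txn):
    """Hypothetical logistic risk score; the weights are made up."""
    z = (
        0.004 * txn["amount"]
        - 0.01 * txn["session_seconds"]
        + (1.2 if txn["shipping"] == "express" else 0.0)
        - 1.5
    )
    return 1 / (1 + math.exp(-z))


def decision(txn):
    return "challenge" if toy_risk_score(txn) >= THRESHOLD else "approve"


def sweep(baseline, field, values):
    """Vary one field, hold the rest fixed, and log (value, score, decision)."""
    rows = []
    for value in values:
        txn = {**baseline, field: value}
        rows.append((value, round(toy_risk_score(txn), 3), decision(txn)))
    return rows


def flip_points(rows):
    """Adjacent probe values between which the decision changes."""
    return [(a[0], b[0]) for a, b in zip(rows, rows[1:]) if a[2] != b[2]]


if __name__ == "__main__":
    rows = sweep(BASELINE, "amount", range(100, 1001, 50))
    print(flip_points(rows))  # [(500, 550)] for this toy model
```

The output is a band, not a bug: somewhere between two nearby probe values the outcome flips, and that band is what deserves closer monitoring.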

3.3 When Automation Reinforces Distortion

Recommendation engines, ranking algorithms, and conversational bots learn from interaction. If interaction is engineered, learning shifts.

Red teams simulate concentrated engagement around selected products. They cluster reviews within compressed timeframes. They craft refund-related prompts to observe decision logic. They introduce artificial stock signals.

These systems don’t “break.” They adapt — sometimes in ways that amplify the initial distortion. Small nudges compound through feedback loops.

3.4 Testing What Audits Will Eventually Test

Beyond revenue impact, there’s another layer: defensibility.

Red teams examine whether pricing behavior creates uneven outcomes, whether fraud rejections cluster in specific segments, whether automated decisions are traceable and explainable, and whether documentation aligns with expectations emerging under the EU AI Act.

This is where disciplined AI security testing for ecommerce shifts from technical assurance to governance readiness.
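
One of those checks, whether fraud rejections cluster in specific segments, might start from something as simple as the sketch below. The segment labels and the `max_ratio` cutoff are assumptions chosen for illustration; they are not a regulatory threshold, and a real review would need a defensible definition of both.

```python
from collections import defaultdict


def rejection_rates(decisions):
    """decisions: iterable of (segment, was_rejected). Returns {segment: rate}."""
    totals = defaultdict(int)
    rejected = defaultdict(int)
    for segment, was_rejected in decisions:
        totals[segment] += 1
        rejected[segment] += int(was_rejected)
    return {segment: rejected[segment] / totals[segment] for segment in totals}


def flag_disparities(rates, max_ratio=1.5):
    """Segments whose rejection rate exceeds max_ratio times the lowest rate."""
    floor = min(rates.values())
    if floor == 0:
        # Any non-zero rate is unbounded relative to a zero floor.
        return sorted(s for s, r in rates.items() if r > 0)
    return sorted(s for s, r in rates.items() if r / floor > max_ratio)


if __name__ == "__main__":
    # Hypothetical decision log: segment "A" rejected 2 of 10, "B" rejected 5 of 10.
    log = (
        [("A", True)] * 2 + [("A", False)] * 8
        + [("B", True)] * 5 + [("B", False)] * 5
    )
    rates = rejection_rates(log)
    print(rates, flag_disparities(rates))
```

A flagged segment is a prompt for investigation, not proof of unfair treatment; the point is that the question gets asked before an auditor asks it.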

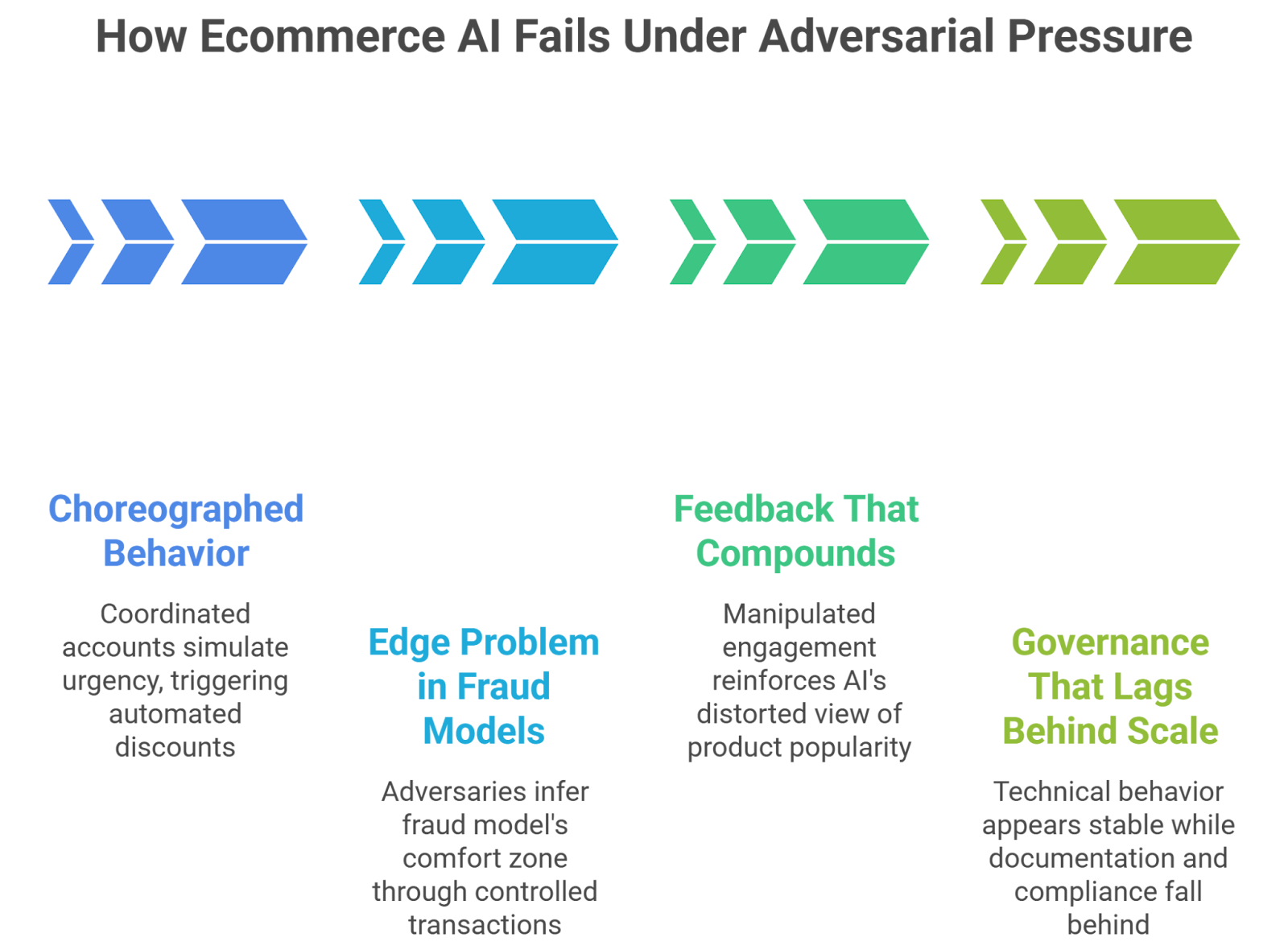

4. How Ecommerce AI Fails Under Adversarial Pressure

Here’s the thing: ecommerce AI doesn’t usually crash. It drifts. And that drift can be exploited long before anyone labels it an “incident.”

When adversaries apply pressure, failure tends to follow recognizable paths.

4.1 When Behavior Stops Being Organic

Pricing engines and ranking systems are built on behavioral signals. Add-to-cart spikes. Click-through velocity. Stock depletion rates.

Now imagine those signals are choreographed.

A handful of coordinated accounts can simulate urgency. A timed burst of purchases can trigger automated discount tiers. The system adjusts because that’s what it’s designed to do. No firewall is breached. No code is altered.

The vulnerability sits in the assumption that activity equals intent.

4.2 The Edge Problem in Fraud Models

Fraud detection models make probabilistic calls. Somewhere in that probability scale, a line exists: approve or challenge.

Adversaries look for that line.

They submit small, controlled transactions. Change one detail. Watch the response. Repeat. Over time, they don’t need to see the model — they infer its comfort zone. Many AI fraud detection vulnerabilities appear not in extreme cases but in these narrow bands where the model hesitates.

Why is this difficult to detect internally?

Because performance metrics often smooth out edge instability.
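
To see how little probing that inference can take, here is a rough sketch using plain bisection. The `is_approved` oracle and its hidden cutoff of 525 are hypothetical; real fraud decisions depend on many features and are rarely this clean, so treat the query count as a lower bound on attacker effort, not an estimate.

```python
def infer_boundary(is_approved, low, high, tolerance=1.0):
    """Bisect between an approved value `low` and a challenged value `high`.

    Returns (low, high, queries): the final bracket around the decision
    boundary and the number of probe transactions it took.
    """
    if not is_approved(low) or is_approved(high):
        raise ValueError("need low to be approved and high to be challenged")
    queries = 2
    while high - low > tolerance:
        mid = (low + high) / 2
        if is_approved(mid):
            low = mid
        else:
            high = mid
        queries += 1
    return low, high, queries


if __name__ == "__main__":
    # Hypothetical oracle: the hidden rule approves any amount below 525.
    def hidden_rule(amount):
        return amount < 525

    print(infer_boundary(hidden_rule, low=0, high=1024))  # (524.0, 525.0, 12)
```

Twelve low-stakes probes are enough to bracket the line in this toy case. A defensive counterpart would watch for sequences of transactions from related accounts that converge on a value in exactly this way.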

4.3 Feedback That Compounds

Automation systems reward interaction. That’s their job.

If manipulated engagement enters the ecosystem — coordinated clicks, concentrated reviews, scripted chatbot prompts — the system adapts. And then reinforces what it sees.

A product nudged upward in search gains exposure. Exposure drives engagement. Engagement feeds the model. Soon the distortion feels normal.
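
The loop can be illustrated with a deliberately simple simulation. It assumes a ranking driven only by cumulative engagement and a fixed number of clicks per search position; `position_clicks` and the starting scores are invented values, and real rankers use far more signals.

```python
def simulate_ranking_loop(initial_scores, rounds, position_clicks=(500, 300, 200)):
    """Each round: rank by score, award clicks by position, feed clicks back in."""
    scores = dict(initial_scores)
    for _ in range(rounds):
        # Highest score first; ties broken by product name for determinism.
        ranked = sorted(scores, key=lambda product: (-scores[product], product))
        for position, product in enumerate(ranked):
            if position < len(position_clicks):
                scores[product] += position_clicks[position]
    return scores


if __name__ == "__main__":
    organic = {"A": 100, "B": 110, "C": 90}
    nudged = {**organic, "A": organic["A"] + 20}  # 20 engineered interactions

    print(simulate_ranking_loop(organic, rounds=5))
    # {'A': 1600, 'B': 2610, 'C': 1090}
    print(simulate_ranking_loop(nudged, rounds=5))
    # {'A': 2620, 'B': 1610, 'C': 1090}
```

In this toy setup, twenty engineered interactions are enough to swap the top two products, and after five rounds the gap is about a thousand clicks wide. Nothing in the final scores reveals where the lead came from.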

4.4 Governance That Lags Behind Scale

Technical behavior may appear stable while documentation, explainability, or bias monitoring falls behind.

As scrutiny increases, especially with evolving compliance expectations under the EU AI Act, gaps in traceability become liabilities. It’s not only about whether a decision was correct. It’s about whether it can be justified.

This is where disciplined AI security testing for ecommerce shifts from technical hardening to operational accountability.

5. Implications for Ecommerce Organizations

If pricing, fraud, and automation models directly influence revenue, then they deserve the same discipline as payment systems.

That starts with ownership.

First, adversarial testing can’t be an annual checkbox. Major pricing logic updates, fraud model retraining, or automation rollouts should trigger structured AI red teaming in ecommerce exercises. The goal isn’t to “break” the system. It’s to understand how it behaves when assumptions fail.

Second, testing needs to live inside delivery workflows. When models are retrained or thresholds shift, structured AI security testing for ecommerce should run alongside performance and regression checks. Models evolve quietly. So do risks.

Third, look beyond headline metrics. A fraud model with 98% accuracy can still contain unstable edges. A pricing engine that lifts margin overall may expose narrow exploitation windows. Monitoring should focus on boundary behavior, not just averages.
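
A small worked example of the gap between a headline metric and boundary behavior. The records below are synthetic and constructed to make the point; the 0.5 threshold and 0.05 margin are assumptions, not recommended values.

```python
def accuracy(records, threshold):
    """records: list of (score, is_fraud). Predict fraud when score >= threshold."""
    correct = sum((score >= threshold) == is_fraud for score, is_fraud in records)
    return correct / len(records)


def accuracy_by_band(records, threshold, margin):
    """Split accuracy into cases near the threshold and cases far from it."""
    near = [r for r in records if abs(r[0] - threshold) <= margin]
    far = [r for r in records if abs(r[0] - threshold) > margin]
    return {
        "overall": accuracy(records, threshold),
        "near_threshold": accuracy(near, threshold) if near else None,
        "far_from_threshold": accuracy(far, threshold) if far else None,
        "near_count": len(near),
    }


# Synthetic data: 96 easy cases plus 4 transactions that sit close to the cutoff.
RECORDS = (
    [(0.05, False)] * 48
    + [(0.95, True)] * 48
    + [(0.48, True), (0.49, False), (0.51, False), (0.52, True)]
)

if __name__ == "__main__":
    print(accuracy_by_band(RECORDS, threshold=0.5, margin=0.05))
    # overall 0.98, near_threshold 0.5, far_from_threshold 1.0, near_count 4
```

Overall accuracy is 98%, yet inside the band around the cutoff the model is right only half the time. That band is small in volume and large in exposure, because it is where deliberate probing concentrates.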

Fourth, documentation matters. Decision logs, model update traceability, bias checks, and governance controls need to keep pace with deployment speed. As regulatory expectations tighten, including those taking shape under the EU AI Act, explainability becomes operational, not optional.

Finally, assign accountability clearly. AI risk should not sit between teams. Someone owns the attack surface.

Organizations that treat AI as infrastructure — not just analytics — tend to detect silent leakage before it scales.

6. Conclusion

Ecommerce AI now sits at the center of pricing decisions, fraud approvals, product visibility, and customer interaction. These systems don’t just “optimize experiences.” They control revenue flow.

Adversaries understand that. They study model behavior, test thresholds, and exploit predictability. The storefront is no longer the only target. The decision engine is.

That’s why AI red teaming in ecommerce should not be treated as a niche security exercise. It is a resilience strategy. When structured AI security testing for ecommerce becomes part of the lifecycle, pricing logic becomes harder to steer, fraud boundaries harder to map, and automation loops harder to distort.

The question isn’t whether ecommerce AI will face adversarial pressure. It already does. The real question is whether organizations are measuring how their models behave when someone pushes back.

“Ecommerce AI rarely fails by breaking; it fails by quietly doing the wrong thing at scale.”
