AI Red Teaming in Fintech: Securing High-Risk Financial AI Systems


Key Takeaways

➮ AI red teaming in fintech focuses on how AI systems fail, not just how they perform.

➮ Traditional testing rarely exposes model manipulation attacks in real fintech environments.

➮ High-impact fintech AI systems require continuous adversarial evaluation.

➮ AI security testing in fintech must assume intentional misuse, not ideal behavior.

➮ Secure fintech AI is built through ongoing red teaming, not one-time validation.

1. Introduction

Most fintech platforms don’t “experiment” with AI anymore. They depend on it.

Transaction monitoring models flag or clear payments in real time. Credit engines calculate eligibility instantly. Automated systems react to market data without waiting for manual approval. In many firms, these models influence revenue, risk exposure, and customer experience simultaneously.

In February 2026, sector reporting described a noticeable increase in AI-assisted financial scams targeting digital platforms. Fraud groups used generative tools to craft believable impersonation flows and to manipulate onboarding and payment interactions. What stood out wasn’t sophistication alone; it was subtlety. In several documented cases, user behavior did not appear obviously malicious. Device fingerprints matched prior sessions. Activity patterns stayed within acceptable thresholds. Internal fraud controls did not immediately trigger.

That detail matters.

Financial models are typically validated against historical fraud patterns and statistical performance benchmarks. They are rarely evaluated against intentionally engineered edge cases designed to sit just inside decision boundaries. An attacker does not need to break the model. They only need to understand it well enough to avoid crossing its alert line.
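To make "just inside the alert line" concrete, here is a minimal Python sketch. The limits, window size, and transaction sequence are all hypothetical, chosen only for illustration. A per-transaction rule never fires on payments engineered to sit just under its threshold, while a rolling-window rule does. The window rule is, of course, just another boundary an attacker can learn.

```python
from datetime import datetime, timedelta

# Hypothetical alert lines, for illustration only.
PER_TX_LIMIT = 1_000.0
WINDOW = timedelta(hours=24)
WINDOW_LIMIT = 2_500.0

def per_tx_alerts(txs):
    """Timestamps of single transactions at or above the per-transaction limit."""
    return [ts for ts, amount in txs if amount >= PER_TX_LIMIT]

def window_alerts(txs):
    """Timestamps where the trailing 24h sum reaches WINDOW_LIMIT.

    Assumes `txs` is a list of (timestamp, amount) pairs in chronological order.
    """
    alerts = []
    for i, (now, _) in enumerate(txs):
        # Sum everything up to and including this transaction within the window.
        trailing = sum(amount for ts, amount in txs[: i + 1] if now - ts < WINDOW)
        if trailing >= WINDOW_LIMIT:
            alerts.append(now)
    return alerts

# Synthetic engineered sequence: four payments of 950, three hours apart.
start = datetime(2026, 1, 1, 9, 0)
shaped = [(start + timedelta(hours=3 * k), 950.0) for k in range(4)]

print(per_tx_alerts(shaped))     # []
print(window_alerts(shaped)[0])  # 2026-01-01 15:00:00
```

No single payment crosses 1,000, so the per-transaction rule stays silent; the third payment pushes the 24-hour total to 2,850 and trips the window rule.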

When that happens, exposure accumulates quietly.

Weak fintech AI security often shows up as incremental fraud leakage rather than dramatic system collapse. And that is precisely why AI red teaming in fintech is becoming a risk discipline instead of a research exercise. It tests financial AI systems under adversarial pressure — not just expected behavior.

2. How AI Powers Fintech

Inside a typical fintech company, AI shows up in places customers rarely see.

Fraud models sit between the user and the payment rail. Every swipe, transfer, or withdrawal runs through layered scoring logic. These payment fraud detection systems weigh transaction velocity, merchant history, geolocation shifts, device continuity, and dozens of other signals. A decision happens fast — approve, block, or step up authentication. It’s not theoretical risk analysis. It’s real money moving.
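A layered score of this kind can be sketched as a weighted combination of signals mapped to approve, step-up, or block. The signal names, weights, and thresholds below are hypothetical; real fraud engines usually learn such weights from data rather than hard-coding them, but the shape of the decision is similar.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    amount: float         # transaction value
    tx_last_hour: int     # velocity: transactions by this user in the last hour
    new_device: bool      # device continuity broken?
    km_from_last: float   # geolocation shift since the previous transaction
    merchant_risk: float  # 0.0 (clean merchant history) to 1.0 (high risk)

# Hypothetical thresholds on a 0..1 score.
STEP_UP_AT = 0.5
BLOCK_AT = 0.8

def risk_score(tx: Transaction) -> float:
    """Weighted sum of capped signals; illustrative weights that sum to 1.0."""
    score = 0.30 * min(tx.amount / 5_000.0, 1.0)
    score += 0.20 * min(tx.tx_last_hour / 10.0, 1.0)
    score += 0.20 * (1.0 if tx.new_device else 0.0)
    score += 0.15 * min(tx.km_from_last / 1_000.0, 1.0)
    score += 0.15 * tx.merchant_risk
    return score

def decide(tx: Transaction) -> str:
    """Map the score to one of three outcomes: approve, step_up, or block."""
    score = risk_score(tx)
    if score >= BLOCK_AT:
        return "block"
    if score >= STEP_UP_AT:
        return "step_up"
    return "approve"

print(decide(Transaction(40.0, 1, False, 2.0, 0.1)))     # approve
print(decide(Transaction(5_000.0, 5, True, 0.0, 0.0)))   # step_up
```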

On the market side, AI-driven strategies monitor price movements, liquidity patterns, and external signals. Some models incorporate structured news or sentiment inputs. Under certain conditions, that opens the door to trading algorithm manipulation. Not dramatic sabotage, just nudging signals in ways that influence automated reactions.

Then there’s generative AI. Many fintech platforms now deploy assistants for support queries, onboarding guidance, compliance research, or internal documentation. These systems can connect to databases, surface account information, or trigger workflows. That’s where LLM security in fintech starts to matter. A prompt isn’t just text; it can become an instruction chain with access to sensitive context.

Across these environments, AI systems don’t simply analyze data. They authorize transactions, influence trades, and interact with customer information. That operational authority is exactly why fintech AI security requires adversarial scrutiny, not just performance tuning.

3. AI Red Teaming Use Cases in Fintech

When teams run adversarial exercises against financial models, the starting point is simple: assume someone is actively trying to stay invisible.

That changes how testing works.

Take fraud systems. A typical validation report checks precision, recall, and false-positive rates. A red team does something else. They open accounts slowly. They run low-value transactions. They let the model build trust. Then they begin adjusting variables: amount, merchant category, timing, device continuity. Small movements. Not enough to spike a score.

In several environments, this is where AI fraud detection vulnerabilities show up. Risk scores often plateau near decision thresholds. A model might react strongly to known fraud clusters but respond predictably when activity sits just outside them. That predictability becomes a map.
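The "map" a red team builds can be as simple as a binary search against the decision. The sketch below assumes black-box access to a hypothetical `is_flagged` callable and assumes the decision is monotonic in transaction amount with every other feature held fixed; `toy_model_flags` is a stand-in, not a real model.

```python
from typing import Callable

def find_max_approved_amount(
    is_flagged: Callable[[float], bool],
    low: float = 0.0,
    high: float = 10_000.0,
    tolerance: float = 1.0,
) -> float:
    """Binary-search the largest amount the model still approves.

    Assumes the decision is monotonic in amount: approved below some cut-off,
    flagged at or above it, with every other feature held fixed.
    """
    if is_flagged(low):
        raise ValueError("even the lowest amount is flagged")
    if not is_flagged(high):
        return high  # no boundary inside the search range
    while high - low > tolerance:
        mid = (low + high) / 2
        if is_flagged(mid):
            high = mid  # the cut-off is at or below mid
        else:
            low = mid   # mid is still approved
    return low

def toy_model_flags(amount: float) -> bool:
    """Hypothetical stand-in: score rises linearly with amount, flag at 0.5."""
    return 0.15 + amount / 4_000.0 >= 0.5
```

Against the toy model, `find_max_approved_amount(toy_model_flags)` lands just under 1,400, the amount at which its score reaches 0.5, after sixteen model queries. That is why consistent, predictable responses near a threshold are treated as a finding rather than a strength.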

Trading environments require a different angle. Many AI strategies consume structured inputs beyond raw price data. News sentiment layers. Liquidity indicators. Order flow features. During controlled simulations, teams introduce shaped signals and observe how automated reactions cascade. This mirrors real-world trading algorithm manipulation scenarios, where influence comes from signal distortion rather than system intrusion. The interesting findings aren’t crashes — they’re sensitivity gaps.
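One way to quantify a sensitivity gap in simulation is to ask how small a distortion of a single input is enough to change the strategy's action. The strategy, its weights, and its thresholds below are hypothetical.

```python
from typing import Optional

def toy_strategy(price_momentum: float, sentiment: float) -> int:
    """Hypothetical strategy: +1 buy, -1 sell, 0 hold."""
    signal = 0.7 * price_momentum + 0.3 * sentiment
    if signal > 0.2:
        return 1
    if signal < -0.2:
        return -1
    return 0

def min_sentiment_nudge_to_flip(
    price_momentum: float,
    sentiment: float,
    step: float = 0.01,
    max_shift: float = 1.0,
) -> Optional[float]:
    """Smallest sentiment shift (in either direction) that changes the action.

    Returns None if no shift up to max_shift changes it.
    """
    baseline = toy_strategy(price_momentum, sentiment)
    for i in range(1, int(round(max_shift / step)) + 1):
        delta = i * step
        for shifted in (sentiment + delta, sentiment - delta):
            if toy_strategy(price_momentum, shifted) != baseline:
                return delta
    return None
```

With momentum at 0.25 and neutral sentiment, the toy strategy holds; a sentiment nudge of about 0.09 flips it to a buy. Sweeping this search across many simulated market states shows where reactions hinge on inputs an outsider could plausibly influence.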

Generative systems introduce another layer. In fintech environments, assistants are rarely just chatbots. They may reference policy databases, retrieve internal documents, or interface with customer workflows. Adversarial testers explore how context can be bent: ambiguous prompts, indirect instructions, chained queries. Weaknesses in LLM security in fintech often appear as quiet boundary expansion. A model retrieving slightly more than intended. Or inferring authority it was never explicitly granted.
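A red-team harness can check each tool call an assistant proposes against what its role and session actually permit. The sketch below assumes the assistant's planned calls are available as structured objects; `ToolCall`, the tool names, and the policy table are hypothetical and not tied to any particular LLM framework.

```python
from dataclasses import dataclass, field

@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)

# Hypothetical policy table: tools each assistant role may invoke.
ALLOWED_TOOLS = {
    "customer_support": {"lookup_policy", "get_account_summary"},
}

def audit_tool_calls(role: str, session_account_id: str, calls: list[ToolCall]) -> list[str]:
    """Return findings for calls outside the role's allowlist or the session's account."""
    findings = []
    allowed = ALLOWED_TOOLS.get(role, set())
    for call in calls:
        if call.name not in allowed:
            findings.append(f"unapproved tool: {call.name}")
        account = call.args.get("account_id")
        if account is not None and account != session_account_id:
            findings.append(f"cross-account access: {call.name} -> {account}")
    return findings

# Synthetic calls an assistant might plan after an ambiguous, chained prompt.
planned = [
    ToolCall("get_account_summary", {"account_id": "A1"}),
    ToolCall("get_account_summary", {"account_id": "B7"}),
    ToolCall("issue_refund", {"account_id": "A1"}),
]
print(audit_tool_calls("customer_support", "A1", planned))
# ['cross-account access: get_account_summary -> B7', 'unapproved tool: issue_refund']
```

Neither finding looks like a dramatic jailbreak; each is a small step past the intended boundary, which is the pattern adversarial testers look for.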

There’s also monitoring. Many risk dashboards assume change happens gradually. Red teams simulate abrupt distribution shifts, compressed edge cases, or coordinated low-noise fraud bursts. In some tests, alerting logic lags while surface metrics look stable. Exposure builds without dramatic signals.
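The sketch below illustrates how a monitor tuned for gradual drift can stay quiet during a coordinated burst. It uses a simple relative mean-shift check and synthetic amounts, both hypothetical; production monitoring would use richer statistics, but similar blind spots can appear whenever attack traffic mimics the typical distribution.

```python
from statistics import mean

def mean_drift_alert(baseline: list[float], current: list[float], tolerance: float = 0.10) -> bool:
    """Alert when the mean amount moves more than `tolerance` relative to baseline."""
    base = mean(baseline)
    return abs(mean(current) - base) / base > tolerance

# Synthetic data: 1,000 typical payments, then a coordinated burst of 300
# payments at an unremarkable amount.
baseline = [20.0, 35.0, 50.0, 80.0, 120.0] * 200
burst = [50.0] * 300
current = baseline + burst

print(mean_drift_alert(baseline, current))  # False
print(sum(burst))                           # 15000.0
```

The burst adds 300 transactions and 15,000 in volume, yet the mean moves by only about 4%, well inside the 10% tolerance. A volume check per segment or per new-account cohort would be one way to surface it.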

And then there’s integration risk. Financial AI rarely operates alone. Third-party scoring APIs, identity verification vendors, external data feeds — each introduces assumptions. Adversarial testing sometimes reveals that dependencies behave differently under stress, affecting downstream models in ways internal validation never covered.
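One dependency behavior worth testing explicitly is what happens when a vendor call fails. The sketch below uses a hypothetical `VendorTimeout` exception and a simulated outage to contrast a fail-open wrapper, where a stressed vendor silently becomes an approval, with a fail-closed one that routes the user to review.

```python
from typing import Callable

class VendorTimeout(Exception):
    """Hypothetical error raised when an identity-verification vendor does not respond in time."""

def verify_identity(vendor_check: Callable[[str], bool], user_id: str, fail_open: bool) -> bool:
    """Return True if the user may proceed without manual review."""
    try:
        return vendor_check(user_id)
    except VendorTimeout:
        # The design decision under test: what does an outage turn into?
        return fail_open

def stressed_vendor(user_id: str) -> bool:
    """Simulated vendor under load: every call times out."""
    raise VendorTimeout(f"no response for {user_id}")

def red_team_outage_check(fail_open: bool) -> str:
    """Report whether an outage lets an unverified user through."""
    allowed = verify_identity(stressed_vendor, "unverified-user", fail_open=fail_open)
    return "FINDING: outage lets unverified users through" if allowed else "ok: outage routes to review"

print(red_team_outage_check(fail_open=True))   # FINDING: outage lets unverified users through
print(red_team_outage_check(fail_open=False))  # ok: outage routes to review
```

Internal validation typically exercises the vendor's happy path; the outage branch is where downstream models can quietly inherit an approval nobody intended.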

All of this is why AI red teaming in fintech is not a branding exercise. It is a structured attempt to see how systems behave when someone is trying, deliberately, to make them misbehave. Durable fintech AI security depends on that perspective.

4. How Fintech AI Fails Under Adversarial Pressure

Financial AI systems don’t usually “break.” They behave exactly as they were trained to behave — and that’s the problem.

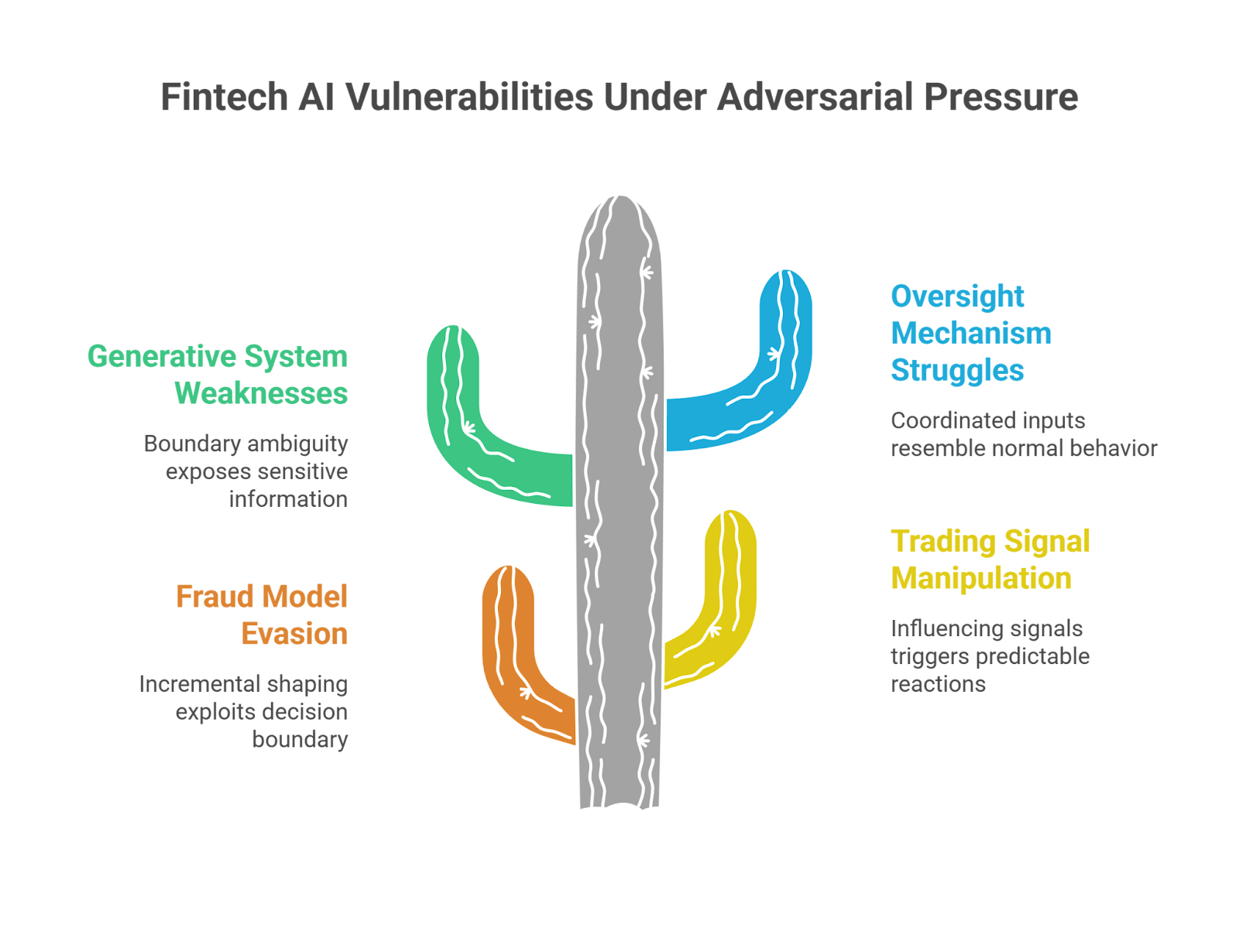

Consider fraud models. When teams analyze real-world evasion attempts, the pattern is rarely brute force. It’s incremental shaping. Transaction values move slightly. Timing shifts. Merchant categories rotate. If the scoring curve flattens near a decision boundary, that boundary becomes usable. The model isn’t malfunctioning. It’s responding consistently — and that consistency becomes exploitable.

Trading environments show a different dynamic. Automated strategies respond to structured inputs. If a model consumes external signals — volatility feeds, correlated asset patterns, structured news — those signals don’t need to be corrupted entirely. They only need to be influenced enough to trigger predictable reactions. This is how trading algorithm manipulation scenarios emerge: by steering what the model believes rather than attacking infrastructure directly.

Generative systems introduce yet another layer. In fintech deployments, assistants often sit close to sensitive workflows. They may retrieve account information, summarize internal policies, or interact with compliance tools. When red teams probe these environments, failures are often gradual.

A response that exposes slightly more context than intended.

A tool invocation that wasn’t explicitly restricted.

Weaknesses in LLM security in fintech tend to surface as boundary ambiguity rather than dramatic override.

Oversight mechanisms also struggle under pressure. Monitoring systems are frequently tuned to detect statistical drift over time. Coordinated adversarial inputs don’t always resemble drift. They resemble normal behavior — just strategically aligned.

None of these patterns look catastrophic in isolation. But in financial systems, small predictable behaviors can scale quickly. That scaling effect is where exposure compounds.

5. Why Traditional Testing Misses These Risks

Financial institutions don’t ignore testing. In fact, they test extensively. Models are backtested. Risk teams review documentation. Compliance signs off. Everything looks controlled on paper.

The blind spot appears in the assumptions.

Most validation exercises rely on historical data. That makes sense — it’s measurable, labeled, auditable. But historical datasets reflect what has already happened. They don’t represent someone actively trying to reverse-engineer decision logic. A fraud model trained on known attack patterns may perform well against variations of those patterns. It may struggle with behaviors designed specifically to avoid them.

Infrastructure testing has similar limits. Penetration tests focus on exposed services, authentication flows, misconfigurations. They rarely examine how a scoring function behaves if an adversary adjusts inputs gradually over time. Exploiting a model doesn’t require breaching a firewall. It requires understanding how the model reacts.

Even model risk governance frameworks emphasize statistical stability — calibration curves, performance metrics, drift monitoring. These are necessary controls. But they assume organic change, not strategic manipulation.

That’s the gap.

Traditional testing evaluates whether a system works. It does not fully explore how the system behaves when someone is intentionally trying to make it misbehave. Without adversarial evaluation, weaknesses remain theoretical — until they aren’t.

This is why AI red teaming in fintech complements existing controls. It does not replace governance; it stress-tests it. And without that stress, assessments of fintech AI security can appear stronger than they actually are.

6. Conclusion

Financial AI now sits in positions of authority. It approves transactions, blocks accounts, influences trades, and retrieves sensitive information. When those systems behave predictably under pressure, predictability becomes exploitable.

The rise in AI-assisted scams and signal manipulation attempts is not an anomaly. It reflects a shift in attacker capability. Adversaries are studying models the same way institutions study performance metrics.

That shift changes the testing requirement.

Performance validation answers whether a system works. Governance frameworks document how it is controlled. Neither fully answers how it behaves when inputs are deliberately shaped to bypass safeguards.

This is where AI red teaming in fintech becomes foundational rather than experimental. It introduces hostile intent into evaluation cycles and reveals structural weaknesses that routine validation overlooks. Without that lens, even mature programs may misjudge the resilience of their models.

Durable fintech AI security depends on treating financial AI as an active attack surface. In environments where algorithms move money and influence markets, resilience cannot be assumed. It has to be tested under pressure.

“Fintech AI doesn’t fail all at once. It fails quietly, transaction by transaction.”
