AI Red Teaming in Gaming & Esports: Securing Anti-Cheat, Moderation, and Player AI

Key Takeaways

AI anti-cheat systems can be systematically bypassed when attackers understand model behavior.

AI moderation in gaming is vulnerable to coordinated reporting and contextual manipulation.

Esports AI failures are public, irreversible, and damaging to competitive integrity.

AI hallucinations create confident but incorrect enforcement decisions at scale.

Continuous AI red teaming is the only way to defend against evolving player-driven abuse.

1. Introduction

Modern multiplayer games quietly rely on AI systems to keep everything running smoothly. Anti-cheat engines watch for strange player behavior, moderation models scan chat and voice channels, and matchmaking algorithms constantly reshuffle players to keep matches fair. In large online games, these systems process millions of events every hour. A sudden spike in accuracy, an unusual movement pattern, or a wave of abusive messages can trigger automated decisions within seconds.

And here’s the uncomfortable part: once these systems become the referees of competitive play, they also become targets.

Over the past few years, cheat developers have shifted tactics. Instead of obvious hacks that are easy to detect, many tools now attempt to behave like real players. Some automation scripts deliberately miss shots, introduce random delays, and simulate human-like mouse movement. The idea is simple—stay just unpredictable enough that detection models treat the behavior as legitimate gameplay.

That approach has worked more often than game studios would like to admit. In several competitive titles, suspicious accounts slipped through behavioral anti-cheat systems for extended periods before developers caught the pattern. Players noticed something was off long before automated defenses reacted.

These incidents highlight a broader challenge in AI security in gaming. AI systems make critical decisions—who gets banned, whose report is accepted, and sometimes who qualifies for ranked competition. When attackers learn how those models behave, they can start experimenting with ways to avoid them.

This is why AI red teaming in gaming is gaining attention among game security teams. Instead of assuming defensive models will work under real pressure, adversarial testing deliberately stresses them—probing for weaknesses before exploit developers find them.

For developers, platform operators, and tournament organizers, the message is becoming clear: if AI systems enforce fairness, they also need to be tested like any other high-value security system.

2. How AI Actually Runs Modern Online Games

A lot of players assume game security is handled by simple software checks. That was mostly true years ago. Today, things look different. Large multiplayer games rely on AI systems that constantly watch what players do—every movement, every match result, and every report sent by the community.

Let’s start with anti-cheat systems. Earlier anti-cheat tools mostly searched for known cheat programs running on a player’s device. Modern systems go further. They examine gameplay itself. Things like crosshair movement, reaction timing, shooting accuracy, and how consistently someone performs across multiple matches all become signals the model evaluates.

If the behavior starts looking statistically strange, the system raises a flag.
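To make "statistically strange" concrete, here is a minimal sketch of one way such a flag could work: compare a player's average reaction time against a baseline using a z-score. The function names, the baseline numbers, and the threshold of 3 standard deviations are all assumptions for illustration; production systems combine many more signals.

```python
from statistics import mean, stdev

def z_score(value, baseline):
    """How many standard deviations `value` sits from the baseline mean."""
    sigma = stdev(baseline)
    return 0.0 if sigma == 0 else (value - mean(baseline)) / sigma

def is_suspicious(reaction_ms, baseline_ms, threshold=3.0):
    """Flag reactions that are far *faster* than the baseline population."""
    return z_score(reaction_ms, baseline_ms) < -threshold

# Hypothetical baseline: average reaction times (ms) from trusted sessions.
baseline_ms = [220, 250, 240, 260, 230, 245, 255, 235]

print(is_suspicious(120, baseline_ms))  # far faster than anyone in the baseline
print(is_suspicious(225, baseline_ms))  # within ordinary variation
```

A single-signal check like this is easy to reason about, which is also what makes it easy to study from the outside.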

The problem? Behavior models can sometimes be studied. Cheat developers often test their tools against detection systems until they find patterns that pass unnoticed. That’s where anti-cheat AI vulnerabilities begin to appear. A cheat tool doesn’t need perfect accuracy; it just needs to look believable enough.

Another major AI layer appears in moderation. Competitive games generate massive volumes of chat and voice communication every minute. Human moderators alone couldn’t keep up with it. Automated models help identify harassment, threats, or abusive language so that reports can be reviewed faster. But language inside gaming communities is messy. Slang changes quickly. Sarcasm, inside jokes, or coded insults can confuse automated filters.

Matchmaking systems add another layer of AI decision-making. They examine performance history, ranking movement, and player behavior signals to determine who should face whom in competitive matches. A small change in the model’s assumptions can shift how players move through ranked ladders.

Because these systems influence bans, rankings, and competitive fairness, security teams increasingly examine them through AI red teaming exercises built around esports scenarios. The goal is simple: understand how someone might manipulate the system before it happens in the wild.

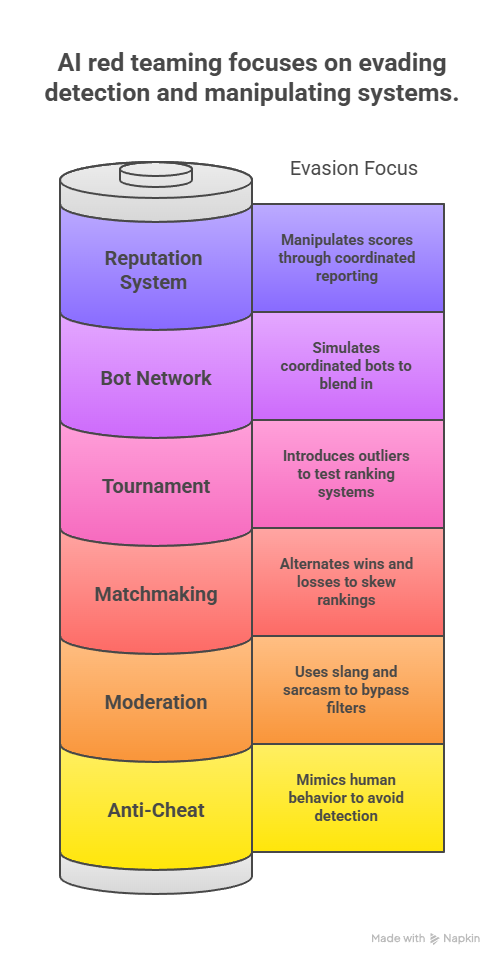

3. AI Red Teaming Use Cases in Gaming and Esports

Ask anyone who has worked on a large online game, and they’ll tell you the same thing: players test the system constantly. Not just the gameplay mechanics. The protections, too. If there’s a detection model somewhere in the pipeline, someone out there is trying to figure out how it behaves.

That reality is the reason security teams run adversarial exercises. Instead of assuming defensive systems will work perfectly, engineers try to poke holes in them on purpose. It’s a bit like stress-testing a bridge before opening it to traffic—better to see what breaks early.

Here are a few situations where teams usually focus their efforts.

3.1 Anti-Cheat Behavior Evasion

Modern anti-cheat systems often rely on behavior patterns. They look at reaction times, aiming movement, accuracy over time, and other gameplay signals. When those patterns look unusual, the system raises a flag.

So during testing, engineers sometimes flip the problem around. They build controlled tools that behave almost like a human player.

Typical experiments include things like aim assistance that occasionally misses on purpose, automated actions with tiny random delays, or movement that imitates normal player hesitation. The idea is to see whether the detection model can still tell the difference.

Tests like these sometimes reveal anti-cheat AI vulnerabilities where automated play blends in surprisingly well with legitimate gameplay.
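A toy version of such an experiment might look like the sketch below. It generates two synthetic sessions, one blatant and one "humanized" with random delays and deliberate misses, and runs both through a deliberately naive detector. Everything here is hypothetical: the detector thresholds (150 ms, 95% accuracy), the session parameters, and the function names are stand-ins, and no real game client is involved.

```python
import random
from statistics import mean

def naive_detector(reaction_ms, hits):
    """Flag a session whose average reaction is too fast or accuracy too high."""
    accuracy = sum(hits) / len(hits)
    return mean(reaction_ms) < 150 or accuracy > 0.95

def simulate_session(rng, shots, base_ms, jitter_ms, miss_rate):
    """Build one synthetic session: per-shot reaction times and hit flags."""
    reaction_ms = [base_ms + rng.uniform(0, jitter_ms) for _ in range(shots)]
    hits = [rng.random() >= miss_rate for _ in range(shots)]
    return reaction_ms, hits

rng = random.Random(7)  # fixed seed so the experiment is repeatable

# Blatant automation: constant 80 ms reactions, never misses.
blatant = simulate_session(rng, 200, base_ms=80, jitter_ms=0, miss_rate=0.0)
# "Humanized" automation: 180-300 ms reactions, misses about a quarter of shots.
humanized = simulate_session(rng, 200, base_ms=180, jitter_ms=120, miss_rate=0.25)

print("blatant flagged:", naive_detector(*blatant))
print("humanized flagged:", naive_detector(*humanized))
```

The useful output of a test like this is not the pass or fail result itself but the list of parameter ranges where the detector stops seeing a difference.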

3.2 Moderation Filter Evasion

Chat moderation is another area where automated systems do a lot of heavy lifting. In large multiplayer games, thousands of messages can appear every minute. Human moderators alone simply can’t keep up.

But players are creative. Language in gaming communities changes constantly—new slang, memes, sarcastic phrases, and coded insults appear all the time.

During red team exercises, security teams experiment with those edge cases. They might test phrases with unusual spelling, comments where tone changes meaning, or community slang used in unexpected ways.

Sometimes, a phrase that looks harmless to a moderation model is instantly understood by players as hostile.
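The unusual-spelling case is the easiest to sketch. Below, a naive keyword filter is compared with one that normalizes common character substitutions and spacing before matching. The blocklist term, the substitution table, and both function names are placeholders chosen for illustration, not any real moderation product's logic.

```python
import re

BLOCKLIST = {"trash"}  # placeholder term standing in for a real blocklist

# Hypothetical table of common character substitutions.
LEET = str.maketrans({"4": "a", "3": "e", "1": "i", "0": "o",
                      "5": "s", "@": "a", "$": "s"})

def naive_filter(message):
    """Match whole lowercase words against the blocklist."""
    words = re.findall(r"[a-z]+", message.lower())
    return any(word in BLOCKLIST for word in words)

def normalized_filter(message):
    """Undo substitutions and strip separators, then search for blocked terms."""
    text = message.lower().translate(LEET)
    collapsed = re.sub(r"[^a-z]", "", text)  # drop spaces, digits, punctuation
    return any(term in collapsed for term in BLOCKLIST)

for probe in ["you are trash", "you are tr4sh", "t r a s h", "extra shoes"]:
    print(f"{probe!r}: naive={naive_filter(probe)} "
          f"normalized={normalized_filter(probe)}")
```

Note the last probe: collapsing spaces makes "extra shoes" contain the blocked term, so the stricter filter trades evasion resistance for false positives. Red team probes should measure both sides of that trade, and neither filter addresses sarcasm or context, which need different techniques.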

3.3 Matchmaking Manipulation

Matchmaking models estimate skill levels based on previous performance. On paper, the goal is balanced matches. In practice, ranking systems can behave in strange ways when the input data changes suddenly.

Testing often involves simulated players who behave inconsistently: performing extremely well in one match and poorly in the next, or deliberately alternating wins and losses.

These scenarios help reveal how sensitive the ranking system is to unusual data patterns.
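One way to quantify that sensitivity is to feed scripted result patterns into a rating model and measure how far the rating moves. The sketch below uses a standard Elo-style update as a stand-in, since the rating models used by real games are not described here; the K-factor of 32, the fixed 1500-rated opponent, and the helper names are assumptions.

```python
def expected_score(rating, opponent):
    """Elo expected score of `rating` against `opponent`."""
    return 1.0 / (1.0 + 10 ** ((opponent - rating) / 400))

def rating_history(results, start=1500.0, opponent=1500.0, k=32.0):
    """Apply an Elo update per match; `results` is a list of win flags."""
    rating, history = start, [start]
    for won in results:
        score = 1.0 if won else 0.0
        rating += k * (score - expected_score(rating, opponent))
        history.append(rating)
    return history

def swing(history):
    """Distance between the highest and lowest rating reached."""
    return max(history) - min(history)

# Same record (10 wins, 10 losses), two different orderings.
alternating = rating_history([True, False] * 10)
streaky = rating_history([True] * 10 + [False] * 10)

print(f"alternating swing: {swing(alternating):.1f}")
print(f"streaky swing:     {swing(streaky):.1f}")
```

Both simulated players finish with an identical win-loss record, yet the streaky ordering drags the rating much further from its starting point along the way. That gap is the kind of behavior a sensitivity test is meant to surface.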

3.4 Tournament Qualification Testing

Online esports events frequently rely on automated rankings to determine who qualifies for competitions. That makes ranking systems part of the competitive infrastructure.

Security teams sometimes test what happens when unusual performance data enters those systems—unexpected win streaks, statistical outliers, or activity patterns that don’t match normal player behavior.

The purpose is to understand whether the platform's security AI can detect signals that look suspicious but still technically fall within normal ranges.
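A record can sit inside the range seen across the whole player base and still be very unlikely for one specific account. As a rough sketch, the check below asks how probable a win count is given that account's historical win rate, using a binomial tail. It assumes matches are independent with a fixed win probability, which real competitive play only approximates, and the review cutoff of 0.001 is an arbitrary illustrative value.

```python
from math import comb

def tail_probability(wins, games, p_win):
    """P(at least `wins` wins in `games` independent matches at rate p_win)."""
    return sum(
        comb(games, k) * p_win**k * (1 - p_win) ** (games - k)
        for k in range(wins, games + 1)
    )

def needs_review(wins, games, p_win, alpha=0.001):
    """Queue the account for human review when the record is very unlikely."""
    return tail_probability(wins, games, p_win) < alpha

# An account with a historical 50% win rate suddenly goes 18-2 in qualifiers.
print(f"{tail_probability(18, 20, 0.5):.6f}")
print(needs_review(18, 20, 0.5))   # improbable for this account
print(needs_review(13, 20, 0.5))   # an ordinary good run
```

An 18-2 record is not unusual for a top-ranked player, so a global threshold would miss it. Measured against this account's own history, it stands out.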

3.5 Bot Network Simulation

Bots are nothing new in online games, but large groups of them can behave in surprisingly subtle ways. A single automated account is easy to catch. Fifty coordinated ones are harder.

Testing may involve simulated bot groups repeating gameplay loops, farming resources, or interacting with in-game marketplaces.

The interesting question isn’t whether bots exist. It’s whether they can look enough like real players to blend into the crowd.
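One simple lens for that question is to compare accounts with each other rather than against a population norm. The sketch below measures how much two accounts' action sequences overlap using Jaccard similarity over short action n-grams. The action names, account IDs, n-gram length, and 0.8 threshold are all made up for illustration.

```python
from itertools import combinations

def ngrams(actions, n=3):
    """Set of consecutive action n-grams in one account's session."""
    return {tuple(actions[i:i + n]) for i in range(len(actions) - n + 1)}

def jaccard(a, b):
    """Overlap between two sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def similar_pairs(sessions, threshold=0.8):
    """Return account pairs whose action patterns overlap above the threshold."""
    grams = {account: ngrams(seq) for account, seq in sessions.items()}
    return [
        (x, y)
        for x, y in combinations(sorted(grams), 2)
        if jaccard(grams[x], grams[y]) >= threshold
    ]

# Hypothetical sessions: two accounts run the same loop at different offsets.
sessions = {
    "bot_a": ["mine", "sell", "move"] * 4,
    "bot_b": ["sell", "move", "mine"] * 4,
    "human": ["move", "fight", "loot", "move", "chat", "mine", "sell", "fight"],
}

print(similar_pairs(sessions))
```

Each looping account might look unremarkable on its own. The near-identical overlap between them is the signal, and a red team would then test how much randomization is needed before that overlap drops below the threshold.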

3.6 Reputation System Experiments

Some games track player reputation or sportsmanship. Those scores might affect matchmaking, report systems, or moderation priority.

Security teams sometimes test whether those scores can be manipulated—for example, through coordinated reporting behavior or unusual engagement patterns.

Even small changes in input signals can gradually influence how these systems evaluate players.
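Coordinated reporting is straightforward to model in a test. The sketch below contrasts a raw report count with a count that treats all reports from the same party as one. The `(reporter, party, target)` tuple layout and the idea that a party ID is available are assumptions; real systems may need to infer coordination from other signals.

```python
from collections import defaultdict

def raw_report_count(reports):
    """Count every report against each target."""
    counts = defaultdict(int)
    for _reporter, _party, target in reports:
        counts[target] += 1
    return dict(counts)

def independent_report_count(reports):
    """Count distinct reporting parties per target, not individual reports."""
    parties = defaultdict(set)
    for _reporter, party, target in reports:
        parties[target].add(party)
    return {target: len(seen) for target, seen in parties.items()}

# Hypothetical data: one five-player party mass-reports a single opponent,
# while two unrelated players report someone else.
reports = [
    ("r1", "party_1", "victim"), ("r2", "party_1", "victim"),
    ("r3", "party_1", "victim"), ("r4", "party_1", "victim"),
    ("r5", "party_1", "victim"),
    ("r6", "party_2", "toxic_player"), ("r7", "party_3", "toxic_player"),
]

print(raw_report_count(reports))
print(independent_report_count(reports))
```

Under the raw count the mass-reported player looks worse than the one reported by two independent players. Under the party-aware count the ordering flips, which is the kind of difference a reputation red team exercise tries to expose.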

4. How Gaming AI Fails Under Real Adversarial Pressure

People often assume cheating tools in games are obvious. Perfect aim. Instant reactions. Movement that looks robotic. Those things do get caught quickly. Most modern detection systems are built exactly for that kind of behavior.

But attackers rarely stay that obvious for long.

Cheat developers learned that subtlety works better. Instead of writing tools that perform perfectly, they design them to look imperfect. A script might intentionally miss a few shots. Another might add tiny delays before firing. Movement paths can include small corrections so they resemble normal mouse movement.

From the system’s perspective, the numbers suddenly look normal enough. That’s where anti-cheat AI vulnerabilities begin to appear. The model sees data that fits expected ranges, even though the player is still gaining an advantage.

Moderation systems face a different problem. Language inside gaming communities moves fast. A phrase that looks harmless to a machine might be understood instantly by players as an insult. Sarcasm, memes, and slang change meaning depending on context. Automated filters often struggle with that.

Some experiments in AI security in gaming have shown that even small wording changes can bypass moderation models that rely heavily on keyword patterns.

Ranking systems introduce yet another weak point. Matchmaking algorithms depend on player statistics to estimate skill levels. If groups of players deliberately manipulate those signals—alternating wins, throwing matches, or coordinating unusual play styles—the system may start making incorrect predictions.

These situations are not always the result of advanced hacking. Quite often they begin with simple curiosity. Players try something, see how the system reacts, and adjust. Over time those observations spread through communities and eventually turn into reliable ways to avoid detection.

Understanding these patterns is exactly why security teams are beginning to run AI red teaming exercises in gaming environments.

5. Conclusion

Spend enough time in online multiplayer games and you’ll notice something: players care a lot about fairness. Losing a match isn’t the problem. Feeling like the system itself is broken—that’s when people get frustrated.

Running modern online games is complicated. Thousands of matches happen at the same time, and every one of them produces huge amounts of gameplay data. Because of this scale, developers rely on automated systems to help monitor gameplay patterns, review player reports, and keep competitive rankings organized.

This is why discussions around AI security in gaming have become more common in recent years. When automated systems influence bans, rankings, or player moderation, they become an important part of the platform’s overall security.

Competitive gaming brings an additional layer of responsibility. Many tournaments and ranked ladders depend on AI-driven security systems to help maintain fair competition across large groups of players. If those systems behave incorrectly, the credibility of the competition itself can be questioned.

For this reason, some developers have started exploring AI red teaming in gaming as a way to examine how these systems behave when unusual gameplay patterns or unexpected player behavior appear. Testing systems from that perspective can help teams identify weaknesses earlier and improve the systems that support fair play.

“When players learn how your AI thinks, fairness becomes a security problem.”
