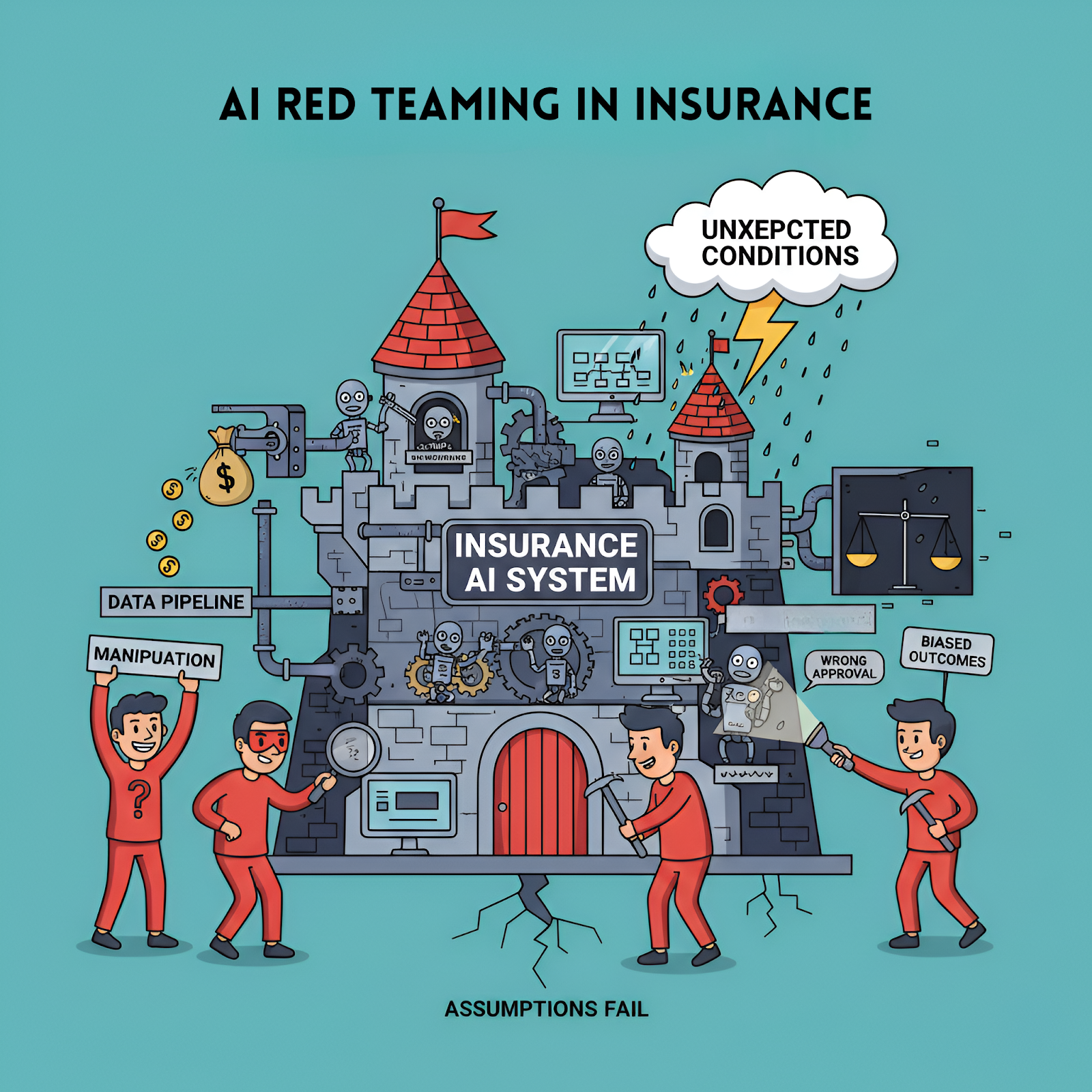

AI red teaming in insurance : Testing Underwriting, Claims, and Risk Models

.png)

Key Takeaways

AI red teaming focuses on how insurance AI systems fail, not just how they perform.

Traditional validation cannot detect real-world model manipulation in insurance AI.

High-impact insurance AI systems must be tested under adversarial and misuse scenarios.

Continuous AI security testing in insurance helps detect silent failures before they scale.

Strong insurance AI security is built through evidence-based testing, not assumptions of safety.

1. Introduction

Insurance companies didn’t suddenly decide to “adopt AI.” It happened gradually. First came automated underwriting tools, then fraud detection models, and eventually machine learning systems that estimate risk across entire portfolios.

Today many insurers depend on these models every day. An underwriting model may decide whether someone qualifies for coverage. A claims system might flag suspicious activity before a human investigator even looks at the case. Risk models run quietly in the background, estimating potential losses from everything—car accidents to natural disasters.

Most of the time, these systems work well. But they don’t always behave the way developers expect.

A slightly unusual claim description might confuse a fraud detection model. A rare customer profile could push an underwriting system into territory it never saw during training. And if someone intentionally tries to manipulate the inputs, the results can become unpredictable.

That’s where Insurance AI security starts to matter.

Instead of assuming models behave correctly, organizations are beginning to test them the way attackers would. This practice—known as AI red teaming in insurance—puts models under pressure. Red teams try unusual inputs, edge cases, and adversarial scenarios to see how "underwriting" systems, claims models, and risk predictions react.

Sometimes the findings are surprising. Small changes in data can shift model decisions more than expected. Discovering those weaknesses early is far better than learning about them after a costly mistake or a regulatory "investigation".

2. How AI Actually Shapes Insurance Decisions

If you ask someone in insurance where AI is used, the answer usually starts with underwriting. But that’s only part of the picture. In practice, machine learning shows up in several places across the insurance workflow — sometimes quietly.

Take underwriting first.

Underwriting Systems

When someone applies for a policy, the model evaluates dozens of variables at once. Driving history, medical indicators, claim records, even patterns across similar applicants. The goal is simple: estimate the probability of future loss.

Most applications pass through without any drama. But unusual cases can confuse the model. A rare combination of attributes might push the system outside the patterns it learned during training. In testing, these situations sometimes expose AI model bias insurance systems accidentally learn from historical data.

Claims systems behave differently.

Claims Review and Fraud Detection

Here the models read claim descriptions, check supporting documents, and compare them with past cases. Fraud detection algorithms are trained to notice patterns investigators have seen before.

The weakness? Fraud rarely repeats itself in the same way. Slight changes in wording or document structure can sometimes slip past automated checks. During security testing, researchers often uncover AI claims fraud detection vulnerabilities where manipulated claims still appear "legitimate" to the model.

Risk modeling adds another layer.

Some insurers run predictive systems that estimate long-term exposure across thousands or millions of policies. These models influence pricing "strategies" and reserve planning. If their predictions drift or react badly to unusual data, the financial impact can spread far beyond a single policy.

That’s exactly why these systems eventually become targets for adversarial testing.

3. Where Red Teams Put Insurance AI Under Stress

When people hear about security testing, they usually imagine someone trying to hack a system. Red teaming AI looks different. The model itself becomes the target.

Underwriting models are often the first place testers look.

Instead of submitting clearly fake applications, red teams create profiles that are almost normal. A driving record that looks typical but includes a rare pattern. Medical history that resembles thousands of past applicants but contains a small anomaly. These combinations are interesting because models sometimes react unpredictably when inputs sit just outside familiar training data.

In a few experiments researchers have run, those edge cases exposed traces of AI model bias insurance systems had quietly learned from historical datasets. Not obvious bias — the subtle kind that only appears when the model encounters unusual demographic combinations.

Claims models behave differently, and testers approach them differently too.

Fraud detection systems usually depend on patterns in text, documents, and past behavior. So the red team plays with those patterns. A claim description might be rewritten in three slightly different ways. Supporting documents might contain small inconsistencies that look harmless to a human reviewer.

Surprisingly, that can be enough. During testing, investigators sometimes uncover AI claims fraud detection vulnerabilities where a manipulated claim still passes automated checks because the wording falls outside the patterns the model expects.

Another weak point has appeared more recently: AI assistants used inside insurance workflows.

Some underwriting or claims teams now rely on AI tools to summarize files or answer internal questions. If those tools process external documents or customer messages, they can occasionally be influenced by hidden instructions embedded in the text. Security researchers have demonstrated prompt-style manipulation attacks in several enterprise AI systems.

Risk models are the last piece red teams tend to examine. These systems forecast losses across huge portfolios. Most of the time their predictions look stable — until someone feeds them unusual distributions of data or rare catastrophe scenarios.

That’s usually when interesting behavior starts to show up.

4. How Insurance AI Systems Actually Break

Most AI failures in insurance aren’t dramatic security breaches. They look ordinary at first.

A policy application goes through underwriting. The model reviews dozens of attributes — driving history, claim frequency, location patterns. Nothing unusual. But then a strange combination appears. Maybe the applicant fits several categories the model rarely sees together. Suddenly the risk score jumps in a way that doesn’t make much sense.

Red teams see this kind of thing during testing more often than people expect. It’s not always malicious input either. Sometimes the model simply reacts badly when it leaves familiar territory.

Claims systems reveal different behavior.

Fraud detection models are trained on historical patterns. Investigators label past fraud cases, the system learns those signals, and automation begins. The problem is that fraud evolves. Change the wording of a claim slightly. Adjust the timing between events. Introduce documents that look legitimate but carry subtle inconsistencies.

Those small variations can expose AI claims fraud detection vulnerabilities where the model treats a manipulated claim as routine.

Then there are risk models.

These systems predict exposure across thousands or millions of policies. Under normal conditions they behave well. But introduce unusual distributions — synthetic catastrophe data, unexpected correlations between variables — and predictions can shift quickly.

That’s the kind of instability adversarial testers look for during AI red teaming in insurance exercises. Not catastrophic failure. Just the small cracks that appear when a model is pushed outside its comfort zone.

Those cracks matter. In insurance, even minor prediction errors can ripple across underwriting decisions, fraud investigations, and long-term risk planning.

5. What Insurers Usually Discover After Testing Their Models

When insurers begin testing their AI systems seriously, the first surprise is often how sensitive some models are to unusual inputs.

Underwriting models are a good example. During adversarial testing, teams sometimes create applicant profiles that look perfectly legitimate but contain uncommon combinations of attributes. Nothing obviously wrong. Yet the model suddenly produces a very different risk score. That kind of behavior usually signals that the model learned patterns too closely tied to historical data — something frequently associated with AI model bias insurance systems inherit from past decisions.

Claims systems tend to reveal different problems.

Fraud detection models are trained on patterns investigators have already seen. The difficulty is that fraud rarely repeats itself exactly the same way. A slightly rewritten claim description, a modified timeline, or a document formatted differently may be enough to slip past automated screening. Security teams testing these systems occasionally uncover AI claims fraud detection vulnerabilities where manipulated claims still appear normal to the model.

The interesting part is that none of these issues typically appear during standard QA testing.

That’s why organizations are starting to adopt AI red teaming in insurance programs. Instead of asking whether the system works, the testing team asks a different question: What happens when someone intentionally tries to confuse the model?

The answers can be uncomfortable — but they are far easier to deal with during testing than after deployment.

6. Conclusion

Insurance has always relied on data to evaluate risk. What has changed is the speed and scale at which those decisions now happen. Machine learning systems review applications, flag suspicious claims, and estimate financial exposure faster than any manual process could.

That efficiency comes with a trade-off. Models learn patterns from historical data, and those patterns do not always hold when new or unusual inputs appear. Sometimes the issue is simple prediction instability. Other times it reveals deeper concerns related to Insurance AI security, fairness, or model reliability.

Testing these systems only under normal conditions rarely exposes those weaknesses. Adversarial scenarios tend to reveal much more. That is why AI red teaming in insurance is becoming an important practice for organizations deploying AI across underwriting, claims, and risk modeling.

For insurers, the goal is not to eliminate every possible model failure. That would be unrealistic. The real objective is earlier visibility — discovering fragile behavior during controlled testing rather than after a model has already influenced thousands of real policy decisions.

“Insurance AI doesn’t fail all at once, it fails quietly, decision by decision, until the impact is unavoidable.”

.svg)

.png)

.svg)

.png)

.png)