AI Red Teaming in Marketing & AdTech: Preventing Manipulation and Spend Abuse


Key Takeaways

AI-driven advertising systems can be misled by manipulated engagement signals without triggering alerts.

Click fraud and synthetic behavior can quietly influence targeting, bidding, and optimization models.

Campaign optimization engines can amplify small fraudulent signals into large-scale budget shifts.

Attribution systems are vulnerable to hijacking, leading to misallocated conversions and spend.

AI red teaming in marketing helps uncover hidden weaknesses before they turn into costly failures.

1. Introduction

Advertising budgets don’t disappear all at once. They leak.

A campaign suddenly starts getting more clicks than usual. Performance dashboards look healthy. The bidding system increases spending because engagement appears strong. No alarms go off.

Then someone notices something strange—conversions aren’t lining up with revenue.

This pattern shows up more often than most marketing teams admit. Automated campaign systems rely on behavioral signals: clicks, impressions, session length, and conversions. When those signals look positive, algorithms reward them with more budget. That’s how optimization models work.

But those signals can be fabricated.

One of the most widely documented ad fraud operations was Methbot, a bot network exposed by researchers at White Ops in 2016 and designed to impersonate real viewers watching video ads. The bots didn't just load pages. They simulated full browsing sessions: scrolling, moving cursors, and loading video players. To advertising platforms, the traffic looked authentic, so premium video ads were served to it continuously. Estimates put the fraudulent ad revenue at millions of dollars per day at the operation's peak, before it was exposed and disrupted.

The incident exposed something uncomfortable about automated advertising systems: they trust the data pipeline. If attackers manipulate engagement signals, the algorithms follow those signals.

That’s why AI security in AdTech is no longer just a fraud-detection problem. It’s a model integrity problem.

Security teams have started addressing this through AI red teaming in marketing—intentionally simulating adversarial behavior to see how targeting models, bidding systems, and optimization engines react. Without that testing, unnoticed marketing AI vulnerabilities can quietly enable sustained ad spend manipulation across entire campaigns.

And the worst part?

The dashboards still look fine.

2. How AI Powers Marketing and AdTech

If you look inside most modern advertising platforms, the majority of decisions are made by algorithms rather than people. Campaign managers still define goals and budgets, but the daily adjustments—bids, audiences, placements—are largely automated. That automation is exactly why AI security in AdTech matters so much.

Three types of AI systems drive most digital advertising infrastructure.

AI Targeting Systems

Audience targeting models analyze huge volumes of behavioral data: browsing activity, device patterns, purchase history, and location signals. Their job is simple in theory—predict which users are most likely to click or convert.

In practice, these models constantly update themselves based on engagement data. If attackers manage to inject manipulated behavior signals, the targeting engine may begin prioritizing low-quality or fraudulent traffic sources.

This is one area where marketing AI vulnerabilities often appear.

Programmatic Bidding Algorithms

Programmatic advertising runs on real-time bidding. When a user loads a page, an automated auction takes place in milliseconds. Bidding models decide how much an advertiser should pay for that impression based on predicted value.

The system works fast—incredibly fast. But it also depends heavily on signals about user quality and inventory legitimacy. If those signals are manipulated, bidding systems can unknowingly overpay for impressions.
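To make "overpay" concrete, here is a minimal sketch of expected-value bidding, a common textbook simplification and not how any particular platform prices impressions. The field names, the 5.0 bid cap, and the numbers are all hypothetical. The point is that the bid is a product of predicted rates, so inflating one estimate (here, the click-through rate after bot clicks) scales the bid by the same factor.

```python
from dataclasses import dataclass


@dataclass
class ImpressionSignals:
    """Signals a bidder might see for one impression (hypothetical fields)."""
    predicted_ctr: float         # estimated probability of a click
    predicted_cvr: float         # estimated probability of a conversion given a click
    value_per_conversion: float  # what the advertiser says one conversion is worth


def expected_value_bid(s: ImpressionSignals, max_bid: float = 5.0) -> float:
    """Bid the expected value of the impression, capped at max_bid."""
    ev = s.predicted_ctr * s.predicted_cvr * s.value_per_conversion
    return round(min(ev, max_bid), 4)


honest = ImpressionSignals(predicted_ctr=0.01, predicted_cvr=0.05, value_per_conversion=40.0)
# Same impression after bot clicks have tripled the click-rate estimate for this source.
inflated = ImpressionSignals(predicted_ctr=0.03, predicted_cvr=0.05, value_per_conversion=40.0)

print(expected_value_bid(honest))    # 0.02
print(expected_value_bid(inflated))  # 0.06
```

Nothing in the bidder is "broken" here: it faithfully prices the signals it is given, which is exactly why signal quality matters.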

Campaign Optimization AI

The third layer sits above everything: optimization engines. These systems monitor campaign performance and automatically reallocate budget toward what appears to work best.

Clicks increase? The budget shifts upward.

Conversions rise? The algorithm doubles down.

When fraudulent engagement signals slip through detection systems, optimization models amplify the problem. That's why strong AI ad fraud detection, including click fraud detection, has to work upstream of the optimizer. Otherwise the AI ends up optimizing for manipulated data.

3. AI Red Teaming Use Cases in Marketing & AdTech

When teams start running AI red teaming in marketing systems, the first surprise is usually how easy it is to influence campaign behavior. Most advertising models are designed to react quickly to engagement signals. That’s good for optimization. It’s not great when those signals are manipulated.

Different parts of the advertising stack fail in different ways.

3.1 Audience Signal Manipulation

Targeting models constantly watch engagement patterns—clicks, session time, and browsing activity. If those signals change, the model adjusts.

Red teams sometimes simulate this with synthetic traffic. Not huge volumes. Just enough coordinated activity to see how the model reacts.

Typical experiments include:

⮞ Injecting behavioral signals from fake user profiles

⮞ Generating engagement bursts around specific audience segments

⮞ Introducing coordinated browsing patterns across devices

Sometimes the result is subtle. Targeting models slowly start favoring traffic sources that appear highly engaged but are actually synthetic. That’s one of the more common marketing AI vulnerabilities.
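A red team can probe this drift with a small simulation before touching production traffic. The sketch below assumes a targeting model that scores each segment with an exponential moving average of its daily engagement rate. That update rule, the segment names, and the traffic numbers are hypothetical stand-ins, not any real platform's model. Segment A is genuinely better; the probe adds a trickle of synthetic engaged sessions to segment B only.

```python
def update_score(score: float, engaged: int, impressions: int, alpha: float = 0.3) -> float:
    """Exponential moving average of a segment's engagement rate (hypothetical update rule)."""
    rate = engaged / impressions if impressions else 0.0
    return (1 - alpha) * score + alpha * rate


def rank_segments(scores: dict[str, float]) -> list[str]:
    """Highest-scoring segment first, i.e. the one the targeting model would favor."""
    return sorted(scores, key=scores.get, reverse=True)


def run_probe(days: int = 5, synthetic_per_day: int = 0) -> list[str]:
    # Both segments start at the same score; A really engages at 3%, B at 2%.
    scores = {"segment_a": 0.025, "segment_b": 0.025}
    for _ in range(days):
        scores["segment_a"] = update_score(scores["segment_a"], engaged=30, impressions=1000)
        # Red-team step: synthetic sessions that all register as "engaged", added to B only.
        scores["segment_b"] = update_score(
            scores["segment_b"],
            engaged=20 + synthetic_per_day,
            impressions=1000 + synthetic_per_day,
        )
    return rank_segments(scores)


print(run_probe(synthetic_per_day=0))   # ['segment_a', 'segment_b']
print(run_probe(synthetic_per_day=15))  # ['segment_b', 'segment_a']
```

In this toy setup, 15 extra sessions a day (about 1.5% of segment B's traffic) is enough to flip the ranking after five simulated days, which is the "not huge volumes" effect described above.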

3.2 Manipulating Programmatic Auctions

Programmatic advertising operates on automated auctions. The bidding model estimates how valuable an impression might be and submits a price in real time.

Red teams test what happens when those value signals are distorted.

For example:

⮞ Fake premium inventory appearing in exchanges

⮞ Artificial competition signals increasing auction pressure

⮞ Misleading metadata about audience quality

The bidding system reacts exactly the way it was designed to—by raising bids. Under the right conditions, this can produce measurable ad spend manipulation within hours.
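The "artificial competition" case is easiest to see in a sealed-bid second-price auction, used here as a simplifying assumption. Many exchanges now run first-price auctions, where the same pressure reaches advertisers through bid-shading models instead. The bidder names and prices are hypothetical. A shill bid just below ours doesn't change who wins, but it sharply raises what the winner pays.

```python
from typing import Optional


def second_price_clearing(bids: dict[str, float], reserve: float = 0.0) -> tuple[Optional[str], float]:
    """Return (winner, price) for a sealed-bid second-price auction with a reserve price."""
    eligible = {name: bid for name, bid in bids.items() if bid >= reserve}
    if not eligible:
        return None, 0.0
    ranked = sorted(eligible.items(), key=lambda kv: kv[1], reverse=True)
    winner = ranked[0][0]
    # The winner pays the runner-up's bid, or the reserve if nobody else is eligible.
    price = ranked[1][1] if len(ranked) > 1 else reserve
    return winner, price


honest = {"our_bidder": 2.50, "rival": 1.20}
# Red-team step: inject a fake bidder that never intends to win.
with_shill = {**honest, "shill": 2.40}

print(second_price_clearing(honest))      # ('our_bidder', 1.2)
print(second_price_clearing(with_shill))  # ('our_bidder', 2.4)
```

The clearing price doubles while every dashboard still shows the campaign "winning" the same impression.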

3.3 Stress-Testing Fraud Detection Models

Fraud detection models try to identify abnormal traffic patterns. But sophisticated bot networks rarely behave like obvious bots anymore.

A red team might simulate traffic that:

⮞ scrolls pages

⮞ pauses between clicks

⮞ watches partial video ads

⮞ moves across residential IP ranges

The goal isn’t to flood the system. It’s to see what slips through. Weak detection models often miss coordinated activity like this, which is why advanced click fraud AI detection methods rely on deeper behavioral analysis.
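A simple harness for this kind of test generates synthetic sessions and measures what fraction the detector misses. The detector below is a deliberately naive, hypothetical rule set (datacenter IP, no scrolling, or near-constant click timing). It stands in for whatever model is under test, and the session profiles are illustrative. The crude bot is always caught; the human-like profile from the list above mostly is not.

```python
import random
import statistics
from dataclasses import dataclass


@dataclass
class Session:
    click_gaps_s: list[float]  # seconds between consecutive clicks
    scrolled: bool             # did the session produce any scroll events?
    datacenter_ip: bool        # does the IP resolve to a hosting/datacenter range?


def naive_detector(s: Session, min_cv: float = 0.2) -> bool:
    """Hypothetical rule set: returns True when the session is flagged as a bot."""
    if s.datacenter_ip or not s.scrolled:
        return True
    if len(s.click_gaps_s) >= 2:
        mean = statistics.mean(s.click_gaps_s)
        # Coefficient of variation: near-constant click timing is a classic automation tell.
        cv = statistics.stdev(s.click_gaps_s) / mean if mean > 0 else 0.0
        if cv < min_cv:
            return True
    return False


def crude_bot(rng: random.Random) -> Session:
    # Fixed 2-second clicks, no scrolling, datacenter IP.
    return Session([2.0] * 5, scrolled=False, datacenter_ip=True)


def humanlike_bot(rng: random.Random) -> Session:
    # Jittered pauses between clicks, scroll events, residential IP.
    gaps = [rng.uniform(1.0, 9.0) for _ in range(5)]
    return Session(gaps, scrolled=True, datacenter_ip=False)


def slip_through_rate(make_session, n: int = 1000, seed: int = 7) -> float:
    """Fraction of synthetic sessions the detector fails to flag."""
    rng = random.Random(seed)
    missed = sum(not naive_detector(make_session(rng)) for _ in range(n))
    return missed / n


print("crude bot slip-through:", slip_through_rate(crude_bot))  # 0.0
print("human-like bot slip-through:", slip_through_rate(humanlike_bot))
```

Real detectors use far richer features, but the harness shape (generate adversarial profiles, measure the miss rate per profile) carries over.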

3.4 Optimization Feedback Loop Attacks

Campaign optimization engines can create unexpected feedback loops.

Here’s a simple scenario a red team might test:

⮞ Inject small numbers of fake conversions

⮞ Allow the optimization system to detect the “high-performing” traffic source

⮞ Watch budget automatically shift toward it

No direct attack on the bidding system is required. The algorithm amplifies the signal on its own.

That’s a classic exploitation path in automated advertising systems.
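The loop can be reproduced in a few dozen lines. This sketch assumes a hypothetical optimizer that, each round, moves 10% of the total budget from the worst cost-per-acquisition source to the best one. It also assumes the fraud operation scales its fake conversions with spend, so "source_c" keeps looking cheapest. All rates and names are made up for illustration.

```python
REAL_RATE = {"source_a": 0.020, "source_b": 0.018, "source_c": 0.010}  # real conversions per dollar
FAKE_RATE = {"source_a": 0.0, "source_b": 0.0, "source_c": 0.020}      # injected fake conversions per dollar


def reallocate(budget: dict[str, float], reported: dict[str, float], step: float = 0.1) -> dict[str, float]:
    """Hypothetical optimizer: shift `step` of total budget from worst to best reported CPA."""
    total = sum(budget.values())
    cpa = {k: budget[k] / reported[k] if reported[k] > 0 else float("inf") for k in budget}
    best = min(cpa, key=cpa.get)
    donors = [k for k in budget if budget[k] > 0 and k != best]
    if not donors:
        return dict(budget)
    worst = max(donors, key=cpa.get)
    moved = min(step * total, budget[worst])
    new = dict(budget)
    new[worst] -= moved
    new[best] += moved
    return new


def reported_rate(source: str, inject: bool) -> float:
    return REAL_RATE[source] + (FAKE_RATE[source] if inject else 0.0)


def simulate(rounds: int, inject: bool = True):
    """Run the loop; return final budget, real conversions, and reported conversions."""
    budget = {k: 1000.0 for k in REAL_RATE}
    for _ in range(rounds):
        reported = {k: budget[k] * reported_rate(k, inject) for k in budget}
        budget = reallocate(budget, reported)
    real = sum(budget[k] * REAL_RATE[k] for k in budget)
    reported_total = sum(budget[k] * reported_rate(k, inject) for k in budget)
    return budget, real, reported_total


for rounds in (0, 4, 8):
    budget, real, reported = simulate(rounds)
    print(rounds, round(budget["source_c"]), round(real, 1), round(reported, 1))
```

In this toy run, source_c absorbs the entire budget within eight rounds. Reported conversions climb from about 68 to about 90 while real conversions fall from about 48 to about 30, and no step of the optimizer was attacked directly.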

3.5 Attribution Model Exploits

Attribution systems decide which interaction receives credit for a conversion. Fraudsters don’t always need to generate conversions—sometimes they just intercept the attribution signal.

Red team simulations often involve:

⮞ cookie stuffing

⮞ last-click hijacking

⮞ cross-device identity spoofing

When these attacks succeed, legitimate conversions are credited to fraudulent traffic sources. Optimization systems then reinforce the mistake.
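Last-click hijacking works because the attribution rule only looks at which click came last, not whether it was genuine. The sketch below assumes a simple last-click rule with a 7-day lookback window; the touch format and source names are hypothetical. A stuffed cookie is modeled as a recorded "click" that never actually happened, and it steals credit for a conversion that paid search earned.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Touch:
    source: str
    timestamp: int  # seconds, simplified
    kind: str       # "click" or "impression"


LOOKBACK_S = 7 * 24 * 3600  # assumed 7-day click lookback window


def last_click_credit(touches: list[Touch], conversion_ts: int, window_s: int = LOOKBACK_S) -> Optional[str]:
    """Credit the most recent click inside the lookback window, or None if there is none."""
    eligible = [
        t for t in touches
        if t.kind == "click" and 0 <= conversion_ts - t.timestamp <= window_s
    ]
    if not eligible:
        return None
    return max(eligible, key=lambda t: t.timestamp).source


journey = [
    Touch("paid_search", timestamp=1_000, kind="click"),
    Touch("display", timestamp=2_000, kind="impression"),
]
# Red-team step: a stuffed cookie registers as a "click" shortly before the purchase.
stuffed = Touch("affiliate_x", timestamp=4_900, kind="click")

print(last_click_credit(journey, conversion_ts=5_000))              # paid_search
print(last_click_credit(journey + [stuffed], conversion_ts=5_000))  # affiliate_x
```

Comparing the credited source with and without injected touches is a cheap red-team check on any attribution rule.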

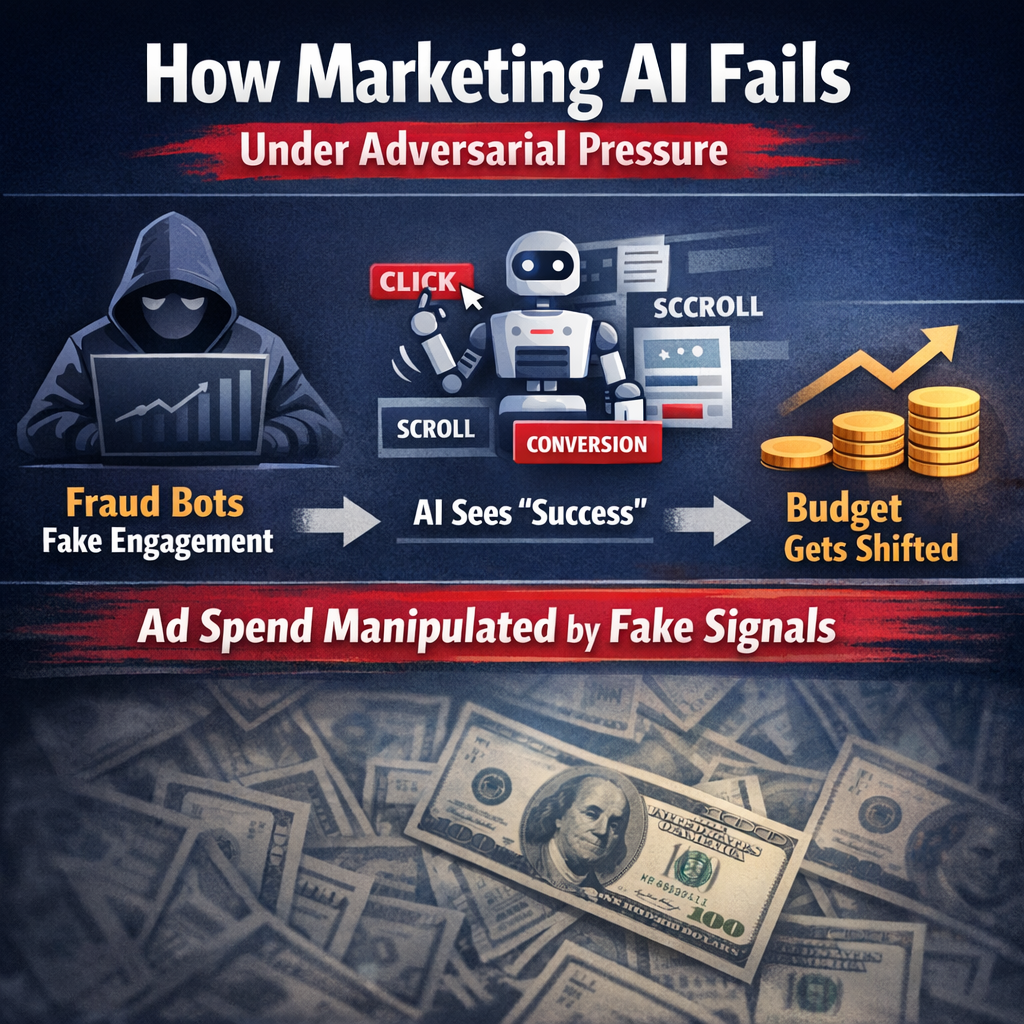

4. How Marketing AI Fails Under Adversarial Pressure

Something strange happens in a lot of automated ad campaigns.

The metrics improve.

Clicks go up. Engagement increases. The optimization system decides the campaign is performing well and shifts more budget toward the traffic source responsible. From the dashboard’s perspective, the algorithm is doing exactly what it should.

But sometimes those signals aren’t real.

Fraud operations figured this out years ago: advertising systems trust behavioral signals. If a traffic source appears active — scrolling pages, pausing on content, occasionally clicking — the platform treats it as legitimate engagement. Some bot networks are designed specifically to generate that type of activity. They don’t hammer ads with obvious automation. They behave… almost normally.

That’s enough to slip past many AI ad fraud detection pipelines.

Once those signals land inside the campaign data, the rest happens automatically. The bidding model notices higher engagement rates. The optimization engine increases exposure to that traffic source. Budget follows performance signals. Within a few hours the campaign can start allocating more spend toward the manipulated traffic.

That’s one path to large-scale ad spend manipulation.

Another weak point shows up in attribution systems. These models decide which ad interaction receives credit for a conversion. Fraud actors sometimes exploit this by triggering synthetic conversion events or intercepting attribution signals. The platform records the conversion. The optimization model reacts. Budget shifts again.

None of this necessarily looks suspicious at first glance.

Which is why AI security in AdTech has become less about filtering obvious bots and more about understanding how automated systems react to manipulated inputs. If those inputs aren’t validated carefully, subtle marketing AI vulnerabilities can push entire campaigns toward fraudulent traffic sources while performance reports still appear healthy.
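One basic form of input validation follows from the symptom in the introduction: conversions that don't line up with revenue. The sketch below reconciles platform-reported conversions per traffic source against orders the advertiser's own backend can match to that source, and flags large gaps. The function name, the 10% tolerance, and the numbers are made up for illustration.

```python
def reconcile(reported: dict[str, int], matched_orders: dict[str, int], tolerance: float = 0.1) -> list[str]:
    """Flag sources whose reported conversions exceed backend-matched orders by more than `tolerance`."""
    flagged = []
    for source, count in reported.items():
        confirmed = matched_orders.get(source, 0)
        # Share of reported conversions with no matching order in the backend.
        if count > 0 and (count - confirmed) / count > tolerance:
            flagged.append(source)
    return sorted(flagged)


reported = {"search": 120, "social": 80, "network_x": 200}
matched_orders = {"search": 115, "social": 78, "network_x": 40}

print(reconcile(reported, matched_orders))  # ['network_x']
```

A check like this does not detect fraud by itself, but it gives the optimizer's inputs an independent reference point that synthetic engagement cannot easily fake.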

5. Conclusion

Something interesting is happening inside advertising platforms right now.

For years, most conversations about ad fraud focused on traffic—bots, fake clicks, and suspicious IP ranges. But as campaign systems became more automated, the real leverage point shifted. Attackers don’t necessarily need massive botnets anymore. Sometimes, influencing a few key engagement signals is enough to change how campaign models behave.

That’s the uncomfortable part of automation.

Advertising algorithms are designed to react quickly to performance signals. When those signals improve, the system reallocates spending, adjusts bids, and expands reach. Under normal conditions that responsiveness makes campaigns more efficient. Under manipulated conditions, it can quietly redirect budget toward the wrong places.

Teams responsible for AI security in AdTech are starting to treat these systems less like marketing tools and more like critical infrastructure. The question isn’t only whether fraud exists in the traffic—it's how the models themselves respond when the inputs change.

That shift in thinking is one of the reasons AI red teaming in marketing is gaining attention. When organizations deliberately probe automated campaign systems, subtle marketing AI vulnerabilities tend to surface in ways traditional testing rarely reveals.

And as advertising platforms become more autonomous, understanding those behaviors will likely become just as important as detecting the fraud itself.

“When AI trusts the data blindly, attackers don’t need to break the system—they just need to guide it.”
