AI Red Teaming in Payments: Securing Transaction AI and Anti-Fraud Engines


Key Takeaways

AI red teaming in payments focuses on how transaction AI fails under real adversarial pressure.

Traditional fraud model validation cannot detect adaptive AI-driven fraud strategies.

Payment fraud attacks are designed to appear normal, not suspicious.

AI security in payment systems requires continuous adversarial testing, not one-time reviews.

Proactive AI red teaming helps payment platforms prevent silent failures before financial and regulatory impact occurs.

1. Introduction

Swipe a card. Tap a phone. Click “Pay now.” Behind that tiny moment sits a stack of models making a judgment call.

Payment platforms rely on machine learning to decide whether a transaction should go through, be blocked, or be pushed into manual review. The decision happens in milliseconds. The model checks location patterns, device fingerprints, purchase history, merchant category, and velocity signals—dozens or sometimes hundreds of variables. Multiply that by millions of transactions every hour, and you start to see why these systems matter so much.

But here’s the uncomfortable reality: once a fraud model is live, attackers start studying it.

Fraud groups don’t just blast random stolen cards anymore. They probe systems carefully. Small transactions first. Different merchants. Slightly different device setups. If a transaction passes, they repeat it with minor adjustments. Gradually, they figure out where the system’s tolerance line sits. When they feel confident, they scale up.

This pattern has appeared repeatedly in digital commerce over the past few years. Synthetic identity rings, for instance, often run “rehearsal transactions”—small purchases meant purely to observe fraud model reactions. Once those test transactions succeed, larger payments follow. Investigators reviewing these campaigns often find the same thing: the defenses were there, but the attackers learned how to operate around them.

Situations like this are exactly why payment AI security is becoming a strategic issue for payment companies. Fraud models that perform well in controlled environments may behave very differently when adversaries start interacting with them directly. Weak points in payment fraud detection AI rarely show up in standard testing; they surface when attackers actively search for them.

This is where AI red teaming in payments enters the picture. Instead of waiting for criminals to map those weaknesses, security teams deliberately simulate the same adversarial behaviors. The goal is simple: expose the cracks before someone else does.

2. How AI Powers Modern Payments

A modern payment system has only a few milliseconds to make a decision. When someone taps a card or confirms an online checkout, several AI systems quietly jump into action. Their job is simple in theory: decide whether the payment looks legitimate or suspicious. In practice, that decision depends on analyzing a large set of behavioral and transactional signals almost instantly.

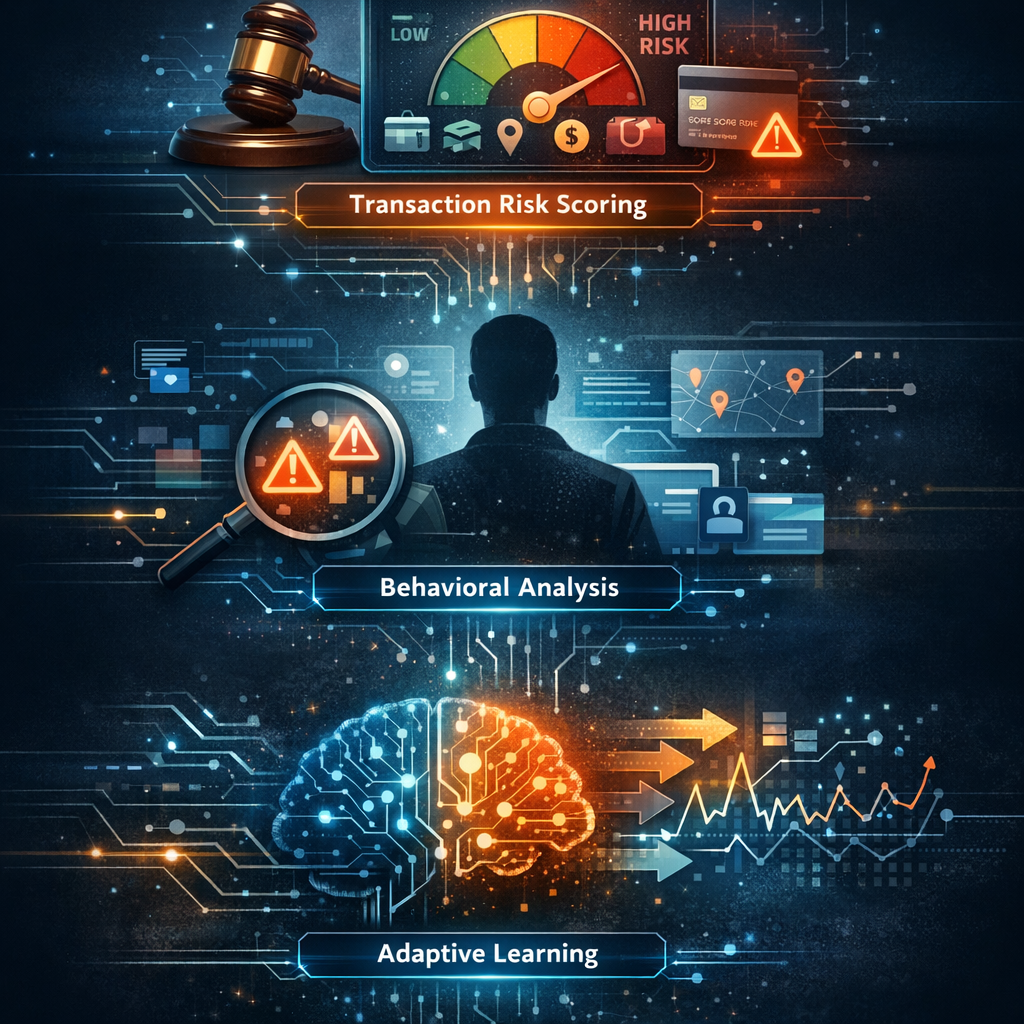

The first system most payment platforms rely on is a transaction risk scoring model. Think of it as a fast judge. The model reviews information such as the customer’s spending history, merchant category, device identity, location patterns, and purchase size. All of these signals combine to produce a risk score. If the score crosses a certain threshold, the system may block the payment or send it for additional verification.
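As a rough illustration of how signals combine into a score and a threshold turns that score into a decision, here is a minimal sketch. The `Transaction` fields, the weights, and the `review_at` / `block_at` cut-offs are all invented for this example; a production model would learn its weights from data and use far more signals.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    # Hypothetical feature set, chosen only for illustration.
    amount: float
    new_device: bool
    foreign_location: bool
    txns_last_hour: int


def risk_score(txn: Transaction) -> float:
    """Toy additive score in [0, 1]. Weights are made up, not taken from any real model."""
    score = min(txn.amount / 2000.0, 1.0) * 0.4        # purchase size
    score += 0.25 if txn.new_device else 0.0           # device identity
    score += 0.2 if txn.foreign_location else 0.0      # location pattern
    score += min(txn.txns_last_hour / 10.0, 1.0) * 0.15  # velocity
    return score


def decide(score: float, review_at: float = 0.4, block_at: float = 0.7) -> str:
    """Map a score to one of three outcomes using two assumed thresholds."""
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "review"
    return "approve"
```

Even in this toy version, the weakness that red teams look for is visible: anyone who can infer the cut-offs can shape a transaction to land just under them.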

Another layer focuses on behavioral patterns. These models watch how a user normally interacts with their account. Something as small as login timing, device switching, or unusual purchase sequences can signal suspicious activity. When these behavioral signals are combined with payment fraud detection AI, platforms can catch account takeovers and automated fraud attempts that might otherwise look normal at first glance.

There is also a third layer that often receives less attention: adaptive learning systems. Fraud models cannot remain static because fraud tactics evolve quickly. Payment platforms periodically retrain models using recent transaction data so the system can recognize new fraud behaviors.

But this constant adaptation comes with its own challenge. As transaction patterns shift (new merchants, new payment channels, changing customer behavior), models can slowly lose accuracy. This gradual shift is known as AI model drift in payments, and it can quietly weaken detection systems if it goes unnoticed.

All these layers together form the backbone of payment AI security. Yet each layer also introduces potential anti-fraud AI vulnerabilities, especially when attackers deliberately test the system’s boundaries.

3. AI Red Teaming Use Cases in Payments

People sometimes imagine fraud systems as a kind of digital fortress. In reality, they behave more like living systems that react to whatever data reaches them. If someone keeps interacting with that system long enough, patterns eventually show up. Fraud groups have understood this for years. They treat payment platforms almost like laboratories—running small tests, watching the results, then adjusting the next attempt.

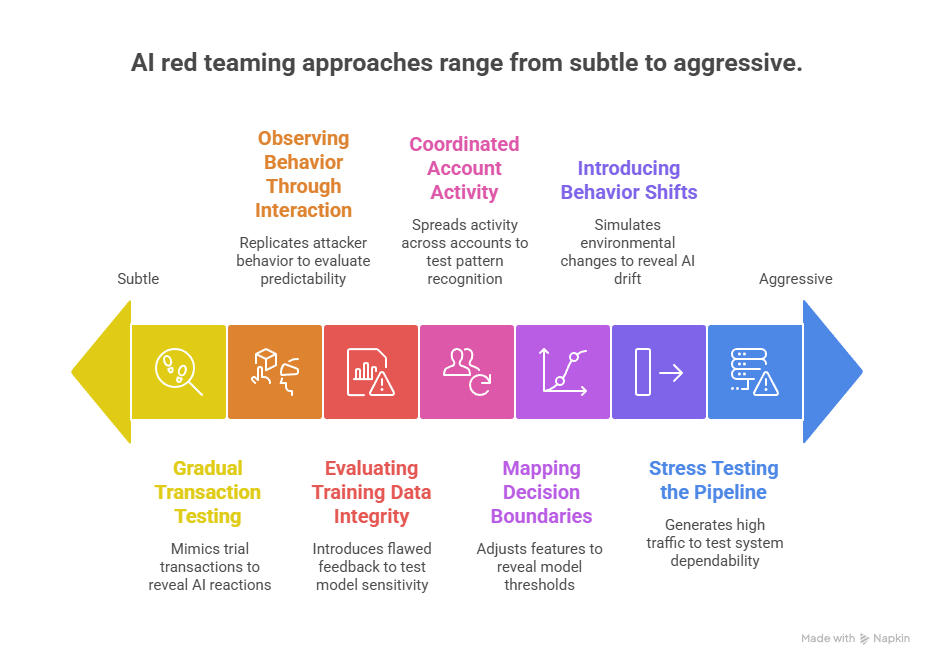

Teams performing AI red teaming in payments take a similar approach, except the experiments happen inside controlled environments. Instead of trusting that a fraud model will always behave correctly, they push it from different angles and observe how it responds.

3.1 Gradual Transaction Testing

A common fraud tactic begins with what investigators often call “trial transactions.” These are small purchases made simply to see whether a card or account will pass through the system. If nothing stops the payment, attackers repeat the process with slightly larger amounts.

Red teams mimic this pattern deliberately. They send a series of low-value payments, sometimes only a few dollars. After that, the transaction value slowly increases or the purchase moves to a different merchant. Timing might change as well—some payments occur seconds apart, others minutes or hours later.

Watching these patterns unfold can reveal how payment fraud detection AI reacts to subtle changes in behavior. In some systems the model heavily weights transaction value. In others, location signals matter more. Discovering which signals dominate helps teams understand where attackers might find room to maneuver.
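A red team might script that escalation rather than run it by hand. The sketch below only builds the probe schedule (amount, merchant, and timing for each step); it does not submit anything. The function name, the growth factor, and the three gap sizes are assumptions made for illustration.

```python
import random
from typing import Dict, List


def build_probe_schedule(
    start_amount: float,
    growth: float,
    steps: int,
    merchants: List[str],
    seed: int = 0,
) -> List[Dict]:
    """Return a list of test payments that start small and grow each step."""
    rng = random.Random(seed)  # seeded so a test run can be reproduced
    schedule = []
    amount = start_amount
    clock = 0.0
    for i in range(steps):
        schedule.append({
            "step": i,
            "amount": round(amount, 2),
            "merchant": merchants[i % len(merchants)],  # rotate merchants
            "offset_seconds": round(clock, 1),
        })
        amount *= growth
        # Vary the gap: seconds, minutes, or an hour apart.
        clock += rng.choice([5, 90, 3600])
    return schedule
```

Replaying such a schedule against a sandboxed scoring service, and logging the decision at each step, shows at which amount or merchant change the outcome first flips.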

3.2 Mapping Decision Boundaries

Machine-learning models don’t explain their thinking directly. Instead, their behavior has to be inferred from outcomes. A payment is approved, another is declined, and eventually a rough picture begins to form.

Red teams explore this by adjusting one transaction feature at a time—perhaps the purchase amount, the merchant type, or the device used during checkout. The difference between two nearly identical transactions can show where the model’s decision threshold sits.

If those thresholds become predictable, attackers can operate just beneath them. That kind of probing is one way adversarial attacks on fraud detection systems take shape in real payment environments.
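When only one feature is varied, locating the threshold can be as simple as a bisection over approve/decline outcomes. The sketch below assumes a black-box callable `is_approved(amount)` (a stand-in for a sandboxed scoring endpoint, not a real API) and assumes approval is monotone in amount over the searched range.

```python
from typing import Callable


def find_amount_threshold(
    is_approved: Callable[[float], bool],
    low: float,
    high: float,
    tolerance: float = 1.0,
) -> float:
    """Bisect on purchase amount while every other feature is held fixed.

    Returns the largest approved amount found, within `tolerance` of the
    point where decisions flip. Requires approve at `low`, decline at `high`.
    """
    if not is_approved(low) or is_approved(high):
        raise ValueError("need an approval at `low` and a decline at `high`")
    while high - low > tolerance:
        mid = (low + high) / 2
        if is_approved(mid):
            low = mid   # still approved: the boundary is higher
        else:
            high = mid  # declined: the boundary is lower
    return low
```

A range of several thousand dollars narrows to within a dollar in about a dozen queries, which is why a predictable, single-feature threshold is so cheap for an attacker to find.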

3.3 Introducing Shifts in Transaction Behavior

Payment ecosystems evolve quickly. New payment apps appear, cross-border commerce grows, and seasonal events can double transaction volumes almost overnight. Models trained on older data sometimes struggle when those changes occur.

Red teams recreate those shifts by modifying transaction distributions in testing environments. For instance, they might simulate a surge in online retail purchases or generate patterns similar to new digital wallet usage.

The purpose isn’t to break the model outright. Instead, the exercise reveals how the system reacts when the environment moves away from what it originally learned. In many cases this highlights the early stages of AI model drift in payments, where detection performance gradually weakens.
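One common way to put a number on such a shift is the population stability index (PSI), which compares how a feature is distributed in a baseline sample against a recent one. The article does not prescribe a metric, so treat this as one option among several; the bin edges and the `eps` floor are assumptions.

```python
import math
from typing import List, Sequence


def psi(
    expected: Sequence[float],
    actual: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """Population stability index of `actual` against `expected`.

    `edges` are ascending bin boundaries; values fall into len(edges) + 1 bins.
    Empty bins are floored at `eps` so the logarithm stays defined.
    """
    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1  # index of the bin v falls in
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but that cut-off is a convention rather than a guarantee. Teams usually calibrate it against their own history.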

3.4 Coordinated Account Activity

Large fraud operations rarely rely on a single account. Instead, activity is spread across many accounts so that each individual payment appears ordinary.

To evaluate this risk, red teams run coordinated scenarios. Several accounts might perform similar purchases across different merchants at roughly the same time. Some accounts start with harmless purchases before attempting larger transactions later.

These exercises show whether detection tools analyze transactions individually or recognize patterns across many accounts.
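A simple way to check the cross-account view is to group test transactions by time window and rounded amount, then count how many distinct accounts land in the same group. This is a deliberately crude sketch: the tuple layout, the window size, and `min_accounts` are assumptions, and fixed windows will miss clusters that straddle a boundary.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_coordinated_clusters(
    txns: Iterable[Tuple[str, float, float]],
    window_seconds: int = 300,
    min_accounts: int = 5,
) -> Dict[Tuple[int, int], List[str]]:
    """Group (account_id, timestamp_seconds, amount) tuples into buckets.

    A bucket is keyed by (time window index, amount rounded to whole units).
    Buckets touched by at least `min_accounts` distinct accounts are returned.
    """
    buckets: Dict[Tuple[int, int], Set[str]] = defaultdict(set)
    for account_id, ts, amount in txns:
        key = (int(ts // window_seconds), int(round(amount)))
        buckets[key].add(account_id)
    return {k: sorted(v) for k, v in buckets.items() if len(v) >= min_accounts}
```

If a red-team scenario with a dozen accounts making near-identical purchases produces no alert, while a check like this flags it immediately, the detection stack is probably scoring payments in isolation.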

3.5 Evaluating Training Data Integrity

Fraud detection models learn from historical transaction data. If that data contains mistakes—incorrect fraud labels, delayed confirmations, or incomplete investigation results—the model may absorb misleading signals.

Red teams sometimes test these conditions in sandbox environments by introducing flawed feedback into training pipelines. The goal is to see how sensitive the model is to inaccurate information.

Situations like this often expose deeper anti-fraud AI vulnerabilities, especially in systems that retrain models frequently.
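To make the effect of flawed feedback concrete, the toy below "trains" the simplest possible model, a single amount cut-off, and then retrains it after a band of fraud labels has been flipped to legitimate. Both functions are hypothetical stand-ins; a real pipeline would retrain the actual model on a poisoned copy of its training set inside a sandbox.

```python
from typing import List, Sequence


def fit_threshold(amounts: Sequence[float], labels: Sequence[bool]) -> float:
    """Pick the cut-off t maximizing agreement between (amount >= t) and the labels.

    Ties go to the lowest candidate cut-off.
    """
    best_t, best_correct = None, -1
    for t in sorted(set(amounts)):
        correct = sum((a >= t) == y for a, y in zip(amounts, labels))
        if correct > best_correct:
            best_t, best_correct = t, correct
    return best_t


def mislabel_band(
    amounts: Sequence[float], labels: Sequence[bool], low: float, high: float
) -> List[bool]:
    """Simulate flawed feedback: fraud in [low, high) is recorded as legitimate."""
    return [False if (y and low <= a < high) else y for a, y in zip(amounts, labels)]
```

On a clean toy set where everything from 500 upward is fraud, the learned cut-off is 500. After mislabeling the 500 to 800 band it moves to 800, which means the retrained model now approves exactly the range that was mislabeled.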

3.6 Observing Behavior Through Repeated Interaction

Attackers don’t need access to the internal model to learn about it. By repeatedly interacting with a payment platform, they can observe which transactions succeed and which fail.

Red teams replicate this behavior in controlled testing. A sequence of similar payments is submitted with small variations in device information, purchase value, or merchant category. Over time, the system’s responses begin to reveal patterns.

Those patterns help security teams evaluate how predictable their payment AI security controls might appear from an attacker’s perspective.

3.7 Stress Testing the Payment Pipeline

Payment systems sometimes behave differently when traffic suddenly increases. Think about large shopping days—Black Friday, festival sales, big online launches. Transaction requests can jump dramatically within minutes. Every request still needs to be checked, scored, and approved almost instantly.

Red teams recreate those busy moments inside testing environments. They generate large numbers of payment requests within a short time span and then watch how the system reacts. The focus is not on crashing the platform. Instead, analysts observe whether the fraud controls respond the same way they do during normal traffic.

Several things are monitored during these exercises. Response time is one. If the system slows down, some checks may be simplified so that customers are not kept waiting. Another point of attention is consistency. A transaction that would normally be flagged should still be flagged, even when the system is handling thousands of requests.

Running these simulations helps security teams understand whether their payment AI security controls remain dependable when activity spikes. If detection behavior changes during busy periods, attackers might try to take advantage of those windows.
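The consistency check can be expressed as a replay: score the same transactions under a normal latency budget and under a tighter peak-load budget, then list the ones whose outcome changes. The budget mechanism below is a hypothetical model of "some checks may be simplified", not a description of any particular platform.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Check = Tuple[float, Callable[[Dict], float]]  # (cost in ms, scoring function)


def score_with_budget(txn: Dict, checks: Sequence[Check], budget_ms: float) -> float:
    """Run checks in order until the latency budget is spent; the rest are skipped."""
    spent, score = 0.0, 0.0
    for cost_ms, check in checks:
        if spent + cost_ms > budget_ms:
            break
        spent += cost_ms
        score += check(txn)
    return score


def flag_mismatches(
    txns: Sequence[Dict],
    checks: Sequence[Check],
    normal_budget_ms: float,
    peak_budget_ms: float,
    threshold: float,
) -> List[Dict]:
    """Return transactions flagged under one budget but not under the other."""
    mismatches = []
    for txn in txns:
        normal = score_with_budget(txn, checks, normal_budget_ms) >= threshold
        peak = score_with_budget(txn, checks, peak_budget_ms) >= threshold
        if normal != peak:
            mismatches.append(txn)
    return mismatches
```

Any transaction this replay returns is one that would have been flagged on a quiet day but not during the spike, which is the window an attacker would aim for.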

4. How Payment AI Fails Under Adversarial Pressure

Fraud systems often look reliable during internal testing. The environment is controlled, the data is familiar, and the system behaves exactly as expected. Problems usually appear later—when real attackers begin interacting with the system repeatedly.

Fraud groups rarely attempt one large transaction and hope for the best. They experiment first. One payment fails. Another succeeds. A few more attempts reveal how strict the system really is. After enough attempts, patterns become visible, and attackers begin shaping their activity around those patterns. Situations like this are where weaknesses in payment AI security tend to surface.

Attack Pattern 1: Slow Fraud Evasion

Why do some fraudulent transactions pass through detection systems?

Attackers often begin with very small payments. These are sometimes called test purchases. The intention is simply to see whether the system allows the transaction.

If those small payments succeed, the next step is gradual escalation. The amount increases slightly, the merchant changes, or the purchase timing shifts. Instead of appearing suspicious, the activity looks similar to normal customer behavior.

Fraud models sometimes struggle with this type of gradual change. When a system depends too heavily on a few signals, such as transaction amount or location, attackers can learn how to operate around those signals. This is one situation where gaps inside payment fraud detection AI may appear.

Attack Pattern 2: Coordinated Activity Across Accounts

Why can coordinated fraud campaigns avoid detection?

Many fraud detection tools examine each payment individually. Organized fraud groups take advantage of this by spreading activity across several accounts or cards.

A group may run dozens of small transactions at the same time using different accounts. Each payment appears harmless by itself, but together they form a clear pattern of abuse. Because the activity is distributed, traditional detection rules may not recognize it immediately.

When attackers study system behavior this way, they sometimes discover opportunities for adversarial attacks on fraud detection, where transaction patterns are intentionally shaped to avoid triggering alerts.

Attack Pattern 3: Detection Accuracy Weakening Over Time

Why do fraud models sometimes lose effectiveness?

Payment environments change constantly. Shopping behavior evolves, new merchants appear, and new payment methods become popular. A system trained on older transaction patterns may slowly become less effective when these changes occur.

Security teams sometimes notice this during long-term performance reviews. Transactions that would once have triggered alerts may begin passing through without warning.

This slow shift is commonly linked to AI model drift in payments, where the system’s understanding of normal activity gradually moves away from current transaction behavior.

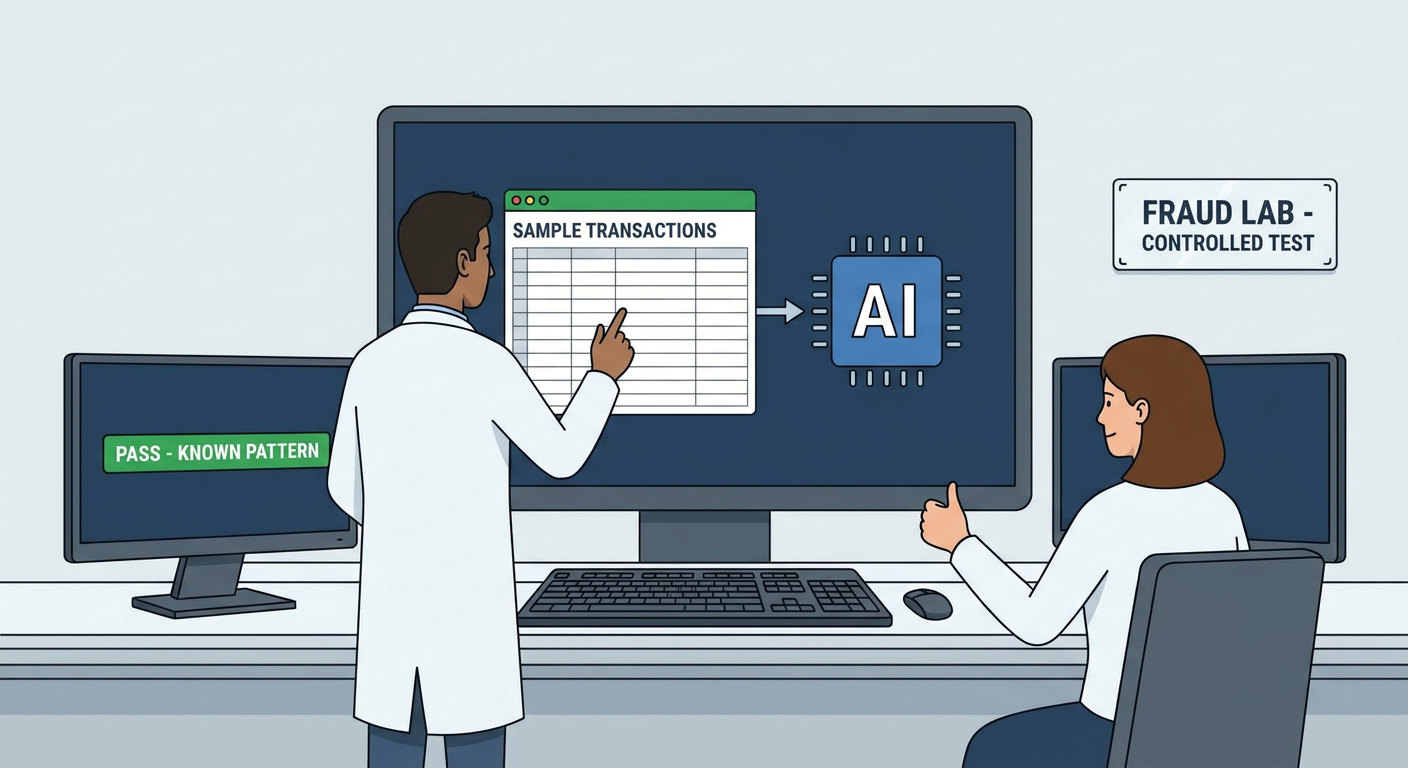

5. Why Traditional Testing Misses These Vulnerabilities

Fraud detection systems are usually tested in controlled environments. Engineers run sample transactions through the system, fraud teams check how the model reacts, and analysts review the results. If the system correctly identifies suspicious activity in those tests, the model is considered ready for deployment.

The problem appears later—when real people begin interacting with the system in ways the tests never anticipated.

Most traditional testing relies heavily on historical data. The system is evaluated using past fraud examples, which means the test mainly checks whether the model can recognize patterns that are already known. Fraud groups, however, rarely repeat those patterns exactly. They adjust small details—purchase timing, merchant categories, or transaction values—until the activity begins to resemble normal customer behavior.

Another issue comes from how transactions are examined during testing. Many tests focus on individual payments. A single transaction is evaluated to see whether it should be approved or declined. In real payment environments, fraud often develops slowly across many accounts and multiple merchants. When activity is spread out in this way, the broader pattern can remain hidden.

Payment ecosystems also change constantly. Shopping behavior evolves, new payment channels appear, and spending patterns shift across regions. Testing environments may not always capture these changes. When that happens, anti-fraud AI vulnerabilities can remain unnoticed.

For this reason, many organizations now explore approaches like AI red teaming in payments, where systems are examined under conditions that resemble real fraud attempts rather than predictable test scenarios.

6. Implications for Payment Organizations

Payment companies already know that fraud never stays still. The moment a defense becomes predictable, someone eventually finds a way around it. That is especially true for modern payment platforms, where automated systems decide whether a transaction moves forward or stops.

Because of that, simply deploying a detection model is not enough. Teams need to keep watching how the system behaves once it is running in production. If approval patterns start shifting or certain types of transactions suddenly pass more often than expected, it can signal that the system is reacting differently to current payment activity.
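One lightweight way to watch for shifting approval patterns is to compare the approval rate in a recent window against a baseline using a two-proportion z-score. The function name and any alert threshold are assumptions for illustration. In practice this would be tracked per segment (merchant category, channel, region) rather than globally.

```python
import math


def approval_rate_shift(
    base_approved: int, base_total: int, recent_approved: int, recent_total: int
) -> float:
    """Two-proportion z-score of the recent approval rate against the baseline.

    Positive values mean transactions are being approved more often than before.
    """
    p_base = base_approved / base_total
    p_recent = recent_approved / recent_total
    pooled = (base_approved + recent_approved) / (base_total + recent_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_total + 1 / recent_total))
    return (p_recent - p_base) / se if se > 0 else 0.0
```

A large positive score for one transaction type does not prove fraud, but it is a reasonable trigger for a closer look at what changed.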

Another useful step is testing systems under situations that rarely appear in normal checks. For example, what happens if many accounts attempt similar purchases at nearly the same time? Or when transaction activity suddenly increases during large online sales events? Running these kinds of exercises can reveal problems that routine testing never exposes.

Data quality also matters more than many teams expect. Fraud systems learn from past transaction records and investigation results. When those records are incomplete or delayed, the system can slowly begin relying on inaccurate signals. Over time, this may lead to hidden anti-fraud AI vulnerabilities that are difficult to notice during ordinary reviews.

Some organizations deal with this by running structured security exercises where internal teams attempt to bypass fraud controls in controlled environments. Efforts like AI red teaming in payments allow companies to see how their defenses behave when someone is actively trying to get around them. These exercises provide a clearer picture of how resilient payment AI security controls are in real-world conditions.

7. Conclusion

Payment systems run quietly in the background of everyday commerce. A customer taps a card or confirms an online purchase, and within moments the transaction is either accepted or blocked. Most people never see the layers of checks that happen in that brief window. When those checks work well, fraudulent activity is stopped without interrupting normal payments.

The challenge is that fraud does not follow a script. People looking for weaknesses in payment platforms usually start small. They try a low-value transaction, watch the outcome, and then try something slightly different. Sometimes the merchant changes. Sometimes the device changes. Over time these repeated attempts can reveal how a system reacts. In certain situations, those experiments expose gaps inside payment fraud detection AI.

That is why payment companies cannot treat fraud detection as a set-and-forget system. Transaction behavior shifts constantly. Shopping habits change, new merchants appear, and new payment channels become common. If detection systems are not reviewed regularly, they can slowly fall out of step with real payment activity.

To deal with this, many organizations run controlled security exercises where internal teams deliberately test how payment systems respond to unusual transaction patterns. These activities are often connected to AI red teaming in payments, where specialists interact with the system in ways that resemble real fraud behavior. The purpose is simple: learn how the system reacts before someone outside the organization does. Findings from these exercises help teams strengthen payment AI security and improve how payment platforms respond when suspicious activity appears.

“Payment AI doesn’t fail when it’s inaccurate; it fails when attackers learn how to stay invisible.”
