AI Red Teaming in Telecommunications: Protecting AI-Driven Network and Customer Systems

.png)

Key Takeaways

🢒 AI red teaming in telecommunications focuses on how AI systems fail under misuse, not just how they perform.

🢒 Traditional validation cannot uncover adversarial and behavioral AI risks in telecom environments.

🢒 High-risk telecom AI systems must be tested using realistic adversarial scenarios.

🢒 Silent AI failures create a larger business impact than visible outages.

🢒 Continuous AI red teaming is essential for scalable and resilient telecom AI security.

1. Introduction

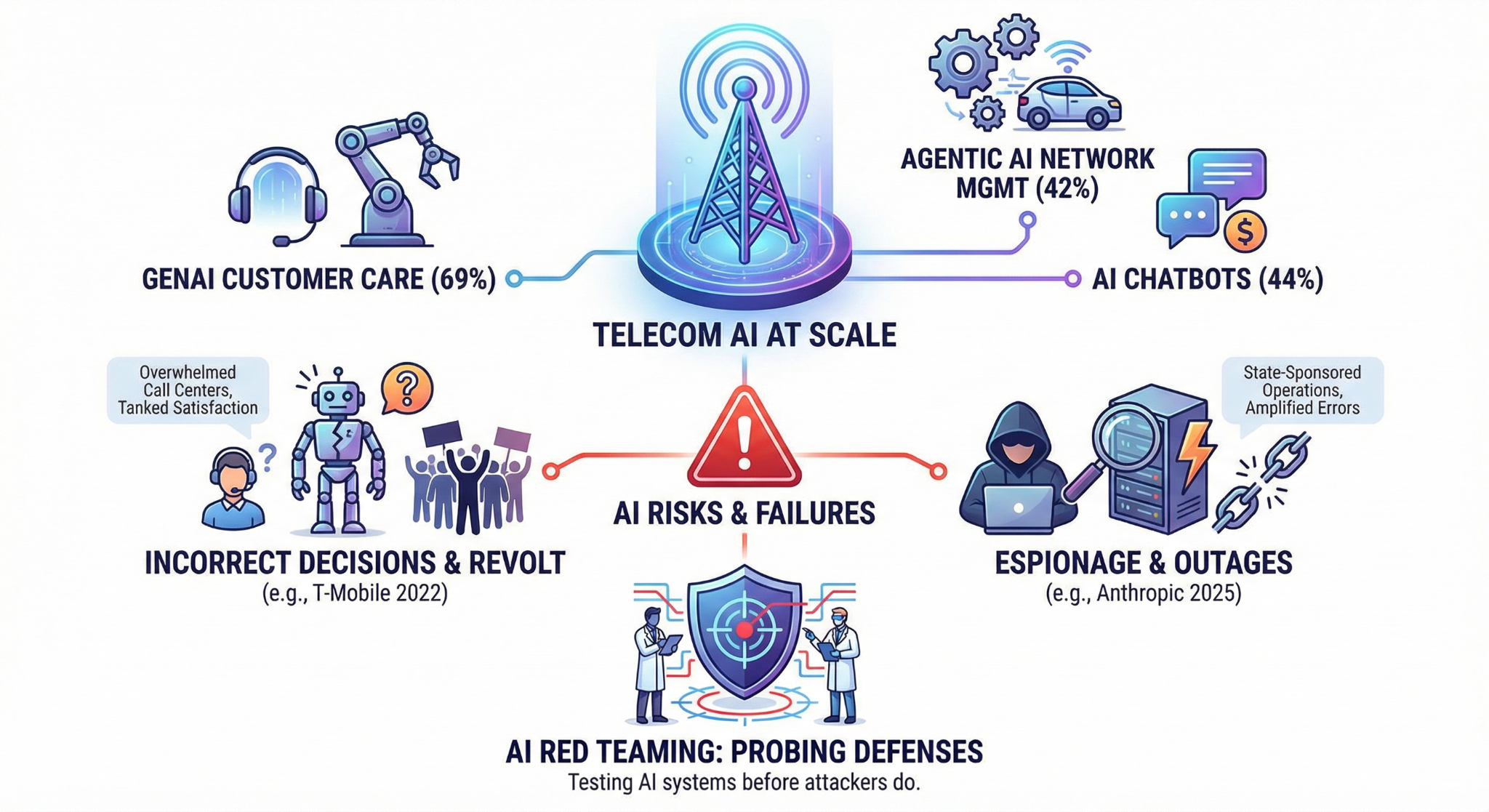

Telecom operators are running AI at a scale most industries can't match. Generative AI is live in customer care for 69% of telecoms. 42% have deployed agentic AI for autonomous network management. And 44% use AI-powered chatbots that handle account changes, billing, and troubleshooting without a human ever stepping in. These systems aren't experimental, they're making real decisions about real subscribers, millions of times a day.

But what happens when those AI systems start making the wrong decisions, and nobody catches it until customers revolt?

In 2022, T-Mobile's AI-driven customer service system started delivering incorrect and irrelevant responses due to a flaw in its algorithm. The failure overwhelmed human call centers, tanked customer satisfaction, and forced a reactive scramble to fix what should have been caught in testing. And Kaspersky's 2025 telecom security bulletin warned that AI-assisted network management is already amplifying configuration errors — automation acting on manipulated or noisy data can trigger outages operators didn't see coming.

The risks go deeper than broken chatbots. In 2025, Anthropic disclosed it had detected and disrupted the use of AI in espionage operations targeting critical telecommunications infrastructure, activity consistent with Chinese state-sponsored operations. When adversaries are using AI to attack telecom, testing those AI defenses isn't optional.

That's the core purpose of AI red teaming in telecommunications, probing the AI systems telecom companies depend on before attackers do.

2. How AI Powers Telecommunications

Telecom AI touches almost everything a subscriber interacts with. Three systems carry the heaviest load, and the highest risk.

Network Optimization AI

Machine learning models now manage traffic routing, predict congestion, and handle 5G network slicing without waiting for an engineer to sign off. AT&T's system processes 15 million network alarms daily. Verizon uses Google Cloud AI for predictive maintenance that's cut network downtime by 25%. This stuff runs at millisecond speed, which is great until the model gets fed bad data. At that point, the same automation that keeps networks stable can just as easily take them down.

Fraud Detection AI

The industry lost $38.95 billion to fraud in 2023. AI is how carriers fight back. These systems scan call patterns, SIM activity, and billing anomalies to catch threats as they emerge. Bharti Airtel's anti-spam engine flags 8 billion spam calls and identifies nearly 1 million spammers daily. 70% of telecom operators now rely on AI for fraud prevention. But here's the problem, these models learn from historical data. Manipulate the training data, and the model starts ignoring the threats it was built to catch.

Customer Service AI

Chatbots handle over 60% of customer inquiries at leading telecom firms. Vodafone's TOBi chatbot improved customer "experience" by 68%. These bots manage account changes -- billing disputes, and troubleshooting, all while pulling from live subscriber databases. Every single conversation is a direct line to sensitive data, making each one a potential entry point for AI prompt injection telecom attacks.

3. AI Red Teaming Use Cases in Telecommunications

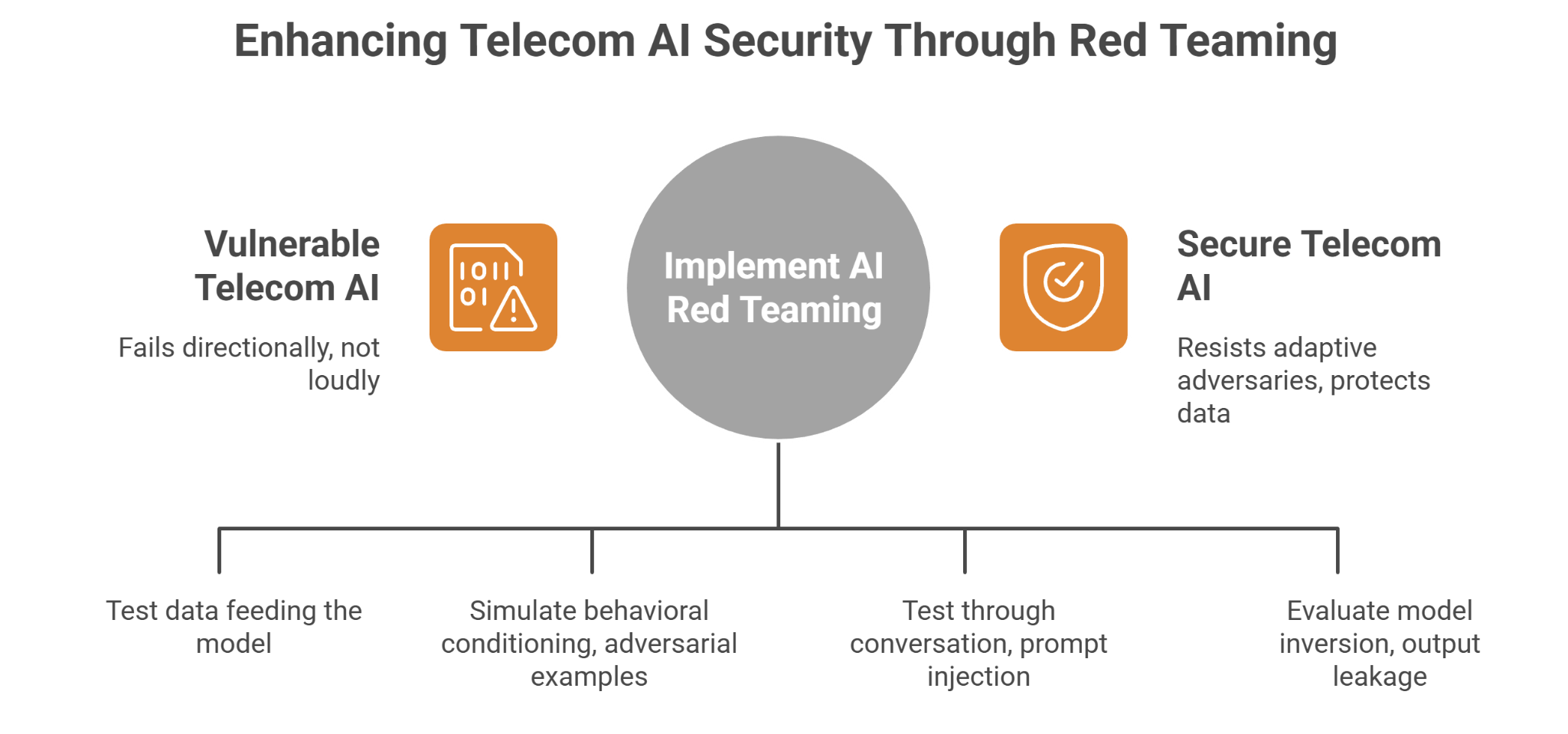

Telecom AI doesn’t fail loudly. It fails directionally. Slightly wrong routing. Slightly delayed fraud flags. Slightly over-permissive customer bots. That’s what makes red teaming necessary.

Here’s what actually gets tested.

3.1 Manipulating Network Optimization AI Without Touching the Core

Modern carriers run self-optimizing networks. Traffic rerouting, congestion prediction, 5G slicing — all automated.

Red teams don’t attack the router. They test the data feeding the model.

In one common scenario, we simulate gradual traffic distortion. Not spikes — those get flagged. Instead, low-amplitude anomalies distributed across cells. The goal is simple: can the model be nudged into reallocating capacity inefficiently? Can it be trained to normalize abnormal latency?

We also test telemetry integrity. If upstream monitoring data is partially corrupted — say, synthetic congestion signals injected into analytics feeds — does the AI question it, or act immediately?

The uncomfortable finding: most optimization models assume telemetry is clean. They validate performance, not intent. That’s where AI vulnerabilities in telecom begin.

3.2 Teaching Fraud Models to Ignore Fraud

Fraud detection AI is tuned for speed. It has to be. SIM swapping, international revenue share fraud, subscription abuse — decisions must happen in milliseconds.

Red teaming flips the perspective.

Instead of launching a single SIM swap attempt, we simulate behavioral conditioning. Gradual pattern shifts in call behavior. Small billing anomalies over weeks. Testing whether the model recalibrates its baseline and begins accepting what used to be suspicious.

We also test adversarial examples — inputs engineered to sit just under detection thresholds.

This is where AI model manipulation becomes real. If a fraud model updates continuously from production data, poisoning doesn’t require admin access. It requires patience.

Most carriers discover their models are excellent at detecting yesterday’s fraud. Less so at resisting adaptive adversaries.

3.3 Breaking Customer AI With Conversation Alone

Customer service bots are different. They sit directly on subscriber records — account changes, SIM reissues, billing adjustments.

Red teams test them the way attackers do: through conversation.

Multi-turn prompt injection. Escalation phrasing. Instruction override attempts disguised as troubleshooting. We try to extract internal system prompts. We test whether context windows leak previous session data.

This is where AI prompt injection telecom risks surface fast.

If a chatbot prioritizes helpfulness over constraint, it can be socially engineered through its own interface. No malware. No exploit kits. Just text.

3.4 Extracting Signal From Subscriber Intelligence Models

Churn prediction models. Usage scoring. Location behavior clustering. These systems are trained on massive volumes of IMSI, IMEI, and metadata.

Red teams evaluate model inversion and output leakage risks. Repeated querying to infer training distributions. Observing how confidence scores change when specific behavioral attributes are toggled.

The question isn’t “Is the database secure?”

It’s “What can be reconstructed from the model itself?”

This is where AI red teaming in telecommunications moves beyond infrastructure security. It treats the model as the attack surface.

4. How Telecom AI Actually Breaks Under Pressure

Telecom AI doesn’t implode. It makes confident, slightly wrong decisions. And those small errors scale fast.

Let’s look at how that happens in the real world.

4.1 When a Chatbot Becomes an Access Layer

Customer bots are wired into billing systems, SIM status tools, service configuration panels. They’re supposed to interpret intent. That’s the problem.

Attackers don’t send “hack” commands. They escalate gradually. A billing question becomes a verification request. A verification request becomes a SIM reissue query. Context builds.

Then they test boundaries:

⇀ Can the bot be persuaded to summarize internal policy?

⇀ Does it leak system prompts?

⇀ Will it override a restriction if framed as an urgent service disruption?

Under pressure, many bots prioritize helpfulness. And if the system prompt hierarchy isn’t airtight, conversational steering works.

That’s how AI prompt injection telecom risk shows up, not as malware, but as text that reshapes model behavior mid-session.

4.2 When Network AI Trusts the Wrong Signals

Self-optimizing networks are fast. Millisecond routing decisions. Automated load balancing. Predictive congestion management.

But they assume the telemetry feeding them is clean.

We’ve seen scenarios where slightly distorted latency metrics cause traffic redistribution that compounds the original issue. No dramatic spike. No obvious anomaly. Just incremental signal drift.

Automation amplifies what it believes. If the data layer is manipulated — or even just noisy — the model adapts in the wrong direction.

These kinds of AI vulnerabilities in telecom don’t look like attacks in logs. They look like the system doing its job.

That’s what makes them dangerous.

4.3 Fraud Models and the Slow Threshold Game

Fraud AI is designed to flag outliers. So attackers stop being outliers.

Instead of a sudden SIM swap, they simulate behavioral aging. Gradual pattern changes in usage. Small billing adjustments. Timing shifts.

The goal is to teach the model a new normal.

If the system retrains frequently, that conditioning effect becomes stronger. The model adapts to the manipulated baseline.

That’s practical AI model manipulation — and it doesn’t require access to source code. Just persistence.

Under adversarial pressure, telecom AI doesn’t crash. It complies. It recalibrates. It optimizes in the wrong direction.

That’s why AI red teaming in telecommunications focuses on how systems behave when someone is actively trying to steer them — not when they’re operating under clean lab conditions.

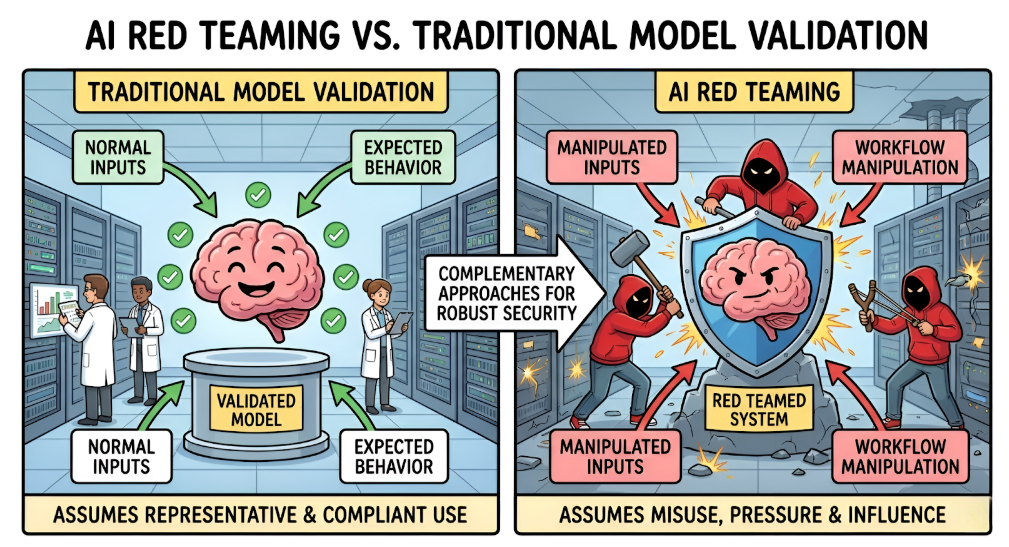

5. Why Traditional Testing Misses These AI Vulnerabilities

Most telecom security programs are still built around infrastructure: firewalls, endpoints, access control, signaling abuse. That’s necessary — but it doesn’t touch the decision layer.

Why doesn’t penetration testing catch these issues?

Because pen testing looks for entry points into systems. AI failures often happen after access is already legitimate. A chatbot responding incorrectly. A fraud model recalibrating in the wrong direction. A network optimizer acting on distorted telemetry. Nothing is “breached” in the traditional sense — the system simply behaves as designed, under manipulated conditions.

Why don’t compliance audits flag the risk?

Frameworks evaluate controls, not model behavior under adversarial input. They verify encryption, logging, role-based access. They rarely test how a model reacts to prompt injection, slow data poisoning, or boundary mapping.

Why are these telecom network AI risks growing?

Because models update. Data shifts. Deployment expands faster than oversight. Without structured AI red teaming in telecommunications, the AI layer evolves while security assumptions stay static.

Telecom has matured its network defense posture over decades. AI introduces a different kind of surface — one that learns, adapts, and can be steered.

Traditional testing wasn’t built for that.

6. Implications for Telecom Organizations

If AI is making routing, fraud, and subscriber decisions autonomously, then it must be treated as its own attack surface — not as an extension of existing network security.

First: Separate AI risk from infrastructure risk.

Firewalls don’t protect against behavioral manipulation. Create a dedicated testing stream for models powering network optimization, fraud detection, and customer systems. That means structured AI red teaming in telecommunications, not just vulnerability scans.

Second: Test continuously, not annually.

Fraud models retrain. Chatbots update. Network AI absorbs new telemetry daily. Each update shifts exposure. Static testing cycles create blind windows where AI vulnerabilities in telecom go unnoticed.

Third: Map AI deployments to formal governance.

Tie model risks to NIST AI RMF and OWASP LLM guidance. Make telecom AI governance a board-level discussion — not an innovation footnote.

Finally: Assume adversaries are already experimenting.

The question isn’t whether telecom AI can be manipulated. It’s whether you’ll discover it internally — or in production.

7. Conclusion

Telecom AI is no longer experimental. It routes traffic, blocks fraud, approves SIM changes, and shapes subscriber experience in real time. That makes it infrastructure.

The problem isn’t that these systems fail loudly. It’s that they adapt under pressure — sometimes in the wrong direction.

Traditional controls secure the perimeter. They don’t test how models behave when someone is actively trying to steer them.

That’s why AI red teaming in telecommunications isn’t a future investment. It’s operational hygiene for carriers deploying AI at scale.

Attackers will probe these systems. The only question is whether you probe them first.

“Telecom AI rarely breaks the network, it quietly breaks trust, revenue, and reliability.”

.svg)

.png)

.svg)

.png)

.png)