AI Red Teaming in Travel & Aviation: Securing Pricing, Scheduling, and Safety AI



1. Introduction

Airlines didn’t suddenly decide to become technology companies—but that’s effectively what happened. Behind every flight schedule and ticket price sits a layer of automated decision systems quietly shaping how the airline operates.

Look at ticket pricing. Fares move constantly. Sometimes multiple times within an hour. Those changes aren’t coming from a human analyst staring at spreadsheets. Algorithms react to booking patterns, remaining seats, competitor prices, and seasonal demand.

Operations planning works the same way. When storms disrupt a hub airport or a crew rotation runs late, airline networks can unravel quickly. Models now help airlines reassign aircraft, reroute passengers, and keep the schedule on track.

And then there’s aircraft health monitoring. Modern jets stream enormous volumes of sensor data during flights. Aviation safety AI systems analyze those signals and look for early warning signs, such as tiny shifts in vibration patterns or temperature changes that might indicate maintenance issues.

Most of the time, these systems work exactly as intended.

But they share one important assumption: the data feeding them is trustworthy.

That assumption doesn’t always hold. Airline pricing systems have occasionally responded to unusual demand signals by producing dramatic fare drops. Operational tools can misinterpret disruptions and create scheduling chaos if their inputs are flawed.

Those moments reveal something important. Beneath the efficiency gains sits a growing AI risk in transportation. The more airlines rely on automated decision systems, the more critical it becomes to test how those systems behave when conditions are messy, manipulated, or simply wrong.

That’s why AI red teaming in aviation is starting to attract attention. Instead of waiting for failures, organizations actively probe their systems to uncover hidden airline AI vulnerabilities before they cause real-world disruptions.

Who this matters to: airline security teams, aviation regulators, AI engineers, and operational risk leaders.

2. How AI Powers Modern Aviation

Spend a day inside an airline operations center, and one thing becomes obvious very quickly: the whole system is a puzzle that never stops moving. Aircraft arrive late. Weather shifts unexpectedly. Crews hit duty limits. A single delay in one city can quietly trigger problems across half the network.

Years ago, most of these decisions were handled by teams of planners working with spreadsheets and forecasting tools. That approach doesn’t scale well anymore. Airline networks have become too complex, and disruptions happen too quickly. Automated decision systems now assist with many of these choices.

Pricing is the most familiar example. Airlines constantly adjust fares depending on how quickly seats are selling, what competitors are charging, and how close a flight is to departure. These systems analyze booking behavior minute by minute. When something unusual appears in the data—say, an unexpected surge in searches or bookings—the model reacts immediately. In some cases, those reactions reveal subtle airline AI vulnerabilities that airlines only notice after prices behave strangely.

Operational planning is another area where automation plays a major role. AI flight scheduling systems help airlines decide which aircraft should operate each route and how crews rotate through the network. The goal isn’t perfection; it’s damage control. When disruptions occur, the system searches for the least disruptive recovery plan.

Maintenance monitoring has also changed. Aircraft engines, hydraulics, and other components generate large volumes of sensor data during flights. Aviation safety AI systems examine those signals and flag patterns that engineers might otherwise miss. Small anomalies can point to maintenance issues long before they become visible problems.

Put all of this together, and airlines gain enormous efficiency. Yet the same automation introduces a growing AI risk in transportation—especially when operational decisions depend on complex models reacting to imperfect data.

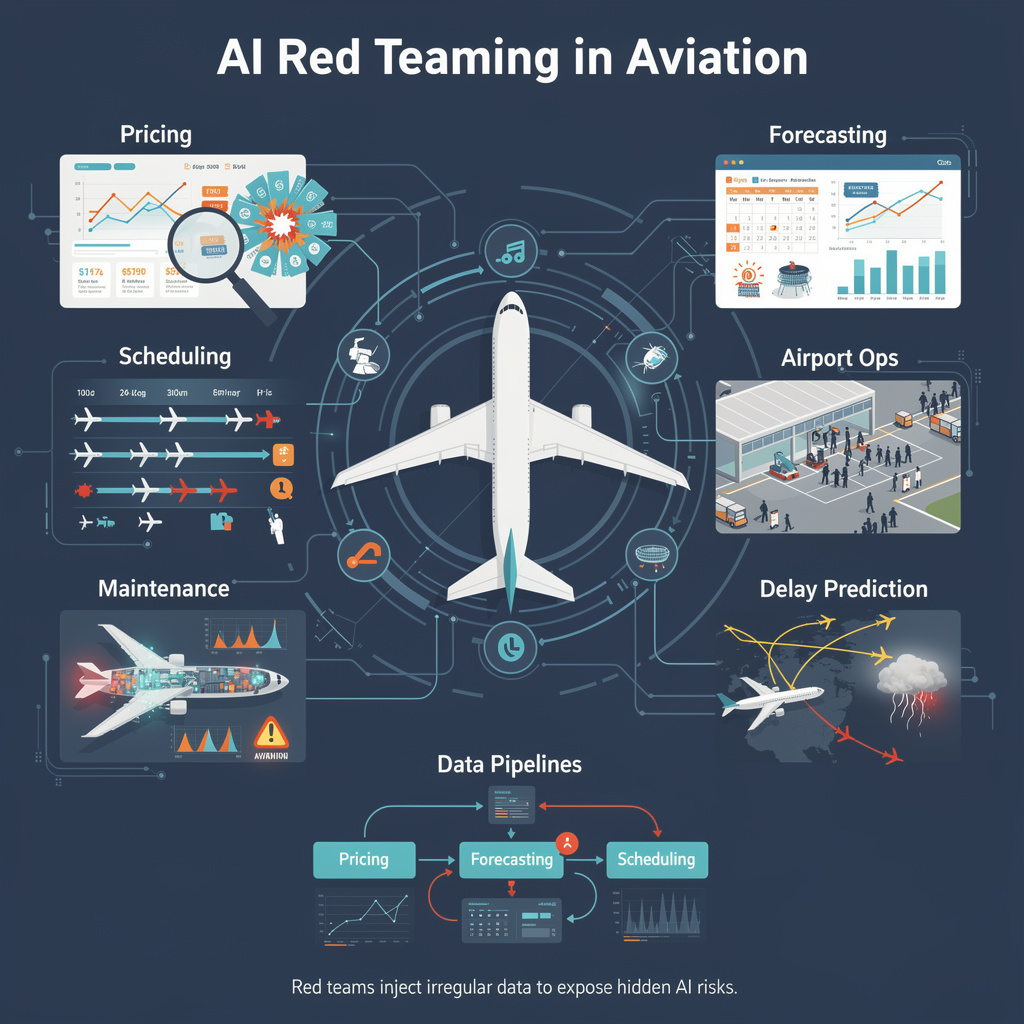

3. AI Red Teaming Use Cases in Aviation

Behind the scenes, airline operations depend on a surprising number of automated systems. Some focus on pricing decisions, others on scheduling aircraft and crews, and many monitor the health of aircraft during flight. These tools help airlines handle the sheer scale of modern aviation operations.

All of them rely on incoming data. Booking patterns, operational updates, aircraft telemetry, passenger demand forecasts, and even weather feeds constantly feed into these systems. When everything lines up correctly, the models work well.

Red teams look at the situations where that assumption breaks.

Instead of testing the system under perfect conditions, they introduce irregular inputs and watch how the models respond. Sometimes the results are harmless. Other times, the system reacts in ways the designers never expected.
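The probing loop described above can be sketched as a small perturbation harness. This is a minimal sketch under stated assumptions: the model is any function from named numeric inputs to a single output, and the harness name `run_perturbation_suite`, the toy fare model, and the 10% tolerance are all hypothetical. Real red-team tooling would run against a test copy of the production system rather than a one-line formula.

```python
from typing import Callable, Dict, List

# Hypothetical harness: `run_perturbation_suite`, `toy_fare_model` and the
# perturbations below are illustrative stand-ins, not a real airline system.
Inputs = Dict[str, float]


def run_perturbation_suite(
    model: Callable[[Inputs], float],
    baseline: Inputs,
    perturbations: Dict[str, Callable[[Inputs], Inputs]],
    tolerance: float,
) -> List[str]:
    """Run the model on the baseline and on each perturbed copy of it.
    Return the names of perturbations whose relative output change exceeds `tolerance`."""
    reference = model(baseline)
    flagged = []
    for name, perturb in perturbations.items():
        result = model(perturb(dict(baseline)))  # copy so perturbations never mutate the baseline
        change = abs(result - reference) / abs(reference)
        print(f"{name}: {reference:.2f} -> {result:.2f} ({change:.1%})")
        if change > tolerance:
            flagged.append(name)
    return flagged


def toy_fare_model(x: Inputs) -> float:
    # Illustrative only: fare rises with booking pace and with load factor.
    return 200.0 * (1 + 0.05 * x["bookings_last_hour"]) * (0.5 + x["load_factor"])


baseline = {"bookings_last_hour": 4.0, "load_factor": 0.6}
perturbations = {
    # A short burst of scripted bookings.
    "booking_burst": lambda x: {**x, "bookings_last_hour": x["bookings_last_hour"] + 20},
    # Ordinary measurement noise on load factor.
    "load_factor_noise": lambda x: {**x, "load_factor": x["load_factor"] + 0.01},
}

flagged = run_perturbation_suite(toy_fare_model, baseline, perturbations, tolerance=0.10)
print("flagged:", flagged)
```

Keeping each perturbation as a named function makes it easy to add new irregular inputs without touching the harness; the tolerance is a judgment call a team would set per system.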

3.1 Testing Pricing Algorithms for Manipulation

Airline ticket prices rarely stay fixed for long. Pricing models adjust fares throughout the day as demand shifts.

What red teams test

⮞ bursts of automated bookings on specific routes

⮞ reservations created and cancelled quickly

⮞ signals suggesting competitor airlines changed fares

⮞ unusual search activity for certain destinations

What testing reveals

Pricing systems occasionally react strongly to booking patterns that only last a few minutes. Even short bursts of activity may influence fares, which can reveal airline AI vulnerabilities in how demand signals are interpreted.
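A toy version of the short-burst test might look like the following. It assumes a hypothetical pricing rule that raises fares by 3% for every booking above an expected hourly pace, and compares a naive pace count with one that ignores reservations already cancelled. The `Booking` class, the fare rule, and all numbers are illustrative, not taken from any airline's revenue-management system.

```python
from dataclasses import dataclass
from typing import List, Optional

# Toy model for illustration only: `Booking`, `booking_pace` and the +3%-per-booking
# fare rule are assumptions, not a description of any real pricing engine.


@dataclass
class Booking:
    created_min: int                      # minute the reservation was created
    cancelled_min: Optional[int] = None   # minute it was cancelled, None if still held


def booking_pace(bookings: List[Booking], now: int, window: int, exclude_cancelled: bool) -> int:
    """Count reservations created in the last `window` minutes.
    With exclude_cancelled=True, reservations already cancelled by `now` are ignored."""
    pace = 0
    for b in bookings:
        if not (now - window < b.created_min <= now):
            continue
        if exclude_cancelled and b.cancelled_min is not None and b.cancelled_min <= now:
            continue
        pace += 1
    return pace


def fare(base: float, pace: int, expected_pace: int) -> float:
    # +3% for each booking above the expected pace, capped at +60%.
    uplift = min(max(pace - expected_pace, 0) * 0.03, 0.60)
    return round(base * (1 + uplift), 2)


# Genuine demand: 6 bookings spread across the last hour.
genuine = [Booking(created_min=m) for m in (5, 15, 25, 35, 45, 55)]
# Red-team burst: 15 scripted reservations created at minutes 50-54, each cancelled 5 minutes later.
burst = [Booking(created_min=50 + i % 5, cancelled_min=55 + i % 5) for i in range(15)]

now, window, expected = 60, 60, 6
naive = fare(300.0, booking_pace(genuine + burst, now, window, exclude_cancelled=False), expected)
filtered = fare(300.0, booking_pace(genuine + burst, now, window, exclude_cancelled=True), expected)
print(f"naive fare: {naive}, fare ignoring cancelled holds: {filtered}")
```

Excluding cancelled holds only helps once the cancellations have landed. While the scripted reservations are still live, both counts see them, which is exactly the window a red team would probe next.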

3.2 Probing Flight Scheduling Systems for Operational Disruption

Airline schedules are fragile in ways that aren’t always obvious. A small disruption early in the day can ripple through multiple flights later.

What red teams test

⮞ weather data that conflicts with airport reports

⮞ aircraft availability records that differ from maintenance systems

⮞ sudden changes in passenger load forecasts

⮞ crew availability signals that don’t match scheduling data

What testing reveals

Scheduling models often generate mathematically sensible recovery plans. The issue is that those plans sometimes assume operational conditions that aren’t realistic for airline teams on the ground.

3.3 Stress-Testing Predictive Maintenance Models

Aircraft sensors record enormous amounts of data during every flight. Engineers rely on those signals to detect maintenance problems before they become serious.

What red teams test

⮞ vibration readings that drift slightly above normal levels

⮞ irregular temperature patterns across sensors

⮞ telemetry signals arriving in unexpected sequences

⮞ modified maintenance history during simulation runs

What testing reveals

Maintenance prediction models handle stable data well. But when sensor readings become inconsistent, the system may raise alerts that engineers later determine were unnecessary.
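One way to reproduce the unnecessary-alert pattern is to feed a simple alerting rule a signal with isolated, inconsistent spikes. The sketch below compares a single-sample threshold with a rule that requires several consecutive exceedances. The limit of 1.0, the three-reading persistence rule, and the sample series are assumptions for illustration; real limits come from manufacturer and operator maintenance data.

```python
from typing import List

# Illustrative assumptions: a vibration limit of 1.0 (arbitrary units) and a
# "3 consecutive readings" persistence rule. Neither reflects a real aircraft limit.


def alerts_single_sample(readings: List[float], limit: float) -> int:
    """Raise one alert for every reading above the limit."""
    return sum(1 for r in readings if r > limit)


def alerts_persistent(readings: List[float], limit: float, k: int) -> int:
    """Raise an alert only when k consecutive readings exceed the limit
    (one alert per excursion)."""
    alerts, run = 0, 0
    for r in readings:
        run = run + 1 if r > limit else 0
        if run == k:  # fires once, when the run first reaches k
            alerts += 1
    return alerts


# Stable signal with isolated, inconsistent spikes (e.g. a flaky sensor channel).
noisy = [0.6, 0.62, 1.3, 0.61, 0.59, 1.25, 0.6, 0.63, 1.4, 0.6]
# A sustained excursion that plausibly deserves attention.
sustained = [0.6, 0.61, 1.1, 1.15, 1.2, 1.22, 0.62, 0.6]

for name, series in (("noisy", noisy), ("sustained", sustained)):
    print(name,
          "single-sample:", alerts_single_sample(series, limit=1.0),
          "persistent:", alerts_persistent(series, limit=1.0, k=3))
```

The persistence rule suppresses the isolated spikes but still reports the sustained excursion, at the cost of reacting a few readings later; a red team would test both the false-alert rate and that added delay.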

3.4 Evaluating Demand Forecasting Systems

Airlines make many planning decisions months before a flight ever happens. Demand forecasts help determine how many flights operate on a route and what type of aircraft will be used.

What red teams test

⮞ simulated travel demand spikes tied to events

⮞ unusual patterns in online search traffic

⮞ market indicators suggesting new demand trends

What testing reveals

Forecasting models sometimes treat short-term anomalies as long-term demand changes. When that happens, route planning decisions may be influenced by signals that do not reflect real passenger behavior.
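The short-term versus long-term confusion can be illustrated with two simple baselines. Simple exponential smoothing (a stand-in technique here, with an assumed smoothing factor of 0.5) lets a two-week event spike pull the forecast level sharply upward, while a rolling median barely moves. The data and parameters are made up for illustration.

```python
from statistics import median
from typing import List


def exp_smoothing_level(history: List[float], alpha: float) -> float:
    """Simple exponential smoothing: return the smoothed level after the last observation."""
    level = history[0]
    for y in history[1:]:
        level = alpha * y + (1 - alpha) * level
    return level


def median_level(history: List[float], window: int) -> float:
    """Median of the most recent `window` observations (a robust baseline)."""
    return median(history[-window:])


# Weekly route searches (thousands); values are invented for illustration.
baseline = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 100.0, 101.0]
spiked = baseline[:-2] + [240.0, 260.0]  # injected two-week event spike

for label, fn in (("exp smoothing (alpha=0.5)", lambda h: exp_smoothing_level(h, 0.5)),
                  ("8-week median", lambda h: median_level(h, 8))):
    before, after = fn(baseline), fn(spiked)
    print(f"{label}: {before:.1f} -> {after:.1f} (shift {after - before:+.1f})")
```

The median is not automatically better: if demand genuinely shifts, it lags behind. The point of the test is to see which behavior a given forecasting model exhibits and whether planners expect it.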

3.5 Testing Airport Operations Systems

Airports rely on automated planning tools to coordinate gates, baggage routing, and passenger flow.

What red teams test

⮞ gate availability signals that conflict with ground operations data

⮞ passenger surges concentrated in specific terminals

⮞ baggage routing patterns that differ from typical traffic

What testing reveals

Operational systems often attempt to rebalance resources automatically. In some cases, the adjustment helps resolve congestion, while in others the problem simply shifts somewhere else.
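Whether a rebalancing step resolves congestion or simply moves it can be checked by scoring the result with a network-wide metric, instead of looking only at the area that triggered the adjustment. The terminals, capacities, and overflow rule below are hypothetical.

```python
from typing import Dict

# Hypothetical terminals and capacities (passengers per hour), for illustration only.
capacity = {"T1": 1000, "T2": 800, "T3": 1200}
surge = {"T1": 1500, "T2": 700, "T3": 600}  # simulated passenger surge at T1


def offload(load: Dict[str, int], source: str, target: str) -> Dict[str, int]:
    """Toy rebalancing rule: move everything above capacity at `source` to `target`."""
    new = dict(load)
    overflow = max(new[source] - capacity[source], 0)
    new[source] -= overflow
    new[target] += overflow
    return new


def peak_utilization(load: Dict[str, int]) -> float:
    """System-wide metric: worst utilization across all terminals."""
    return max(load[t] / capacity[t] for t in load)


for target in ("T2", "T3"):
    after = offload(surge, "T1", target)
    print(f"T1 -> {target}: T1 at {after['T1'] / capacity['T1']:.0%}, "
          f"network peak {peak_utilization(surge):.0%} -> {peak_utilization(after):.0%}")
```

Measured at T1 alone, both moves look like a fix. The network-wide peak shows that sending the overflow to T2 just relocates the bottleneck, while T3 can absorb it.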

3.6 Probing Delay Prediction Models

Delay prediction systems attempt to anticipate disruptions before they spread through the network.

What red teams test

⮞ weather signals suggesting storms that never occur

⮞ historical delay data modified during testing

⮞ conflicting operational inputs from different sources

What testing reveals

When prediction models rely heavily on past patterns, distorted inputs can produce delay forecasts that turn out to be inaccurate.

3.7 Evaluating Cross-System Decision Pipelines

Many airline systems share information. Pricing models influence demand forecasts, which in turn affect scheduling decisions.

What red teams test

⮞ demand forecasts feeding incorrect signals into scheduling tools

⮞ scheduling disruptions influencing pricing models

⮞ operational anomalies spreading between connected systems

What testing reveals

Problems sometimes appear where systems exchange data rather than inside a single model. These interactions illustrate the broader AI risk in transportation, where automated decisions can propagate across multiple airline systems.
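A cross-system test mainly needs visibility into the values passed between models. The hypothetical three-stage pipeline below (pricing, then demand forecast, then aircraft selection) records each stage's output so a red team can see where an injected booking burst starts changing downstream decisions. Every rule and aircraft label here is an illustrative assumption.

```python
from typing import Dict, List, Tuple, Union

# Hypothetical three-stage pipeline. Each stage is a deliberately simple stand-in
# for a pricing, forecasting, or scheduling model; none of the rules are real.


def pricing_stage(bookings_last_hour: int) -> float:
    # Fare index: 1.0 at a normal pace of 5 bookings/hour, +4% per extra booking.
    return 1.0 + 0.04 * max(bookings_last_hour - 5, 0)


def forecast_stage(fare_index: float, base_demand: int) -> int:
    # Assumption: the forecaster reads a rising fare index as evidence of strong demand.
    return round(base_demand * fare_index)


FLEET: List[Tuple[str, int]] = [("regional-76", 76), ("narrowbody-180", 180), ("widebody-300", 300)]


def scheduling_stage(forecast_passengers: int) -> str:
    # Choose the smallest aircraft whose seat count covers the forecast.
    for name, seats in FLEET:
        if forecast_passengers <= seats:
            return name
    return FLEET[-1][0]


def run_pipeline(bookings_last_hour: int, base_demand: int = 150) -> Dict[str, Union[int, float, str]]:
    """Run all three stages and keep every intermediate value, so a red team
    can see at which boundary an injected anomaly starts changing decisions."""
    fare_index = pricing_stage(bookings_last_hour)
    forecast = forecast_stage(fare_index, base_demand)
    aircraft = scheduling_stage(forecast)
    return {"bookings_last_hour": bookings_last_hour, "fare_index": fare_index,
            "forecast": forecast, "aircraft": aircraft}


normal = run_pipeline(bookings_last_hour=5)
burst = run_pipeline(bookings_last_hour=20)  # short burst of scripted bookings
for key in normal:
    if normal[key] != burst[key]:
        print(f"{key}: {normal[key]} -> {burst[key]}")
```

In this toy chain the burst never touches the scheduler directly, yet it ends up selecting a larger aircraft because each stage trusts the output of the one before it.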

4. How Aviation AI Fails Under Adversarial Pressure

Automated systems in aviation rarely collapse because of a dramatic technical failure. More often the issue begins quietly: something small in the data is off, the model treats it as normal, and the system reacts in ways nobody expected.

Most operational models assume the information they receive is trustworthy. Booking activity is assumed to reflect real demand. Weather feeds are assumed to match actual conditions. Sensor readings are assumed to represent what the aircraft is experiencing.

Red teams spend a lot of time questioning those assumptions.

When analysts begin introducing inconsistent or manipulated inputs, certain patterns appear again and again.

Pricing Signal Manipulation

How could airline pricing models be influenced by misleading demand signals?

Pricing engines react quickly to booking patterns. If seats on a route begin selling faster than expected, fares usually increase. When bookings slow down, the system often lowers prices to encourage demand.

During testing, analysts sometimes simulate unusual booking behavior. A series of automated reservations might appear for a specific route, only to be cancelled shortly afterward. In other cases, search activity spikes suddenly for destinations that normally see stable demand.

Pricing models occasionally treat these signals as genuine market behavior. The resulting fare changes can be surprisingly aggressive, revealing gaps in aviation AI security controls around pricing inputs.

Operational Data Poisoning

Why do scheduling systems struggle when operational signals conflict?

Airline operations depend on multiple data streams arriving at the same time—weather forecasts, aircraft availability, crew schedules, passenger connections, and airport capacity.

Red teams sometimes introduce inconsistencies across those inputs. For example, a weather feed may suggest clear conditions while airport operations report delays, or aircraft availability may appear normal in one system but restricted in another.

When AI flight scheduling systems attempt to resolve these conflicts, they still generate recovery plans. On paper, the plan may look efficient. In real operations, however, it might require aircraft or crews that are not actually available.
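One control a red team might look for is an independent validation step between the scheduler and execution. A minimal sketch, assuming a plan is a list of flight, aircraft, and crew assignments and that maintenance restrictions and remaining crew duty time can be read from separate systems. The field names, identifiers, and the duty check (block time only, far simpler than real duty-time rules) are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set

# Hypothetical data model: flight numbers, tail numbers and crew IDs are made up,
# and the only checks are maintenance restrictions and remaining crew duty time.


@dataclass
class Assignment:
    flight: str
    tail: str      # aircraft registration proposed by the recovery plan
    crew_id: str


def validate_plan(plan: List[Assignment],
                  maintenance_restricted: Set[str],
                  crew_remaining_duty_min: Dict[str, int],
                  block_time_min: Dict[str, int]) -> List[str]:
    """Cross-check a proposed recovery plan against independent source systems.
    Returns a list of conflicts; an empty list means none were found."""
    problems: List[str] = []
    duty_used: Dict[str, int] = {}
    for a in plan:
        if a.tail in maintenance_restricted:
            problems.append(f"{a.flight}: {a.tail} is restricted in the maintenance system")
        remaining = crew_remaining_duty_min.get(a.crew_id)
        if remaining is None:
            problems.append(f"{a.flight}: crew {a.crew_id} missing from crew records")
            continue
        # Accumulate block time per crew so back-to-back legs are checked together.
        needed = duty_used.get(a.crew_id, 0) + block_time_min[a.flight]
        if needed > remaining:
            problems.append(f"{a.flight}: crew {a.crew_id} needs {needed} min, has {remaining}")
        duty_used[a.crew_id] = needed
    return problems


plan = [Assignment("XX101", "REG-A", "C1"),
        Assignment("XX205", "REG-B", "C2"),
        Assignment("XX206", "REG-B", "C2")]
issues = validate_plan(plan,
                       maintenance_restricted={"REG-A"},
                       crew_remaining_duty_min={"C1": 300, "C2": 200},
                       block_time_min={"XX101": 120, "XX205": 110, "XX206": 110})
for issue in issues:
    print(issue)
```

In the example, both conflicts would be invisible to a scheduler that reads availability from a single source; they only surface when the plan is compared against the maintenance and crew records.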

Sensor Signal Manipulation

Can aircraft monitoring systems misinterpret telemetry data?

Modern aircraft generate enormous volumes of sensor data during every flight. That data is analyzed continuously to detect mechanical issues before they become serious.

During testing exercises, analysts sometimes introduce subtle irregularities in those signals. Temperature readings might fluctuate slightly, or vibration data may drift beyond typical patterns.

Aviation safety AI systems sometimes struggle to interpret these edge cases. In some situations, the model raises maintenance alerts that engineers later determine were unnecessary. In others, it overlooks patterns that deserve closer inspection.
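The overlooked-pattern case can involve slow drift that stays under a fixed limit for a long time. The sketch below compares a fixed limit with a one-sided CUSUM detector, a standard change-detection technique used here as an illustrative swap-in rather than a description of how any particular aircraft monitoring system works. The signal, limit, slack, and alarm threshold are all assumed values.

```python
from typing import List, Optional


def first_threshold_alarm(readings: List[float], limit: float) -> Optional[int]:
    """Index of the first reading above a fixed limit, or None."""
    for i, r in enumerate(readings):
        if r > limit:
            return i
    return None


def first_cusum_alarm(readings: List[float], target: float, slack: float,
                      threshold: float) -> Optional[int]:
    """One-sided upper CUSUM: accumulate how far readings exceed target + slack,
    floor the running sum at zero, and alarm when it passes `threshold`."""
    s = 0.0
    for i, r in enumerate(readings):
        s = max(0.0, s + (r - target - slack))
        if s > threshold:
            return i
    return None


# Synthetic temperature channel: 10 nominal readings at 80.0, then a slow drift of +0.3 per reading.
readings = [80.0] * 10 + [80.0 + 0.3 * k for k in range(1, 21)]

print("fixed limit 85.0, first alarm at index:", first_threshold_alarm(readings, limit=85.0))
print("CUSUM, first alarm at index:",
      first_cusum_alarm(readings, target=80.0, slack=0.5, threshold=5.0))
```

On this synthetic series the CUSUM alarm fires ten readings before the fixed limit is crossed. Tuning the slack and threshold trades earlier detection against more false alerts, which ties back to the unnecessary-alert problem.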

5. Why Traditional Testing Misses These Vulnerabilities

Traditional security testing focuses on known attack paths—web applications, APIs, authentication systems, and network infrastructure. Those checks are still important, but they rarely examine how automated models behave when the inputs themselves become unreliable.

Most security assessments assume that operational data is trustworthy. They verify whether systems are protected from unauthorized access, not whether the models behind those systems can be misled by unusual inputs.

Airline decision systems behave differently. Pricing tools react to booking patterns. Scheduling models rely on operational data from multiple sources. Monitoring platforms interpret sensor readings from aircraft.

When those signals change in unexpected ways, the models may produce results that look correct mathematically but do not reflect real-world conditions.

This gap is one of the main reasons organizations are starting to invest in AI red teaming in aviation as a way to evaluate AI risk in transportation systems before those weaknesses appear in real operations.

6. Implications for Aviation Organizations

Airlines have gradually moved many operational decisions into automated systems. Pricing engines react to booking behavior almost instantly. Planning tools help reorganize aircraft and crews when schedules start slipping. Maintenance platforms scan aircraft telemetry looking for early signs that something might need attention. None of this is unusual anymore—it’s how modern airline operations run.

What’s changed is the kind of risk these systems introduce.

When a model becomes part of daily operations, the focus cannot stay only on traditional security checks. Teams also need to understand how those systems behave when the data feeding them isn’t perfectly reliable. That’s where exercises like AI red teaming in aviation begin to matter. Instead of assuming the inputs are clean, analysts deliberately test how systems react to unusual patterns in booking activity, operational updates, or telemetry data.

Another thing that becomes obvious during testing is how connected these tools are. Pricing models influence demand forecasts, and forecasting systems feed into planning tools. A small problem in one area can quietly influence decisions elsewhere. That interconnected behavior is what makes AI risk in transportation worth paying attention to as airlines rely more heavily on automated decision systems.

7. Conclusion

Airline operations now depend on systems that quietly analyze large amounts of operational data. Pricing adjustments, flight planning decisions, and maintenance alerts increasingly come from automated models rather than manual analysis. Most of the time those systems perform exactly as expected.

The challenge appears when the inputs behind those models begin to shift.

Irregular booking activity, conflicting operational updates, or unusual sensor readings can influence automated decisions in ways that airline teams may not immediately notice. Testing those edge cases is where AI red teaming in aviation becomes useful. It gives organizations a way to examine how systems behave outside ideal conditions.

As automation expands across the industry, understanding these behaviors will be essential to managing AI risk in transportation systems that airlines rely on every day.

“When aviation runs on data, even small distortions can ripple into system-wide decisions.”
