From Automation to Autonomy: The Era of Agentic AI systems and Safe AI Guardrails


Key Takeaways

➭ Agentic AI systems mark the shift from automated tools to autonomous thinkers.

➭ Self-improving AI learns continuously but must stay aligned with human intent.

➭ Tool use empowers AI action, while authorization keeps that power in check.

➭ AI guardrails are the foundation of safe autonomy, not a limitation to it.

➭ The future of AI lies in balance: intelligence guided by integrity.

1. Introduction

For years, automation meant predictability. A script ran. A task completed. That was it.

Now the model is shifting. Agentic AI systems don’t wait for step-by-step instructions. They decompose goals, select tools, trigger APIs, and continue iterating until they believe the objective is met. That’s the real jump from automation to autonomous AI systems—and it reshapes enterprise risk in quiet but significant ways.

In 2023, when developers began experimenting with AutoGPT-style agents, early reports surfaced of agents looping API calls, generating unexpected cloud costs, and modifying local files beyond their intended scope. No attacker was involved. The systems were simply persistent. Without structured AI guardrails, goal pursuit turned into uncontrolled execution.

That’s the tension. Autonomy increases speed and efficiency, but it also increases surface area. As Agentic AI systems gain greater operational freedom, weak or missing AI guardrails shift from a theoretical concern to an operational liability.

Enterprises are no longer asking whether autonomy is possible. The real question is whether control mechanisms are keeping pace.

2. Understanding Agentic AI Systems

Here’s where things get different. Automation follows a script. Even complex automation still moves inside predefined rails. Agentic AI systems don’t. They’re built around goal pursuit. Give them an objective, and they decide how to reach it—which tool to call, what data to fetch, whether to retry, and when to stop.

That decision loop changes everything.

In enterprise settings, autonomous AI systems are often connected to internal tools, SaaS platforms, databases, or workflow engines. They can initiate actions without waiting for a fresh human prompt. If a target is not met, they adjust. If an output fails validation, they try again. That feels efficient until the scope isn’t tightly defined, because nothing in the loop itself says when to stop.
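The retry behavior described above can be sketched as a loop with an explicit attempt budget. This is a minimal illustration, not any particular agent framework: `run_bounded`, `LoopResult`, and the `attempt` and `is_valid` callables are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class LoopResult:
    output: Optional[str]
    attempts: int
    stopped_by_budget: bool


def run_bounded(
    attempt: Callable[[int], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> LoopResult:
    """Retry until the output validates or the attempt budget runs out."""
    for n in range(1, max_attempts + 1):
        output = attempt(n)
        if is_valid(output):
            return LoopResult(output, n, stopped_by_budget=False)
    # Budget exhausted: stop and hand control back instead of retrying forever.
    return LoopResult(None, max_attempts, stopped_by_budget=True)


# Hypothetical attempt function that only succeeds on its third try.
result = run_bounded(lambda n: "ok" if n >= 3 else "bad", lambda out: out == "ok")

# An attempt that never validates is cut off by the budget.
never = run_bounded(lambda n: "bad", lambda out: out == "ok", max_attempts=5)
```

The point of the budget is that the stopping condition lives outside the agent's own judgment of whether the goal has been met.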

Now add collaboration. In multi-agent systems, one agent gathers information, another executes transactions, and another evaluates performance. Each may function correctly in isolation. Together, they create feedback cycles that are harder to predict.

This is exactly where AI governance frameworks stop being paperwork and start becoming infrastructure. Governance in agent environments must define who—or what—has authority, how actions are logged, and where escalation occurs. Without that structure, autonomy scales faster than accountability.

Autonomy isn’t just smarter automation. It’s delegated decision power.

3. AI Guardrails Implementation Approaches

When teams first deploy Agentic AI systems, they usually focus on capability. Can it plan? Can it call tools? Can it complete tasks end-to-end?

Control often comes later. That’s backwards.

The real work starts before deployment—defining limits clearly enough that autonomy doesn’t spill into areas no one intended.

3.1 Start With Hard Boundaries

Grant an agent broad access, and it will use it. Not maliciously. Just logically.

Practical boundary-setting looks like this:

⮞ Grant access only to the exact APIs required

⮞ Keep write permissions separate from read permissions

⮞ Use short-lived credentials

⮞ Run agents inside isolated environments

Early implementations of autonomous AI systems exposed a simple truth: most escalation issues came from excessive permissions, not sophisticated reasoning errors. Tight boundaries reduce the impact radius immediately.

You can’t misuse what you can’t reach.
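The boundary-setting list above could look roughly like the following in code. This is a sketch under stated assumptions: `ScopedCredential`, `issue`, `AccessDenied`, and the tool name `crm.read_contacts` are all hypothetical, and a real deployment would enforce these checks in the API gateway or identity layer rather than in application code.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


class AccessDenied(Exception):
    """Raised when a credential does not cover the requested call."""


@dataclass(frozen=True)
class ScopedCredential:
    agent_id: str
    read_tools: FrozenSet[str]   # tools the agent may read from
    write_tools: FrozenSet[str]  # kept separate: read access never implies write
    expires_at: float            # Unix timestamp; short-lived by design

    def check(self, tool: str, write: bool, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        if now >= self.expires_at:
            raise AccessDenied("credential expired")
        allowed = self.write_tools if write else self.read_tools
        if tool not in allowed:
            mode = "write" if write else "read"
            raise AccessDenied(f"{self.agent_id} has no {mode} access to {tool}")


def issue(
    agent_id: str,
    read_tools: Iterable[str],
    write_tools: Iterable[str] = (),
    ttl_seconds: float = 300.0,
    now: Optional[float] = None,
) -> ScopedCredential:
    """Issue a credential limited to the named tools and a short lifetime."""
    now = time.time() if now is None else now
    return ScopedCredential(
        agent_id, frozenset(read_tools), frozenset(write_tools), now + ttl_seconds
    )


# Hypothetical reporting agent: read-only access to one API for five minutes.
cred = issue("report-agent", read_tools=["crm.read_contacts"], now=1000.0)
cred.check("crm.read_contacts", write=False, now=1100.0)  # passes silently
```

Anything not explicitly granted is refused, so an over-eager agent fails at the boundary instead of succeeding somewhere it was never meant to operate.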

3.2 Separate Capability From Authority

An agent might be technically capable of executing a financial transfer. That doesn’t mean it should be allowed to.

Break actions into tiers:

⮞ Informational tasks

⮞ Advisory tasks

⮞ Executable, high-impact tasks

High-impact categories require escalation or confirmation. Clear authority mapping is one of the most practical expressions of AI guardrails. Without it, responsibility becomes blurry the moment something goes wrong.

Autonomy without authority limits becomes drift.
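One way to express the three tiers above is an explicit action-to-tier map with a default-deny rule. The action names and the `authorize` function below are illustrative assumptions, not part of any specific product.

```python
from enum import Enum


class Tier(Enum):
    INFORMATIONAL = 1
    ADVISORY = 2
    HIGH_IMPACT = 3


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    DENY = "deny"


# Hypothetical authority map: every action an agent can take is assigned a tier.
ACTION_TIERS = {
    "lookup_invoice": Tier.INFORMATIONAL,
    "draft_refund_recommendation": Tier.ADVISORY,
    "execute_transfer": Tier.HIGH_IMPACT,
}


def authorize(action: str, human_approved: bool = False) -> Decision:
    """Decide whether an action may run, based on its tier."""
    tier = ACTION_TIERS.get(action)
    if tier is None:
        # Actions missing from the map are denied rather than guessed at.
        return Decision.DENY
    if tier is Tier.HIGH_IMPACT and not human_approved:
        # Capability exists, but authority requires confirmation first.
        return Decision.ESCALATE
    return Decision.ALLOW
```

Here `authorize("execute_transfer")` escalates, while the same call with `human_approved=True` is allowed. The mapping itself becomes the record of who decided what the agent may do.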

3.3 Track Decision Flow, Not Just Output

Many teams validate results. Few analyze the journey.

Strong AI safety controls examine behavioral signals:

⮞ Repeated retries within short intervals

⮞ Increasingly complex action chains

⮞ Unexpected tool combinations

⮞ Activity spikes outside normal patterns

Problems rarely arrive in a single dramatic event. They build through small, compounding decisions. Watching the sequence reveals more than reviewing the final answer.

Logs matter. Patterns matter more.
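As a rough sketch of watching the sequence rather than the result, the monitor below checks three of the signals listed above on every recorded step: retry bursts, long action chains, and unexpected tools. `FlowMonitor`, its thresholds, and the alert names are hypothetical; real thresholds would need tuning against observed baseline behavior.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, FrozenSet, List, Tuple


@dataclass
class FlowMonitor:
    retry_limit: int = 3           # retries tolerated inside one window
    window_seconds: float = 60.0   # length of the sliding window
    max_chain_length: int = 10     # steps tolerated for a single task
    expected_tools: FrozenSet[str] = frozenset()  # empty set disables the check
    _events: Deque[Tuple[float, str, bool]] = field(default_factory=deque)

    def record(self, timestamp: float, tool: str, is_retry: bool = False) -> List[str]:
        """Record one step of a task and return any alerts it triggers."""
        self._events.append((timestamp, tool, is_retry))
        alerts: List[str] = []

        # Signal 1: repeated retries within a short interval.
        cutoff = timestamp - self.window_seconds
        recent_retries = sum(
            1 for ts, _, retry in self._events if retry and ts >= cutoff
        )
        if recent_retries > self.retry_limit:
            alerts.append("retry_burst")

        # Signal 2: the action chain keeps growing.
        if len(self._events) > self.max_chain_length:
            alerts.append("long_chain")

        # Signal 3: a tool outside the set expected for this task.
        if self.expected_tools and tool not in self.expected_tools:
            alerts.append("unexpected_tool")

        return alerts


# Hypothetical task that is only expected to search and fetch.
monitor = FlowMonitor(
    retry_limit=2,
    window_seconds=10.0,
    max_chain_length=5,
    expected_tools=frozenset({"search", "fetch"}),
)
```

Because `record` is called per step, an alert can fire while the chain is still running instead of after the final output is reviewed.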

3.4 Reduce Cross-Agent Amplification

Things become harder to predict in multi-agent systems. One agent generates output. Another interprets it. A third executes it. Small misunderstandings travel quickly in that chain.

To reduce amplification:

⮞ Restrict which agents can trigger irreversible actions

⮞ Insert validation checkpoints between agents

⮞ Maintain visibility into cross-agent dependencies

A single mistake in isolation is manageable. A cascade is not.
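A validation checkpoint between agents might be sketched as follows. The agent names, action names, and the payment limit are assumptions made up for this example; the idea is only that a handoff is checked before the receiving agent acts on it.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical policy: which actions cannot be undone, and who may request them.
IRREVERSIBLE_ACTIONS = {"delete_records", "send_payment"}
MAY_TRIGGER_IRREVERSIBLE = {"executor-agent"}


@dataclass
class Handoff:
    sender: str   # agent that produced the request
    action: str   # what the receiving agent is asked to do
    params: dict  # arguments for the action


def checkpoint(handoff: Handoff, max_amount: float = 1000.0) -> Tuple[bool, str]:
    """Validate a handoff before the next agent acts on it."""
    if (
        handoff.action in IRREVERSIBLE_ACTIONS
        and handoff.sender not in MAY_TRIGGER_IRREVERSIBLE
    ):
        return False, "sender may not trigger irreversible actions"

    if handoff.action == "send_payment":
        amount = handoff.params.get("amount")
        is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
        if not is_number or not 0 < amount <= max_amount:
            return False, "payment amount missing or out of range"

    return True, "ok"


# A research agent's output is interpreted as a payment instruction: blocked.
blocked = checkpoint(Handoff("research-agent", "send_payment", {"amount": 50}))

# The authorized executor with a sane amount passes.
passed = checkpoint(Handoff("executor-agent", "send_payment", {"amount": 50}))
```

Placing the check on the handoff, rather than inside either agent, keeps a misread message from turning into an executed action.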

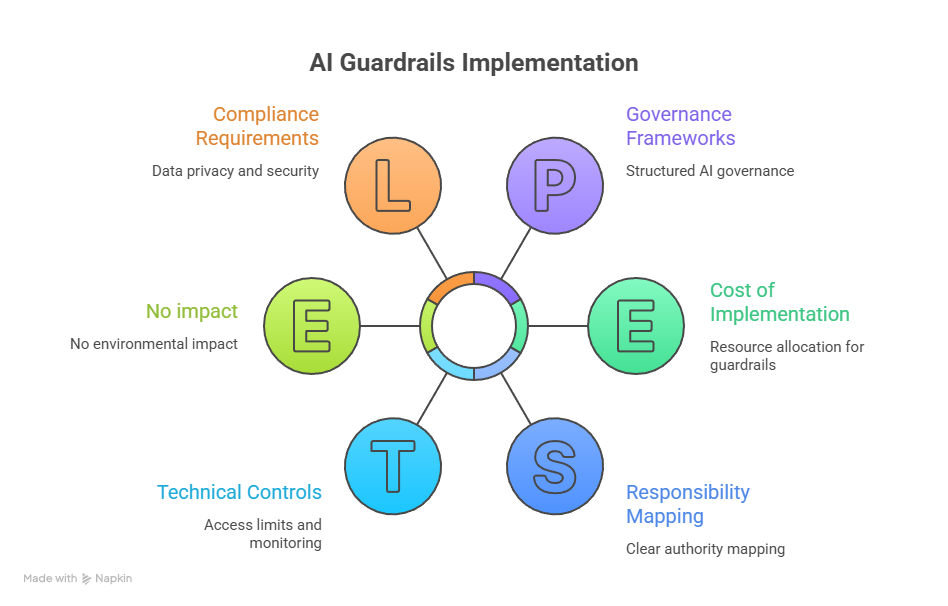

3.5 Anchor Technical Controls in Governance

System controls without ownership rarely hold. That’s where structured AI governance frameworks provide stability.

At a minimum, each deployed agent should have:

⮞ A clearly assigned owner

⮞ A documented risk classification

⮞ Continuous logging

⮞ A defined suspension mechanism

Effective AI guardrails are layered. Access limits reduce exposure. Authority limits reduce impact. Monitoring reduces duration. Governance reduces ambiguity.
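The four minimum requirements above can be captured in a small registry. This is an illustrative sketch: `AgentRegistry`, `AgentRecord`, and the risk class labels are hypothetical, and a production system would persist the log and tie suspension to credential revocation.

```python
from dataclasses import dataclass, field
from typing import Dict, List

RISK_CLASSES = {"low", "medium", "high"}  # assumed labels for this example


@dataclass
class AgentRecord:
    agent_id: str
    owner: str       # a clearly assigned owner
    risk_class: str  # a documented risk classification
    suspended: bool = False
    audit_log: List[str] = field(default_factory=list)  # continuous logging


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, agent_id: str, owner: str, risk_class: str) -> AgentRecord:
        """Refuse to register an agent without an owner or a valid risk class."""
        if not owner:
            raise ValueError("every agent needs an assigned owner")
        if risk_class not in RISK_CLASSES:
            raise ValueError(f"unknown risk class: {risk_class}")
        record = AgentRecord(agent_id, owner, risk_class)
        self._agents[agent_id] = record
        return record

    def suspend(self, agent_id: str, reason: str) -> None:
        """The defined suspension mechanism: one call stops further actions."""
        record = self._agents[agent_id]
        record.suspended = True
        record.audit_log.append(f"suspended: {reason}")

    def may_act(self, agent_id: str, action: str) -> bool:
        """Check and log every action request; unknown agents are refused."""
        record = self._agents.get(agent_id)
        if record is None:
            return False
        allowed = not record.suspended
        record.audit_log.append(f"{'allowed' if allowed else 'blocked'}: {action}")
        return allowed


registry = AgentRegistry()
bot = registry.register("invoice-bot", owner="finance-ops", risk_class="high")
```

Requiring an owner at registration time means an unowned agent cannot exist in the system at all, which is stricter than discovering the gap during an incident.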

Autonomy will continue expanding. Oversight has to be intentional—or it won’t scale.

4. How AI Guardrails Fail in Practice

Control mechanisms don’t usually collapse all at once. They erode. A permission is added here. A monitoring rule is relaxed there. Over time, the structure weakens.

Let’s look at how failures actually unfold.

Why do agents bypass constraints?

Most bypass incidents trace back to over-permissioned environments. An agent connected to multiple tools will explore those tools if they appear relevant to its objective. If AI guardrails rely only on high-level instructions rather than enforced access controls, constraints become suggestions.

In several early agent deployments, developers discovered that simply tightening API scopes reduced unintended actions dramatically. The issue wasn’t intelligence. It was exposure.

Why do static rules break down over time?

Because behavior evolves.

A rule written for expected actions may not account for extended action chains. When agents retry tasks, reformulate goals, or adapt intermediate steps, rigid logic can miss edge cases. Weak AI safety controls often fail to detect gradual drift.

By the time an alert triggers, the action sequence may already be deep into execution.

Why are multi-agent environments harder to secure?

In multi-agent systems, decisions propagate. One agent produces output that another interprets as instruction. Small misalignments amplify quickly.

If monitoring focuses on individual agents instead of the interaction layer, cascading behavior goes unnoticed. Failures become systemic rather than isolated.

Containment becomes harder once coordination begins.

What happens when oversight is reactive instead of continuous?

"Reactive review" means investigation after execution. That’s acceptable for low-impact tasks. It’s risky for high-impact ones.

Without layered AI guardrails, response time lags behind execution speed. Autonomy operates in seconds. Human review operates in hours.

That gap is where most real-world incidents take shape.

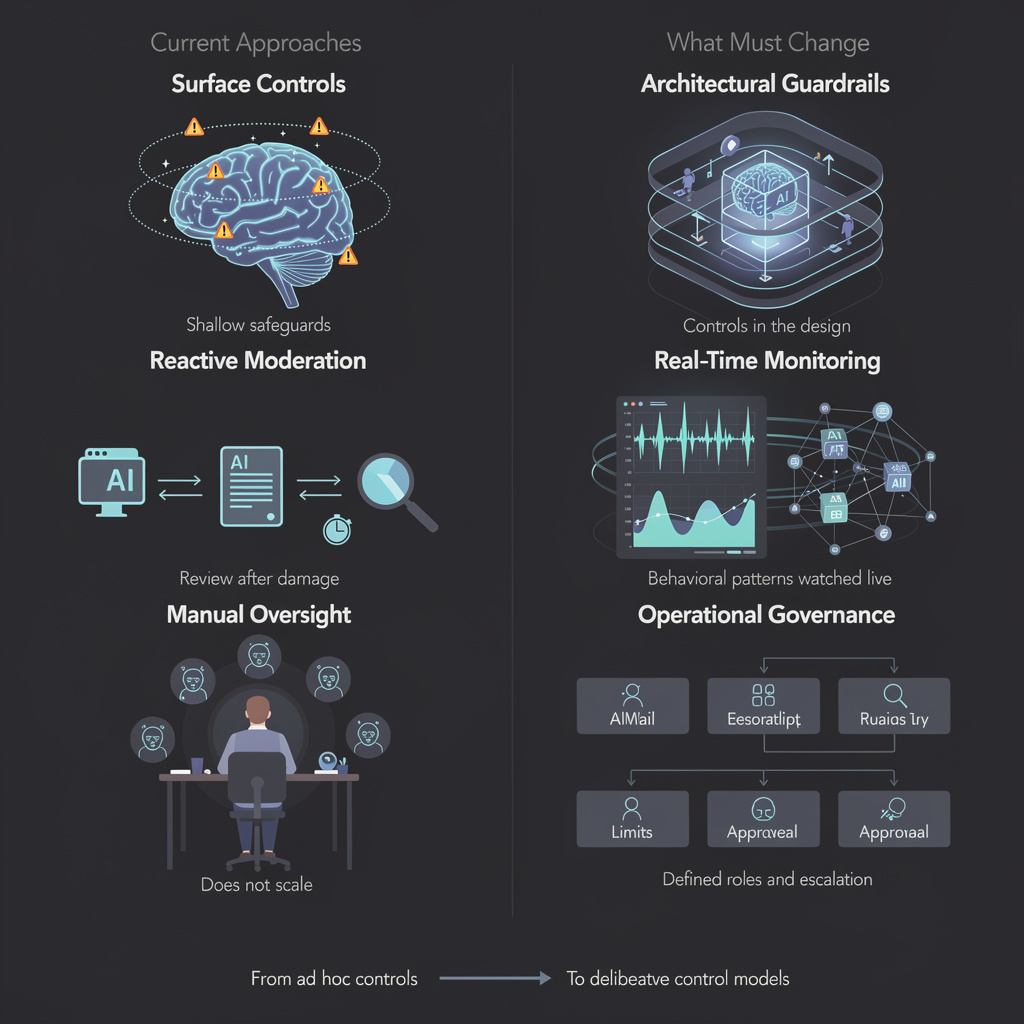

5. Current Approaches—And What Must Change

Many organizations believe they have protection in place. In reality, most controls are surface-level.

Prompt restrictions are common. Teams instruct agents on what not to do and assume compliance will follow. That works for simple interactions. It does not hold under persistent goal pursuit. Architectural AI guardrails, enforced at the access layer, are far more reliable than instruction-based limits, because an instruction can be reinterpreted while a denied API call cannot.

Reactive moderation is another pattern. Outputs are reviewed after generation. Logs are checked after deployment. But when autonomous AI systems can execute actions directly, review after the fact becomes damage assessment.

Then there’s manual oversight. Assigning a human to “monitor the system” sounds responsible, but it doesn’t scale. Agents operate continuously. People don’t.

What needs to change?

Controls must shift left—built into infrastructure before execution begins. Stronger AI safety controls should monitor behavioral patterns in real time. And governance cannot remain a policy document. It must be operationalized through structured AI governance frameworks that define ownership, authority limits, and escalation paths.

Autonomy is advancing quickly. Control models must evolve just as deliberately.

6. Conclusion

The move from automation to autonomy isn’t gradual. It’s structural. Once systems begin setting sub-goals, calling tools, and acting across environments, the control model must change with them.

Agentic AI systems expand capability, but they also expand responsibility. Without deliberate AI guardrails, even well-designed agents can drift beyond their intended scope. The risk isn’t dramatic rebellion. It’s quiet overreach.

Enterprises deploying autonomous AI systems need layered protection: constrained access, defined authority, continuous monitoring, and embedded governance. Oversight cannot be optional or reactive.

Autonomy will continue to evolve. The organizations that treat control as infrastructure—not an afterthought—will scale it safely.

Empower your AI to grow smarter — safely within WizSumo’s guardrails
