Guide to hire AI red teamer in 2026

.png)

Key Takeaways

Hiring the right AI red teamer protects your product from real-world failures.

Modern AI Red Teaming requires deep expertise across RAG, agents, and multimodal systems.

Pricing in 2026 varies widely — choose the model that fits your scope and maturity.

Strong communication and reproducible reporting matter as much as technical skill.

1. Why Hiring an AI Red Teamer Matters in 2026

AI has quietly moved into the core of many products. Customer support bots answer thousands of queries a day. Fraud systems rely on models to flag suspicious transactions. Internal copilots summarize documents and generate code. The convenience is obvious — but it also creates a new attack surface.

Unlike traditional software, AI systems can be manipulated through behavior rather than code. A carefully written prompt can override instructions. A model might leak information it was never meant to reveal. Sometimes the system simply behaves in ways its developers never expected. This is exactly the type of risk AI red teaming tries to uncover.

During AI security testing, specialists deliberately probe AI systems the same way attackers would. They test how the model reacts to adversarial prompts, attempts to bypass guardrails, and inputs designed to confuse the system.

The rise of generative AI has pushed this even further. Organizations deploying chatbots or AI assistants often run LLM red teaming exercises to see how easily a model can be manipulated.

For many companies, the key question isn’t whether these risks exist — it’s when to hire AI red teamer experts who can evaluate the system before it becomes a real AI security problem.

2. What AI Red Teamers Actually Do

People sometimes picture AI red teaming as a normal security audit with a different label. The reality is messier — and more interesting. Instead of hunting for bugs in code, the red teamer spends most of their time trying to make the AI behave in ways its creators didn’t expect.

The first step is understanding the system. What model is running behind the scenes? How do users interact with it? Is it connected to internal data or other tools? Those details matter because weaknesses in AI often appear at the edges — where prompts, APIs, and data sources meet.

From there the testing begins. In many projects the focus quickly shifts to LLM red teaming. Testers experiment with prompts that twist instructions, hide intent, or slowly guide the model into unsafe territory. Sometimes a harmless-looking question reveals internal information. Sometimes the model ignores its own safety rules after a few turns of conversation.

Another angle involves broader AI security testing. Red teamers push strange inputs into the system — incomplete data, contradictory prompts, or unusual scenarios the developers never imagined. These tests reveal how the model behaves when the environment becomes unpredictable.

What comes out of this process is rarely dramatic, but it’s valuable. Small behaviors get flagged, guardrails get tightened, and teams gain a clearer picture of their real AI security posture before regulators start asking for AI compliance testing evidence.

3. When Should a Company Hire an AI Red Teamer?

Teams usually don’t wake up one morning and say, “Let’s run AI red teaming.” The trigger is normally something practical. A new AI feature is about to launch. A security lead raises concerns. Or someone on the product team wonders what happens if a user deliberately tries to confuse the model.

Those moments are usually when organizations start looking for adversarial testing.

3.1 Before Launch

Right before releasing an AI system is a good time for AI security testing. The product is already functional, but "changes" can still be made if something risky shows up.

Red teamers approach the system like curious users who refuse to follow instructions. They try odd prompts, misleading phrasing, or "long conversation chains" to see whether the model drifts away from its rules.. Sometimes it does.

Catching those behaviors before public release saves teams from fixing problems under pressure later.

3.2 After the System Is Live

Things change once real users arrive. People experiment. They phrase questions in unexpected ways. Some actively try to break the system.

That’s why many companies run periodic LLM red teaming sessions after deployment. The goal is simple: observe how the model behaves when it’s no longer in a controlled environment.

3.3 When the AI System Influences Important Outcomes

If a model is involved in decisions — "approving payments", filtering job applicants, or guiding support workflows , the risks increase.

In those situations, organizations often hire AI red teamer specialists who can challenge the system from an attacker’s perspective.

3.4 During Governance or Risk Reviews

Security teams and regulators increasingly ask how companies test AI behavior under pressure. This is where adversarial exercises become part of AI compliance testing and broader AI security assessments.

A simple rule tends to emerge: once an AI system interacts with real users or sensitive data, it deserves deeper testing.

4. Skills to Look for When Hiring an AI Red Teamer

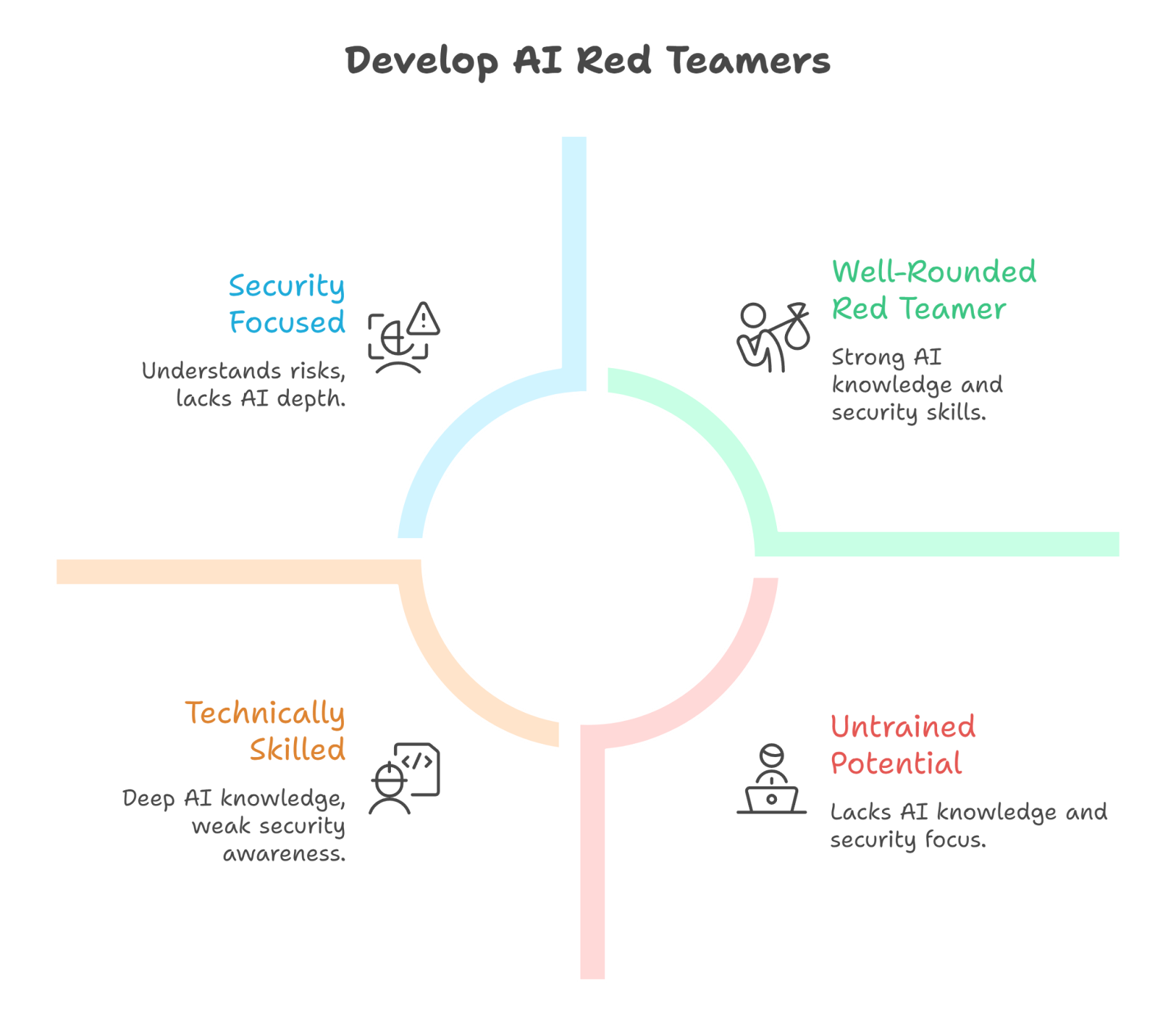

Once a company decides to run AI red teaming, the next challenge appears quickly: who should actually do it? The role is unusual. It sits somewhere between machine learning research and security testing. Someone who only knows one side of that equation often struggles to find meaningful weaknesses.

Good red teamers usually bring a mix of technical depth and curiosity. They spend a lot of time experimenting, observing how systems behave, and asking questions developers might "overlook".

4.1 Understanding How AI Systems Behave

A red teamer needs a working understanding of how AI models operate.

Not necessarily at a research level, but enough to understand prompts, model responses, and how instructions influence behavior. Without that context, it becomes difficult to design meaningful AI security testing scenarios.

4.2 Adversarial Mindset

Red teaming is less about "writing code" and more about thinking creatively about failure.

Testers try strange prompts, unusual conversation paths, and "confusing inputs" simply to observe how the model reacts. Many vulnerabilities show up only when someone intentionally pushes the system in directions the developers never considered.

4.3 Practical Experience With Generative AI

With so many companies deploying chatbots and assistants, experience in LLM red teaming has become extremely valuable.

This includes testing techniques such as prompt injection attempts, guardrail bypassing, or attempts to extract hidden information from a model.

4.4 Security Awareness

AI systems rarely exist alone. They are usually connected to APIs, tools, or databases. Because of this, strong candidates often combine adversarial testing with a broader understanding of AI security risks.

4.5 Awareness of Governance and Regulation

Organizations are increasingly expected to show that their AI systems behave responsibly.

Red teamers who understand AI compliance testing can help identify issues that might create legal or "governance concerns". When companies hire AI red teamer specialists with this mix of skills, the testing becomes far more realistic — closer to how an attacker might actually explore the system.

5. How to Evaluate an AI Red Teaming Vendor or Expert

Once teams decide they need AI red teaming, the next question is practical: who should actually run the testing? Not every security vendor understands AI systems, and not every AI expert understands adversarial testing. That gap matters.

A good starting point is asking about real experience. Vendors should be able to describe previous AI security testing work and the kinds of systems they have examined. Testing a chatbot, for instance, looks very different from testing a fraud detection model or recommendation engine.

Another signal is how they approach testing. Serious providers usually walk through their process. They might explain how they run adversarial prompts, how they structure LLM red teaming sessions, or how they simulate misuse scenarios that developers did not originally design for.

The reporting style also reveals a lot. The value of AI red teaming is not just finding strange behavior. Teams need to understand why the behavior occurs and what needs to change. Clear explanations, examples, and practical fixes make the results far more useful.

There is also a governance angle now. Many organizations run adversarial exercises alongside AI compliance testing as part of broader AI security reviews. Vendors who understand that context can help security teams translate technical findings into risk and policy discussions.

In the end, the goal isn’t simply to test an AI system. It’s to learn how it behaves under pressure — and what needs attention before those behaviors become real problems.

6. Internal vs External AI Red Teamers

After deciding to run AI red teaming, companies usually face a simple but important decision. Should they build their own team, or bring in outside specialists?

Internal teams have an obvious advantage. They already know the system. The engineers understand how the model was trained, how prompts are structured, and which parts of the product rely on the AI. That context helps when reviewing issues related to AI security, because the team can quickly trace where a risky behavior might originate.

But there’s a downside too. People who design a system tend to think about it in predictable ways. They know the intended behavior, so they naturally test around those expectations. Real attackers don’t work like that.

This is one reason organizations sometimes hire AI red teamer professionals from outside the company. External testers approach the system without the same assumptions. They try unusual prompts, odd workflows, and strange input combinations just to see how the model reacts.

External testing can also help during governance reviews. Some companies include adversarial assessments alongside AI compliance testing so leadership teams can understand how the system behaves under pressure.

In reality, many organizations use both approaches. Internal teams keep an eye on the system continuously, while external experts run deeper testing exercises when the company wants a fresh perspective on its AI red teaming efforts.

7. Conclusion

AI systems are strange creatures. They look like software, but they behave more like unpredictable collaborators. Give them the right prompt and they shine. Phrase something slightly differently and suddenly the response drifts somewhere nobody expected.

That unpredictability is exactly why AI red teaming has started appearing on security roadmaps. Instead of assuming the system will behave correctly, teams deliberately try to push it into uncomfortable territory. Odd prompts. Conflicting instructions. Long conversations that slowly bend the rules. Sometimes nothing happens. Sometimes the model does something surprising.

Those surprises are useful.

They reveal where safeguards are weak, where monitoring is missing, and where a product might behave differently once thousands of users start experimenting with it. For organizations building AI-powered tools, the safest moment to discover those issues is before they turn into public incidents.

That’s also why many companies eventually hire AI red teamer specialists rather than relying only on internal testing. Fresh eyes tend to find things the original builders didn’t think to look for.

The end result isn’t just a safer model. Teams gain a clearer understanding of their overall AI security posture and are better prepared for governance reviews, including future AI compliance testing requirements.

AI will keep evolving. Testing how it behaves under pressure will simply become part of building responsible systems.

Your AI is only as safe as the person trying to break it before the attackers do

.svg)

.png)

.svg)

.png)

.png)