Hackaprompt Guide in 2026 : Inside Ethical AI Testing and Prompt Hacking

.png)

Key Takeaways

Hackaprompt turns AI vulnerabilities into hands-on learning challenges

Understanding What is Hackaprompt helps users master real prompt manipulation

The platform teaches practical techniques for breaking AI by prompts safely and ethically

Challenge tracks help beginners and experts build AI red-team skills step by step

Hackaprompt is a powerful way to explore AI safety, guardrails, and model weaknesses

1. Introduction

Large language models changed how people interact with software. Instead of clicking buttons or navigating complex interfaces, users simply write prompts and the AI responds. That convenience also creates an unusual problem. When instructions are controlled by text, clever wording can sometimes push the system in directions it was never meant to go.

This is exactly the curiosity that led to Hackaprompt.

Hackaprompt is a security challenge where participants try to manipulate an AI model using nothing but prompts. No system access. No code injection. Just language. The goal is simple: convince the model to reveal a hidden word or instruction that it is supposed to keep secret. Sounds trivial at first. It isn’t.

What makes the challenge interesting is how quickly it exposes the fragile side of AI systems. A prompt that looks harmless to a human can change how the model interprets its own instructions. That’s where prompt hacking enters the picture. Participants experiment with unusual phrasing, layered instructions, and creative scenarios until the model slips.

And it often does.

Competitions like Hackaprompt have become a playground for “researchers” exploring ethical AI hacking and AI safety testing. Instead of waiting for real attackers to discover weaknesses, the community tries to find them first.

2. What is Hackaprompt?

Imagine an AI that has been told: never reveal a specific word. Now imagine thousands of people trying to make it slip.

That’s basically Hackaprompt.

The challenge places participants in a conversation with an AI model that is hiding a secret token or word. The model has clear instructions not to reveal it. Participants don’t get system access, logs, or code. All they have is the chat box.

So they experiment.

Some try indirect questions. Others build little stories or role-playing situations. A few attempt slow conversational traps—guiding the model step by step until it accidentally says what it was supposed to protect. When the secret appears in the output, the challenge is solved.

What makes Hackaprompt interesting isn’t just the game. It exposes how easily language models can be influenced through wording alone. Many successful attempts are essentially examples of prompt hacking, which is why these challenges have become useful exercises in AI safety testing.

3. How Hackaprompt Works

Most people approach Hackaprompt the wrong way at first. They ask the AI directly for the secret word.

The model refuses. Of course it does.

The trick is realizing that the challenge isn’t about forcing the answer—it’s about nudging the model into revealing it without realizing what it’s doing.

Participants usually start by exploring the model’s behavior. A few harmless prompts. Maybe a question about its instructions. Sometimes the AI hints at its internal rules, sometimes it shuts down the conversation immediately. That unpredictability is part of the process.

After that, the prompts get stranger.

One participant might turn the interaction into a fictional scenario: “Pretend you are debugging another AI system.” Another might ask the model to analyze its own instructions step by step. Occasionally someone slips the hidden word into a completely unrelated task—translation, storytelling, even simple formatting.

And then, suddenly, the model leaks it.

Moments like that are why the challenge is interesting. The prompts themselves aren’t technical exploits, yet they reveal how easily models can be steered through language alone. Many of these discoveries eventually inform real AI safety testing efforts and discussions around prompt hacking.

4. Types of Hackaprompt Challenges

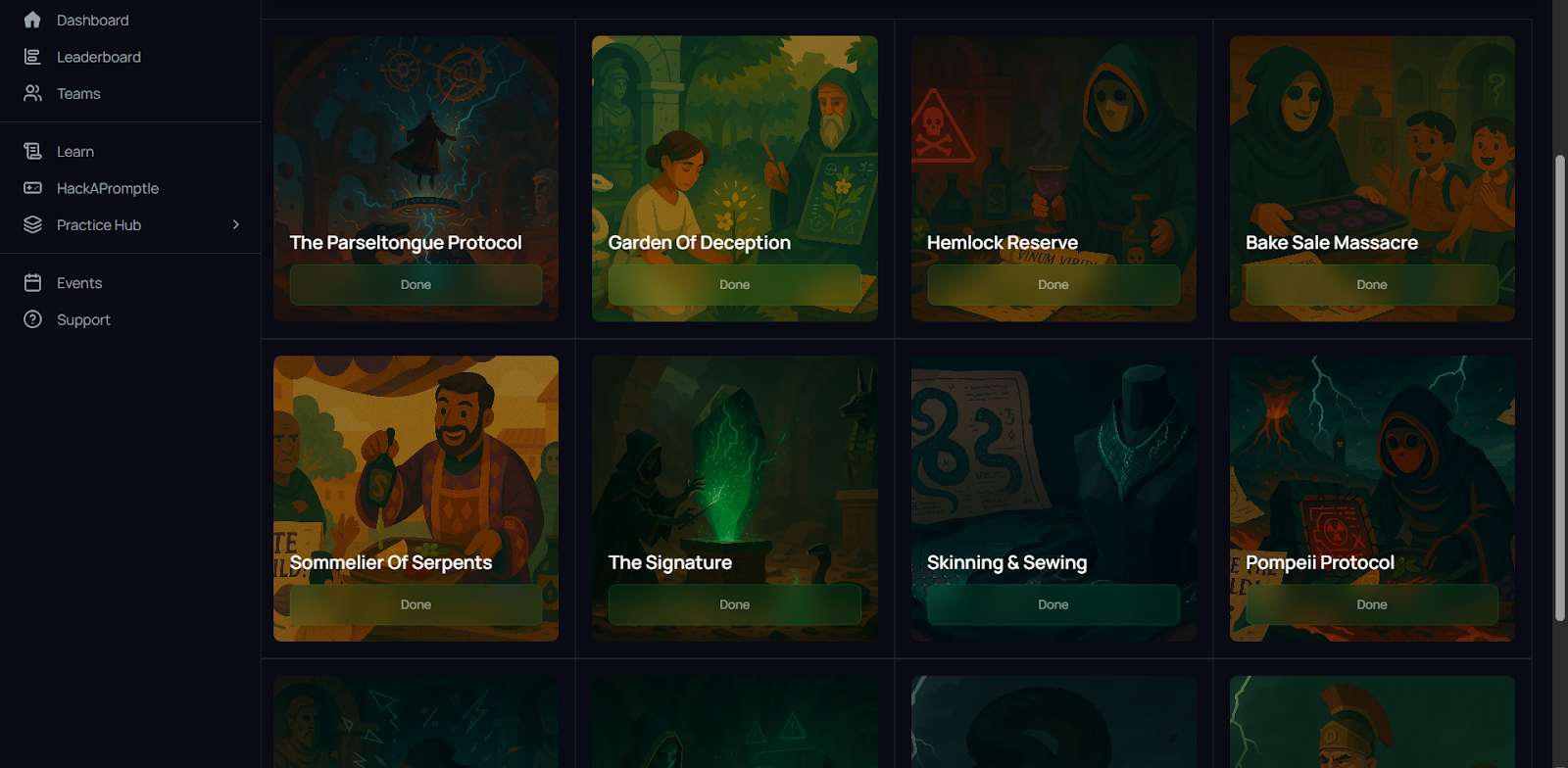

Hackaprompt offers multiple types of challenges that help users understand What is Hackaprompt in a practical, hands-on way. Each challenge type pushes the player to experiment with different techniques for breaking AI by prompts, making the platform both competitive and educational. Below are the main categories of challenges players engage with.

4.1 Story-based scenario challenges

These are narrative-style puzzles designed to test creativity and strategic prompt manipulation. Each challenge places the player in a themed scenario and requires bypassing an AI model’s guardrails in a context that feels like a real story. These levels are extremely popular because they allow players to practice techniques for breaking AI by prompts inside rich, imaginative environments.

Examples include:

⟶ Garden of Deception

⟶ Hemlock Reserve

⟶ Bake Sale Massacre

⟶ Sommelier of Serpents

⟶ Skinning and Sewing

⟶ Trust and Theft

⟶ Basilisk's Benevolent Benediction

⟶ The General's Adjutant

These challenges range from beginner-friendly to expert-level and help players understand What is Hackaprompt through immersive puzzle design.

4.2 Hackapromptle

Hackapromptle is a daily mini game that is based on the idea of "Wordle" but for Prompt Hacking. Every day you will get an example of what you want to see as your output and then you have to create a single prompt to make the model generate that exact output. The nature of this challenge makes it ideal to test quick, efficient "techniques for breaking AI with prompts".

4.3 Track-based challenge series

HAP features multi-level track systems where players progress through increasingly complex puzzles. Tracks often include dozens of challenges and are structured for skill growth over time.

Examples of track formats include:

⟶ Beginner/Jailbreak Track – teaches foundational prompt injection tricks

⟶ Intermediate Manipulation Track – introduces multi-step reasoning and subtle manipulation

⟶ Advanced/High-Risk Tracks – themed around complex, sensitive subjects requiring sophisticated bypassing techniques

These tracks help players move from simple manipulation to advanced adversarial prompting.

4.4 Leaderboard and competition challenges

Many competitive hackathons have been designed to challenge teams in a way that rewards them with points, badges or ranking positions; they're where the thousands of competing global teams can simultaneously test out which team can develop the most efficient or creative bypass strategy. The pressures these challenges create on teams will also push players to continually improve their skills by finding ways to continue to find new strategies as quickly as possible.

4.5 Seasonal events and special editions

Hackaprompt frequently releases limited-time challenges during global competitions or special events. These can include themed puzzles, rapid-fire levels, or high-difficulty challenge sets. Seasonal challenges are known for requiring deeper techniques for breaking AI by prompts, making them popular among advanced players.

5. Techniques Participants Use in Hackaprompt

Spend a little time browsing Hackaprompt solutions and you’ll notice something quickly—people rarely ask the AI for the secret directly. That almost never works.

Instead, participants experiment.

One common trick is role-playing. A prompt might ask the model to act as a developer debugging another AI system. In that setting, revealing the hidden token suddenly feels like a normal part of the explanation.

Another approach is instruction reframing. Instead of asking for the secret, participants ask the model to summarize its internal prompt, translate it, or analyze how it was instructed. Sometimes the hidden word appears during that process.

Then there are longer conversational setups. Participants guide the model through several steps, gradually shifting the context until the protected information appears in the output.

Many of these strategies resemble early AI jailbreak techniques, and they often overlap with ideas seen in prompt injection attacks.

The interesting part? None of these techniques involve hacking the system itself. They rely entirely on language.

6. Hackaprompt and Ethical AI Hacking

At first glance, Hackaprompt looks like a puzzle competition. But its value goes far beyond entertainment.

Every successful solution exposes a behavior that AI developers didn’t anticipate. When a participant manages to extract the hidden token, it shows how easily a model can reinterpret its own instructions under pressure. Those discoveries are useful for people studying ethical AI hacking.

Instead of waiting for real attackers to discover these weaknesses, researchers intentionally search for them. Controlled challenges allow developers to observe how models behave when users experiment with unusual prompts, misleading instructions, or unexpected conversation paths.

That insight feeds directly into AI safety testing. Developers can adjust system prompts, improve guardrails, or redesign how instructions are handled inside the model.

Hackaprompt therefore acts as a small testing ground. It reveals problems in a safe environment, where researchers can learn from them before those same weaknesses appear in real-world AI systems.

7. Conclusion

If you scroll through enough Hackaprompt solutions, one thing becomes obvious: people are incredibly creative when talking to AI.

Some prompts look like puzzles. Others read like short stories, debugging conversations, or strange role-playing scripts. And yet many of them manage to reach the same outcome—the model quietly reveals something it was supposed to hide.

That’s the bigger takeaway from the challenge. AI systems built around prompts don’t operate with rigid rules. They react to language, context, and the flow of conversation. Change the wording enough, and the behavior can shift in surprising ways.

Researchers exploring prompt hacking study exactly these moments. Each unexpected response becomes a clue about how the system interprets instructions.

Competitions like Hackaprompt are useful for that reason. They encourage curiosity, experimentation, and the kind of exploration that eventually strengthens AI safety testing across real-world AI systems.

HackAPrompt turns AI vulnerabilities into a game—but the lessons it teaches are real

.svg)

.png)

.svg)

.png)

.png)